This post is part of our ongoing series on running MariaDB on Kubernetes. We’ve published a number of articles about running MariaDB on Kubernetes for specific platforms and for specific use cases. If you are looking for a specific Kubernetes platform, check out these related articles.

Running HA MariaDB on Google Kubernetes Engine (GKE)

Running HA MariaDB on Amazon Elastic Container Service for Kubernetes (EKS)

Running HA MariaDB on Red Hat OpenShift

Running HA MariaDB with Rancher Kubernetes Engine (RKE)

And now, onto the post…

We’ve been excited to partner with Microsoft, including enabling innovative customers like Beco to build an IoT cloud on Microsoft Azure. Working with the Azure Kubernetes Service (AKS) team and Brendan Burns, distinguished engineer at Microsoft, we’re excited to showcase how Portworx runs on AKS to provide seamless support for any Kubernetes customer. Brendan and Eric Han, our VP of Product, were both part of the original Kubernetes team at Google and it is exciting to watch Kubernetes mature and extend into the enterprise.

Today’s post will look at how to run an HA MariaDB database on Azure Kubernetes Service (AKS), a managed Kubernetes offering from Microsoft, which makes it easy to create, configure, and manage a cluster of virtual machines that are preconfigured to run containerized applications.

Portworx is a cloud-native storage platform to run persistent workloads deployed on a variety of orchestration engines including Kubernetes. With Portworx, customers can manage the database of their choice on any infrastructure using any container scheduler. It provides a single data management layer for all stateful services, no matter where they run.

In summary, to run HA MariaDB on Azure you need to:

- Create an AKS cluster

- Provision storage nodes with Managed Disks in order to allow for compute nodes to scale independently of storage

- Install a cloud native storage solution like Portworx as a DaemonSet on AKS

- Create a storage class defining your storage requirements like replication factor, snapshot policy, and performance profile

- Deploy MariaDB using Kubernetes

- Test failover by killing or cordoning a node in your cluster and confirming that data is still accessible

- Dynamically resize the MariaDB volume

How to set up an AKS cluster

Portworx is fully supported on Azure Kubernetes Service. Run the following commands to configure a 3 node cluster in the West Europe region. More on Azure AKS is available here.

$ az group create --name px --location westeurope
$ az aks install-cli
$ az aks create --resource-group px --name pxdemo --node-count 3 --generate-ssh-keys
$ az aks get-credentials --resource-group px --name pxdemo

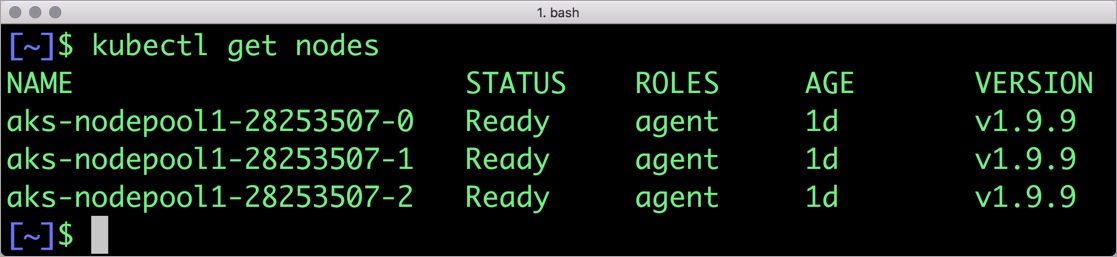
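Cluster creation can take several minutes. As a minimal sketch, assuming the px resource group and pxdemo cluster name used above, a small helper can report whether AKS has finished provisioning. The function name aks_provisioned is our own and not part of the Azure CLI; it only wraps az aks show.

```shell
# aks_provisioned (hypothetical helper): succeed only when the AKS cluster
# reports a provisioningState of "Succeeded".
#   $1 = resource group, $2 = cluster name
aks_provisioned() {
  aks_state=$(az aks show --resource-group "$1" --name "$2" \
    --query provisioningState --output tsv)
  [ "$aks_state" = "Succeeded" ]
}
```

For example, `aks_provisioned px pxdemo && echo "cluster is ready"` prints the message only once provisioning has completed.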

When the cluster is ready, verify it with the following command:

$ kubectl get nodes
NAME                       STATUS    ROLES     AGE       VERSION
aks-nodepool1-28253507-0   Ready     agent     1d        v1.9.9
aks-nodepool1-28253507-1   Ready     agent     1d        v1.9.9
aks-nodepool1-28253507-2   Ready     agent     1d        v1.9.9
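If you would rather script this check than read the table, the sketch below counts the nodes whose STATUS column is exactly Ready. Both function names are our own, and the polling interval and retry count are arbitrary assumptions rather than recommended values.

```shell
# count_ready_nodes: read `kubectl get nodes --no-headers` output on stdin
# and print how many nodes have a STATUS of exactly "Ready"
# (a cordoned node shows "Ready,SchedulingDisabled" and is not counted).
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# wait_for_nodes (hypothetical helper): poll until at least $1 nodes are Ready.
#   $1 = number of nodes wanted, $2 = number of attempts (default 30)
wait_for_nodes() {
  wfn_want="$1"
  wfn_tries="${2:-30}"
  wfn_i=0
  while [ "$wfn_i" -lt "$wfn_tries" ]; do
    wfn_ready=$(kubectl get nodes --no-headers | count_ready_nodes)
    if [ "$wfn_ready" -ge "$wfn_want" ]; then
      return 0
    fi
    wfn_i=$((wfn_i + 1))
    sleep 10
  done
  return 1
}
```

Calling `wait_for_nodes 3` returns successfully once all three nodes of this cluster are schedulable.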

Provision Azure Storage Nodes

Running storage nodes separately from compute nodes will allow us to independently scale compute and storage resources. We use Portworx to manage the storage nodes and also access storage from the compute nodes.

Follow these steps to create three storage node VMs. Afterwards, we attach the Managed Disk to the storage nodes in the Azure portal using these instructions. Finally, we ssh into the VM and install Portworx on each of the storage nodes using the commands below.

latest_stable=$(curl -fsSL 'https://install.portworx.com/1.4/?type=dock&stork=false' | awk '/image: / {print $2}')

# Download OCI bits (reminder, you will still need to run `px-runc install ..` after this step)
sudo docker run --entrypoint /runc-entry-point.sh \
    --rm -i --privileged=true \
    -v /opt/pwx:/opt/pwx -v /etc/pwx:/etc/pwx \
    $latest_stable

# Basic installation where
sudo /opt/pwx/bin/px-runc install -c CLUSTER-NAME \
    -k etcd://[etcd-service]:2379 \
    -a -f

# Reload systemd configurations, enable and start Portworx service
sudo systemctl daemon-reload
sudo systemctl enable portworx
sudo systemctl start portworx

For the CLUSTER-NAME, use the same string in each of your installs for that cluster.

Installing Portworx in AKS

Installing Portworx on Azure Kubernetes Service is not very different from installing it on a Kubernetes cluster set up through kops. The Portworx AKS documentation describes the steps involved in running the Portworx cluster in a Kubernetes environment deployed in Azure.

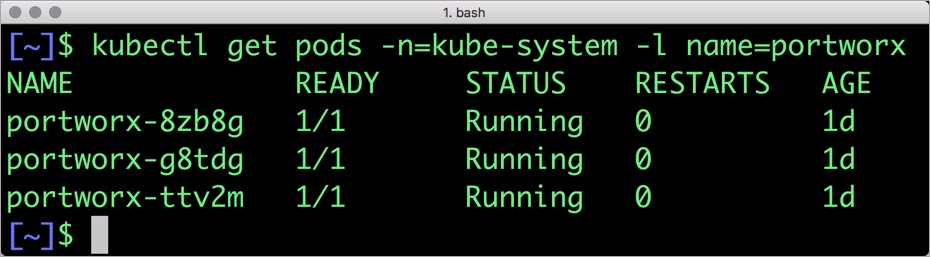

The Portworx cluster needs to be up and running on AKS before proceeding to the next step. The kube-system namespace should have the Portworx pods in the Running state.

$ kubectl get pods -n=kube-system -l name=portworx
NAME             READY     STATUS    RESTARTS   AGE
portworx-8zb8g   1/1       Running   0          1d
portworx-g8tdg   1/1       Running   0          1d
portworx-ttv2m   1/1       Running   0          1d

Creating a storage class for MariaDB

Once the AKS cluster is up and running, and Portworx is installed and configured, we will deploy a highly available MariaDB database.

Through storage class objects, an admin can define different classes of Portworx volumes that are offered in a cluster. These classes will be used during the dynamic provisioning of volumes. The Storage Class defines the replication factor, I/O profile (e.g., for a database or a CMS), and priority (e.g., SSD or HDD). These parameters impact the availability and throughput of workloads and can be specified for each volume. This is important because a production database will have different requirements than a development Jenkins cluster.

In this example, the storage class that we deploy has a replication factor of 3, with the I/O profile set to “db_remote” and the priority set to “high.” This means that the storage will be optimized for low latency database workloads like MariaDB and automatically placed on the highest performance storage available in the cluster. Notice that we also specify the filesystem, xfs, in the storage class.

$ cat > px-mariadb-sc.yaml << EOF
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: px-ha-sc
provisioner: kubernetes.io/portworx-volume
parameters:
  repl: "3"
  io_profile: "db_remote"
  priority_io: "high"
  fs: "xfs"
EOF

$ kubectl create -f px-mariadb-sc.yaml
storageclass.storage.k8s.io "px-ha-sc" created

$ kubectl get sc
NAME                PROVISIONER                     AGE
px-ha-sc            kubernetes.io/portworx-volume   10s
stork-snapshot-sc   stork-snapshot                  3d

Creating a MariaDB PVC on Kubernetes

We can now create a Persistent Volume Claim (PVC) based on the Storage Class. Thanks to dynamic provisioning, the claim will be bound without our having to provision a Persistent Volume (PV) explicitly.

$ cat > px-mariadb-pvc.yaml << EOF
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: px-mariadb-pvc
  annotations:
    volume.beta.kubernetes.io/storage-class: px-ha-sc
spec:
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
EOF

$ kubectl create -f px-mariadb-pvc.yaml

persistentvolumeclaim "px-mariadb-pvc" created

$ kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

px-mariadb-pvc Bound pvc-d70785ca-9a86-11e8-a409-1a6d80f90d97 1Gi RWO px-ha-sc 8s
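Dynamic provisioning is asynchronous, so a script that creates the claim may need to wait before using it. The sketch below polls the claim's status.phase field and, once it is Bound, prints the name of the backing volume from spec.volumeName. The helper name, retry count, and two second interval are our own assumptions.

```shell
# wait_for_pvc_bound (hypothetical helper): poll a PVC until it is Bound,
# then print the name of the PersistentVolume it is bound to.
#   $1 = PVC name, $2 = number of attempts (default 30)
wait_for_pvc_bound() {
  wpb_pvc="$1"
  wpb_tries="${2:-30}"
  wpb_i=0
  while [ "$wpb_i" -lt "$wpb_tries" ]; do
    wpb_phase=$(kubectl get pvc "$wpb_pvc" -o jsonpath='{.status.phase}')
    if [ "$wpb_phase" = "Bound" ]; then
      kubectl get pvc "$wpb_pvc" -o jsonpath='{.spec.volumeName}'
      return 0
    fi
    wpb_i=$((wpb_i + 1))
    sleep 2
  done
  return 1
}
```

For instance, `VOL=$(wait_for_pvc_bound px-mariadb-pvc)` captures the volume name without relying on the column layout of kubectl get pvc.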

Deploying MariaDB on AKS

Finally, let’s create a MariaDB instance as a Kubernetes deployment object. For simplicity’s sake, we will just be deploying a single MariaDB pod. Because Portworx provides synchronous replication for High Availability, a single MariaDB instance might be the best deployment option for your MariaDB database. Portworx can also provide backing volumes for a multi-node MariaDB cluster. The choice is yours.

$ cat > px-mariadb-app.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mariadb
spec:
  selector:
    matchLabels:
      app: mariadb
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  replicas: 1
  template:
    metadata:
      labels:
        app: mariadb
    spec:
      schedulerName: stork
      containers:
      - name: mariadb
        image: mariadb:latest
        imagePullPolicy: "Always"
        env:
        - name: MYSQL_ROOT_PASSWORD
          value: password
        ports:
        - containerPort: 3306
        volumeMounts:
        - mountPath: /var/lib/mysql
          name: mariadb-data
      volumes:
      - name: mariadb-data
        persistentVolumeClaim:
          claimName: px-mariadb-pvc
EOF

$ kubectl create -f px-mariadb-app.yaml
deployment.extensions "mariadb" created

The MariaDB deployment defined above is explicitly associated with the PVC px-mariadb-pvc, created in the previous step.

This deployment creates a single pod running MariaDB backed by Portworx.

$ kubectl get pods
NAME                      READY     STATUS    RESTARTS   AGE
mariadb-dff54d66d-m9r6q   1/1       Running   0          6s

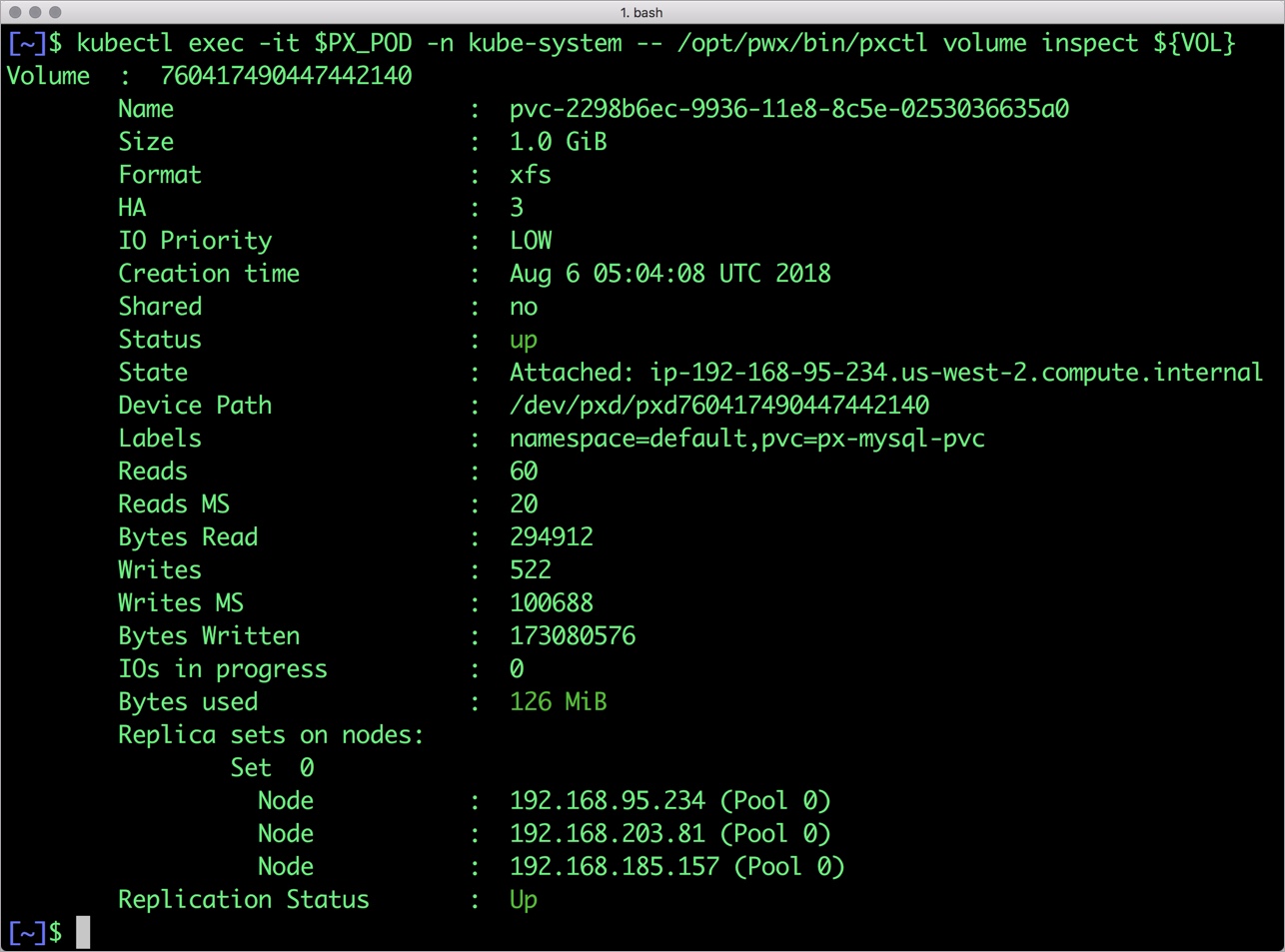

We can inspect the Portworx volume by running the pxctl tool inside one of the Portworx pods.

$ VOL=`kubectl get pvc | grep px-mariadb-pvc | awk '{print $3}'`

$ PX_POD=$(kubectl get pods -l name=portworx -n kube-system -o jsonpath='{.items[0].metadata.name}')

$ kubectl exec -it $PX_POD -n kube-system -- /opt/pwx/bin/pxctl volume inspect ${VOL}

Volume : 472432194854453148

Name : pvc-d70785ca-9a86-11e8-a409-1a6d80f90d97

Size : 1.0 GiB

Format : xfs

HA : 3

IO Priority : LOW

Creation time : Aug 7 21:14:20 UTC 2018

Shared : no

Status : up

State : Attached: aks-nodepool1-28253507-2 (10.240.0.6)

Device Path : /dev/pxd/pxd472432194854453148

Labels : namespace=default,pvc=px-mariadb-pvc

Reads : 63

Reads MS : 132

Bytes Read : 319488

Writes : 505

Writes MS : 58584

Bytes Written : 166674432

IOs in progress : 0

Bytes used : 121 MiB

Replica sets on nodes:

Set 0

Node : 10.240.0.5 (Pool 0)

Node : 10.240.0.4 (Pool 0)

Node : 10.240.0.6 (Pool 0)

Replication Status : Up

Volume consumers :

- Name : mariadb-654cc68f68-khnpq (f65339c6-9a86-11e8-a409-1a6d80f90d97) (Pod)

Namespace : default

Running on : aks-nodepool1-28253507-2

Controlled by : mariadb-654cc68f68 (ReplicaSet)

The output from the above command confirms that the volume backing the MariaDB database instance was created with three replicas.

Failing over MariaDB pod on Kubernetes

Populating sample data

Let’s populate the database with some sample data.

We will first find the pod that’s running MariaDB to access the shell.

$ POD=`kubectl get pods -l app=mariadb | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mysql -uroot -ppassword

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 11

Server version: 10.4.6-MariaDB-1:10.4.6+maria~bionic mariadb.org binary distribution

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

Now that we are inside the shell, we can create a sample database and table.

MariaDB> CREATE DATABASE `classicmodels`;

MariaDB> USE `classicmodels`;

MariaDB> CREATE TABLE `offices` (

`officeCode` varchar(10) NOT NULL,

`city` varchar(50) NOT NULL,

`phone` varchar(50) NOT NULL,

`addressLine1` varchar(50) NOT NULL,

`addressLine2` varchar(50) DEFAULT NULL,

`state` varchar(50) DEFAULT NULL,

`country` varchar(50) NOT NULL,

`postalCode` varchar(15) NOT NULL,

`territory` varchar(10) NOT NULL,

PRIMARY KEY (`officeCode`)

) ENGINE=InnoDB DEFAULT CHARSET=latin1;

Query OK, 0 rows affected (0.227 sec)

MariaDB> insert into `offices`(`officeCode`,`city`,`phone`,`addressLine1`,`addressLine2`,`state`,`country`,`postalCode`,`territory`) values

('1','San Francisco','+1 650 219 4782','100 Market Street','Suite 300','CA','USA','94080','NA'),

('2','Boston','+1 215 837 0825','1550 Court Place','Suite 102','MA','USA','02107','NA'),

('3','NYC','+1 212 555 3000','523 East 53rd Street','apt. 5A','NY','USA','10022','NA'),

('4','Paris','+33 14 723 4404','43 Rue Jouffroy D\'abbans',NULL,NULL,'France','75017','EMEA'),

('5','Tokyo','+81 33 224 5000','4-1 Kioicho',NULL,'Chiyoda-Ku','Japan','102-8578','Japan'),

('6','Sydney','+61 2 9264 2451','5-11 Wentworth Avenue','Floor #2',NULL,'Australia','NSW 2010','APAC'),

('7','London','+44 20 7877 2041','25 Old Broad Street','Level 7',NULL,'UK','EC2N 1HN','EMEA');

Query OK, 7 rows affected (0.039 sec)

Records: 7 Duplicates: 0 Warnings: 0

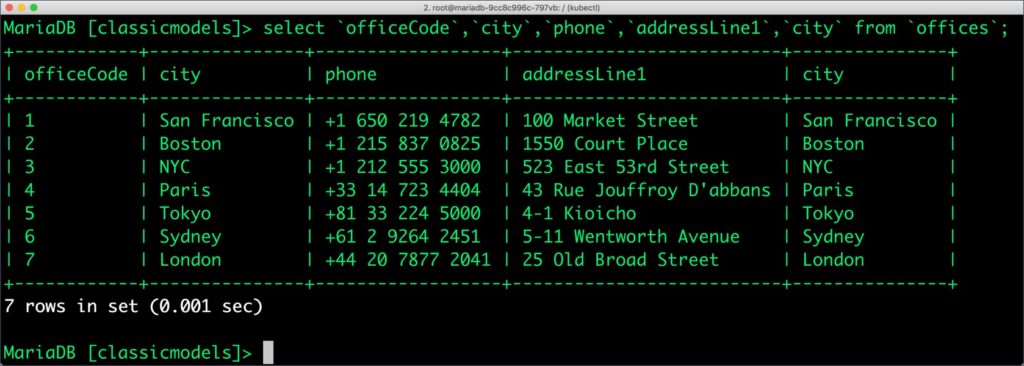

Let’s run a few queries on the table.

MariaDB> select `officeCode`,`city`,`phone`,`addressLine1`,`city` from `offices`;
+------------+---------------+------------------+--------------------------+---------------+
| officeCode | city          | phone            | addressLine1             | city          |
+------------+---------------+------------------+--------------------------+---------------+
| 1          | San Francisco | +1 650 219 4782  | 100 Market Street        | San Francisco |
| 2          | Boston        | +1 215 837 0825  | 1550 Court Place         | Boston        |
| 3          | NYC           | +1 212 555 3000  | 523 East 53rd Street     | NYC           |
| 4          | Paris         | +33 14 723 4404  | 43 Rue Jouffroy D'abbans | Paris         |
| 5          | Tokyo         | +81 33 224 5000  | 4-1 Kioicho              | Tokyo         |
| 6          | Sydney        | +61 2 9264 2451  | 5-11 Wentworth Avenue    | Sydney        |
| 7          | London        | +44 20 7877 2041 | 25 Old Broad Street      | London        |
+------------+---------------+------------------+--------------------------+---------------+
7 rows in set (0.01 sec)

Find all the offices in the USA.

MariaDB [classicmodels]> select `officeCode`, `city`, `phone` from `offices` where `country` = "USA";
+------------+---------------+-----------------+
| officeCode | city          | phone           |
+------------+---------------+-----------------+
| 1          | San Francisco | +1 650 219 4782 |
| 2          | Boston        | +1 215 837 0825 |
| 3          | NYC           | +1 212 555 3000 |
+------------+---------------+-----------------+
3 rows in set (0.00 sec)

Exit from the MariaDB shell to return to the host.

Simulating node failure

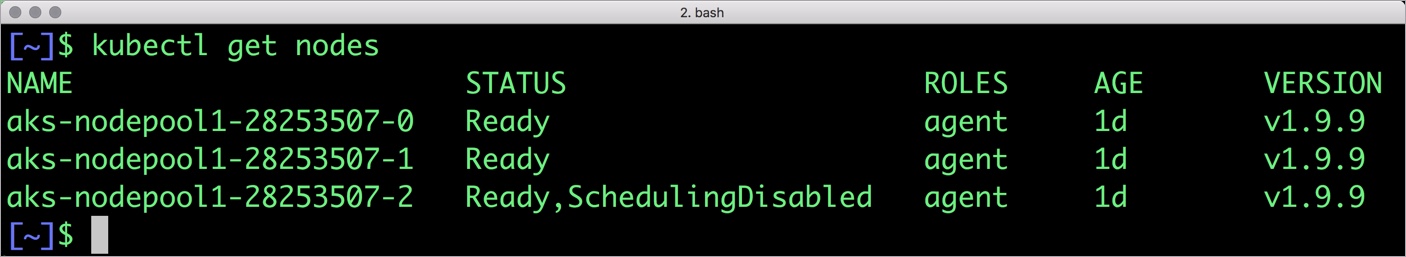

Now, let’s simulate the node failure by cordoning off the node on which MariaDB is running.

$ NODE=`kubectl get pods -l app=mariadb -o wide | grep -v NAME | awk '{print $7}'`

$ kubectl cordon ${NODE}

node "aks-nodepool1-28253507-2" cordoned

The above command disabled scheduling on one of the nodes.

$ kubectl get nodes
NAME                       STATUS                     ROLES     AGE       VERSION
aks-nodepool1-28253507-0   Ready                      agent     1d        v1.9.9
aks-nodepool1-28253507-1   Ready                      agent     1d        v1.9.9
aks-nodepool1-28253507-2   Ready,SchedulingDisabled   agent     1d        v1.9.9

Now, let’s go ahead and delete the MariaDB pod.

$ POD=`kubectl get pods -l app=mariadb -o wide | grep -v NAME | awk '{print $1}'`

$ kubectl delete pod ${POD}

pod "mariadb-dff54d66d-m9r6q" deleted

As soon as the pod is deleted, Kubernetes recreates it on another node. Storage Orchestrator for Kubernetes (STORK), a Portworx-contributed open source storage scheduler, steers the new pod to a node that holds a replica of the data, so the pod stays co-located with its storage.

Let’s verify this by running the command below. We will notice that a new pod has been created and scheduled on a different node.

$ kubectl get pods -l app=mariadb -o wide
NAME                      READY     STATUS    RESTARTS   AGE       IP               NODE
mariadb-dff54d66d-tzvjw   1/1       Running   0          15s       192.168.86.169   ip-192-168-95-234.us-west-2.compute.internal

$ kubectl uncordon ${NODE}

node "aks-nodepool1-28253507-2" uncordoned
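The same check can be scripted. As a rough sketch, the helpers below read the node name from the pod's spec.nodeName field rather than from a column position in the table output, and compare it with the node that was cordoned. The function names are our own, and the sketch assumes a single pod matches the label selector.

```shell
# pod_node: print the node hosting the first pod that matches a label selector.
#   $1 = label selector, e.g. app=mariadb
pod_node() {
  kubectl get pods -l "$1" -o jsonpath='{.items[0].spec.nodeName}'
}

# assert_rescheduled (hypothetical helper): succeed only if the pod now runs
# on a node other than the one it was evicted from.
#   $1 = label selector, $2 = name of the previously used (cordoned) node
assert_rescheduled() {
  ar_current=$(pod_node "$1")
  [ -n "$ar_current" ] && [ "$ar_current" != "$2" ]
}
```

With the NODE variable set earlier, `assert_rescheduled app=mariadb "${NODE}" && echo "pod moved"` confirms the failover from a script.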

Finally, let’s verify that the data is still available.

Let’s find the name of the new pod, run the ‘exec’ command, and then access the MariaDB shell.

$ POD=`kubectl get pods -l app=mariadb | grep Running | grep 1/1 | awk '{print $1}'`
$ kubectl exec -it $POD -- mysql -uroot -ppassword
Welcome to the MariaDB monitor. Commands end with ; or \g.
Your MariaDB connection id is 8
Server version: 10.4.6-MariaDB-1:10.4.6+maria~bionic mariadb.org binary distribution
Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.
Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.
MariaDB [(none)]>

We will query the database to verify that the data is intact.

MariaDB [(none)]> USE `classicmodels`;
MariaDB [classicmodels]> select `officeCode`, `city`, `phone` from `offices` where `country` = "USA";
+------------+---------------+-----------------+
| officeCode | city          | phone           |
+------------+---------------+-----------------+
| 1          | San Francisco | +1 650 219 4782 |
| 2          | Boston        | +1 215 837 0825 |
| 3          | NYC           | +1 212 555 3000 |
+------------+---------------+-----------------+
3 rows in set (0.00 sec)

Observe that the database table is still there and all the content is intact! Exit from the client shell to return to the host.

Performing Storage Operations on MariaDB

After testing end-to-end failover of the database, let’s perform StorageOps on our AKS cluster.

Expanding the Kubernetes Volume with no downtime

The Portworx volume that we created at the beginning is currently 1GiB in size. We will now double its capacity.

First, let’s get the volume name and inspect it through the pxctl tool.

If you have access, SSH into one of the nodes and run the following command.

$ POD=`/opt/pwx/bin/pxctl volume list --label pvc=px-mariadb-pvc | grep -v ID | awk '{print $1}'`

$ /opt/pwx/bin/pxctl v i $POD

Volume : 472432194854453148

Name : pvc-d70785ca-9a86-11e8-a409-1a6d80f90d97

Size : 1.0 GiB

Format : xfs

HA : 3

IO Priority : LOW

Creation time : Aug 7 21:14:20 UTC 2018

Shared : no

Status : up

State : Attached: aks-nodepool1-28253507-2 (10.240.0.6)

Device Path : /dev/pxd/pxd472432194854453148

Labels : namespace=default,pvc=px-mariadb-pvc

Reads : 63

Reads MS : 132

Bytes Read : 319488

Writes : 557

Writes MS : 59076

Bytes Written : 175054848

IOs in progress : 0

Bytes used : 126 MiB

Replica sets on nodes:

Set 0

Node : 10.240.0.5 (Pool 0)

Node : 10.240.0.4 (Pool 0)

Node : 10.240.0.6 (Pool 0)

Replication Status : Up

Volume consumers :

- Name : mariadb-654cc68f68-khnpq (f65339c6-9a86-11e8-a409-1a6d80f90d97) (Pod)

Namespace : default

Running on : aks-nodepool1-28253507-2

Controlled by : mariadb-654cc68f68 (ReplicaSet)

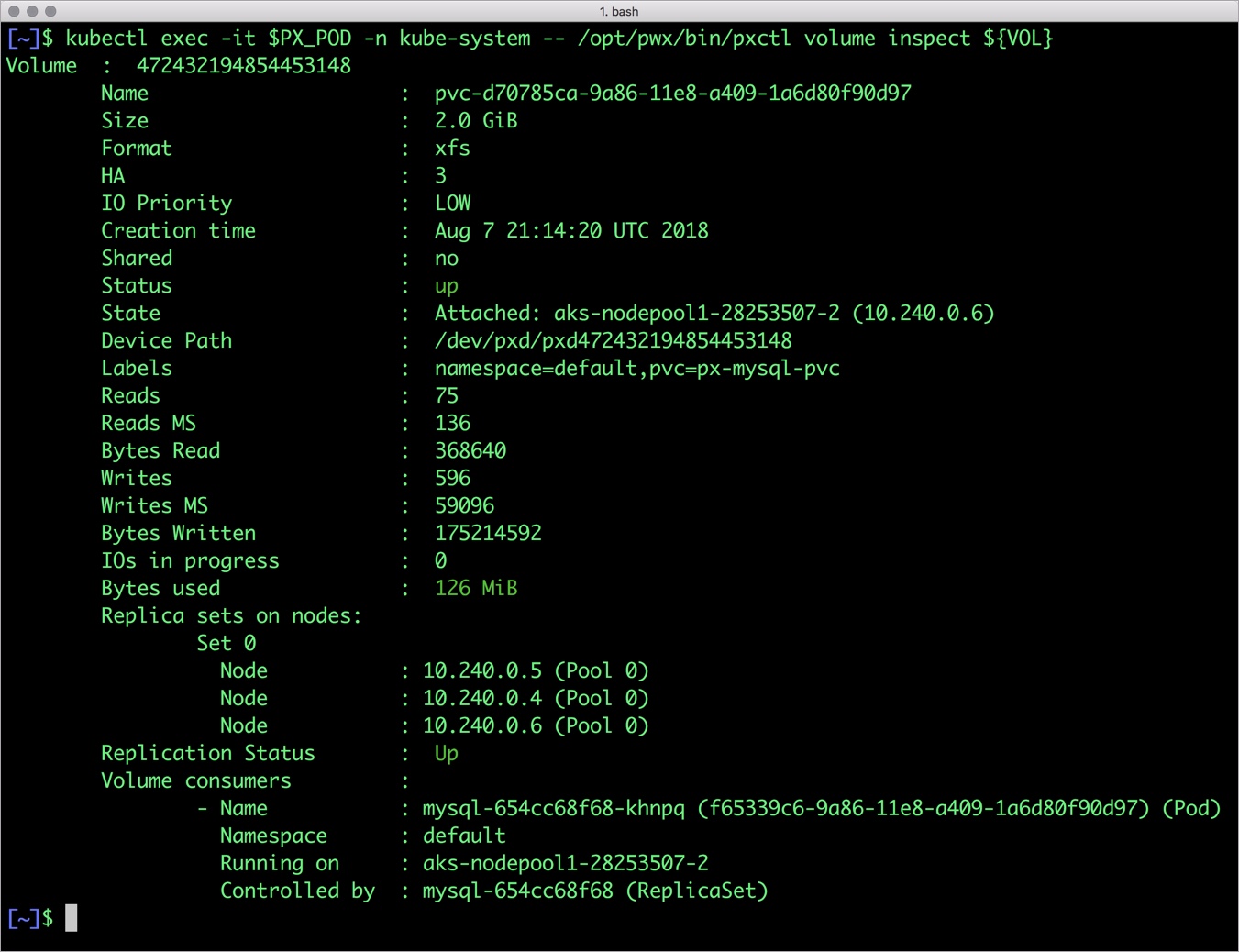

Notice the current Portworx volume. It is 1GiB. Let’s expand it to 2GiB.

$ /opt/pwx/bin/pxctl volume update $POD --size=2
Update Volume: Volume update successful for volume 472432194854453148

Check the new volume size.

$ /opt/pwx/bin/pxctl v i $POD
Volume : 472432194854453148
Name : pvc-d70785ca-9a86-11e8-a409-1a6d80f90d97
Size : 2.0 GiB
Format : xfs
HA : 3
IO Priority : LOW
Creation time : Aug 7 21:14:20 UTC 2018
Shared : no
Status : up
State : Attached: aks-nodepool1-28253507-2 (10.240.0.6)
Device Path : /dev/pxd/pxd472432194854453148
Labels : namespace=default,pvc=px-mariadb-pvc
Reads : 75
Reads MS : 136
Bytes Read : 368640
Writes : 596
Writes MS : 59096
Bytes Written : 175214592
IOs in progress : 0
Bytes used : 126 MiB
Replica sets on nodes:
Set 0
Node : 10.240.0.5 (Pool 0)
Node : 10.240.0.4 (Pool 0)
Node : 10.240.0.6 (Pool 0)
Replication Status : Up
Volume consumers :
- Name : mariadb-654cc68f68-khnpq (f65339c6-9a86-11e8-a409-1a6d80f90d97) (Pod)
Namespace : default
Running on : aks-nodepool1-28253507-2
Controlled by : mariadb-654cc68f68 (ReplicaSet)
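To confirm the resize from a script rather than by eye, the Size field can be pulled out of the inspect output. This is a minimal sketch that assumes the "Size : N GiB" line format shown above; the function name volume_size_gib is our own and not a pxctl feature.

```shell
# volume_size_gib: read `pxctl volume inspect` output on stdin and print the
# numeric part of the "Size" line (for example "2.0" for "Size : 2.0 GiB").
# Lines such as "Bytes used" are ignored because they do not start with "Size".
volume_size_gib() {
  awk -F: '/^[ \t]*Size[ \t]*:/ { gsub(/[^0-9.]/, "", $2); print $2; exit }'
}
```

On a node where pxctl is installed, `/opt/pwx/bin/pxctl v i $POD | volume_size_gib` should then print the new size in GiB.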


Taking Snapshots of a Kubernetes volume and restoring the database

Portworx supports creating snapshots for Kubernetes PVCs.

Let’s create a snapshot for the Kubernetes PVC we created for MariaDB.

$ cat > px-mariadb-snap.yaml << EOF
apiVersion: volumesnapshot.external-storage.k8s.io/v1
kind: VolumeSnapshot
metadata:
  name: px-mariadb-snapshot
  namespace: default
spec:
  persistentVolumeClaimName: px-mariadb-pvc
EOF

$ kubectl create -f px-mariadb-snap.yaml
volumesnapshot.volumesnapshot.external-storage.k8s.io "px-mariadb-snapshot" created

Verify the creation of the volume snapshot.

$ kubectl get volumesnapshot
NAME                  AGE
px-mariadb-snapshot   13s

$ kubectl get volumesnapshotdatas
NAME                                                       AGE
k8s-volume-snapshot-504e9e5f-a6ec-11e9-ab32-7a5327be2608   19s

With the snapshot in place, let’s go ahead and delete the database.

$ POD=`kubectl get pods -l app=mariadb | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mysql -uroot -ppassword

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 9

Server version: 10.4.6-MariaDB-1:10.4.6+maria~bionic mariadb.org binary distribution

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

drop database classicmodels;

Since snapshots are just like volumes, we can use them to start a new instance of MariaDB. Let’s create a new instance of MariaDB by restoring the snapshot data.

$ cat > px-mariadb-snap-pvc.yaml << EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: px-mariadb-snap-clone
  annotations:
    snapshot.alpha.kubernetes.io/snapshot: px-mariadb-snapshot
spec:
  accessModes:
  - ReadWriteOnce
  storageClassName: stork-snapshot-sc
  resources:
    requests:
      storage: 2Gi
EOF

$ kubectl create -f px-mariadb-snap-pvc.yaml

persistentvolumeclaim "px-mariadb-snap-clone" created

From the new PVC, we will create a MariaDB pod.

$ cat > px-mariadb-snap-restore.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mariadb-snap
spec:
  selector:
    matchLabels:
      app: mariadb-snap
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  replicas: 1
  template:
    metadata:
      labels:
        app: mariadb-snap
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: px/running
                operator: NotIn
                values:
                - "false"
              - key: px/enabled
                operator: NotIn
                values:
                - "false"
      containers:
      - name: mariadb
        image: mariadb:latest
        imagePullPolicy: "Always"
        env:
        - name: MYSQL_ROOT_PASSWORD
          value: password
        ports:
        - containerPort: 3306
        volumeMounts:
        - mountPath: /var/lib/mysql
          name: mariadb-data
      volumes:
      - name: mariadb-data
        persistentVolumeClaim:
          claimName: px-mariadb-snap-clone
EOF

$ kubectl create -f px-mariadb-snap-restore.yaml
deployment.extensions "mariadb-snap" created

Verify that the new pod is in the running state.

$ kubectl get pods -l app=mariadb-snap
NAME                            READY     STATUS    RESTARTS   AGE
mariadb-snap-655ffd9d67-ff288   1/1       Running   0          15s

Finally, let’s access the sample data created earlier in the walkthrough.

$ POD=`kubectl get pods -l app=mariadb-snap | grep Running | grep 1/1 | awk '{print $1}'`

$ kubectl exec -it $POD -- mysql -uroot -ppassword

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 8

Server version: 10.4.6-MariaDB-1:10.4.6+maria~bionic mariadb.org binary distribution

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

MariaDB [(none)]> USE `classicmodels`;

MariaDB [classicmodels]> select `officeCode`, `city`, `phone` from `offices` where `country` = "USA";

+------------+---------------+-----------------+

| officeCode | city | phone |

+------------+---------------+-----------------+

| 1 | San Francisco | +1 650 219 4782 |

| 2 | Boston | +1 215 837 0825 |

| 3 | NYC | +1 212 555 3000 |

+------------+---------------+-----------------+

3 rows in set (0.00 sec)

Notice that the table is still there with the data intact. We can also push the snapshot to Amazon S3 if we want to create a Disaster Recovery backup in another region. Portworx snapshots also work with any S3 compatible object storage, so the backup can go to a different cloud or even an on-premises data center. Alternatively, we can stretch a single Portworx cluster across two independent Kubernetes clusters for Zero RPO DR for Kubernetes.

Summary

Portworx can easily be deployed on AKS to run stateful workloads in production. Through the integration of STORK, DevOps and StorageOps teams can seamlessly run highly-available database clusters in AKS. They can perform traditional operations such as volume expansion, snapshots, backup and recovery for the cloud-native applications.


Janakiram MSV

Contributor | Certified Kubernetes Administrator (CKA) and Developer (CKAD)