
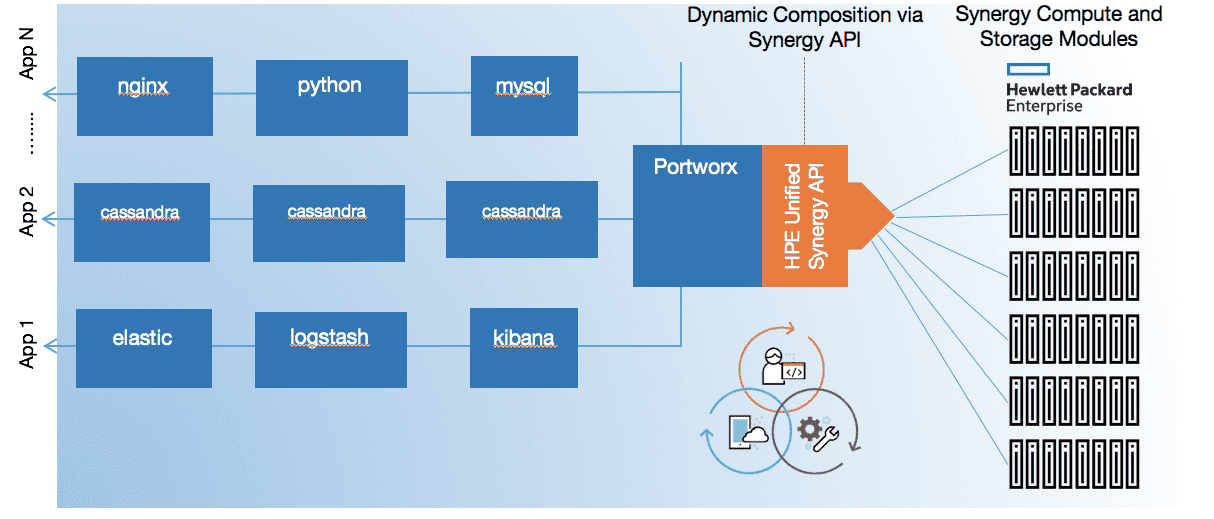

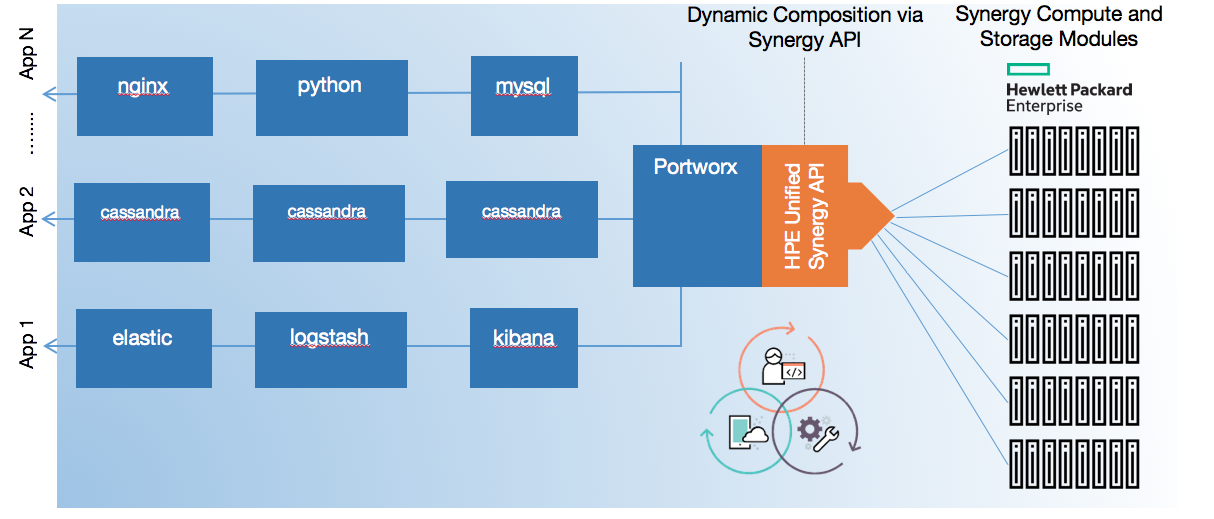

Using Portworx in conjunction with Mesosphere and HPE Synergy, enterprises can build cloud-native data centers that offer the business agility that DevOps needs.

Enterprises today are embracing DevOps-driven application deployment as they seek to improve business agility. Software practices have matured in how applications are architected and deployed. The term “cloud-native applications” has come to describe applications with these characteristics:

- They are not monolithic. Instead, they consist of discrete, logically separable components that are packaged and deployed on their own, usually as containers and in some cases as regular Linux packages.

- The entire application stack should not need to run on the same machine. Components can be scheduled anywhere, on any server or in any zone, and should be able to discover each other in a distributed deployment.

- The application should be able to scale with demand quickly by spinning up parallel instances of a particular piece of compute logic.

- Services that applications depend on for coordinating communication or preserving state should be discoverable and open to create, read, update, and delete (CRUD) operations on demand, programmatically and dynamically, regardless of the underlying physical infrastructure.

Cloud-native applications require dynamic infrastructure that can be controlled programmatically. They are deployed anticipating the need to react to changes in compute and storage demand. AWS was one of the pioneers of this approach, offering features such as Elastic Block Store (EBS) volumes, auto-scaling of compute instances, and a programmatic way of controlling infrastructure.

Portworx Composable Storage for Containers

Portworx is a cloud-native, software-based storage and data management layer designed for containers and schedulers. It provides the type of composable persistent storage that modern applications need. At its core is the Portworx distributed block storage layer, which provides a highly available, enterprise-class SAN implemented in software. It can dynamically reprogram the underlying physical infrastructure based on the application’s needs.

On top of this storage and data management layer, Portworx provides container-granular data services such as a persistent filesystem, S3 interfaces, encrypted volumes, global namespaces, and more. These services are provisioned to containers in coordination with an application scheduler such as Mesosphere or Kubernetes. Furthermore, all of these services can be created, read, updated, and deleted on demand, programmatically, through the orchestration software. In other words, Portworx works behind the scenes: it acts on the runtime intent of DevOps teams to provide virtual storage services, while reconfiguring the hardware underneath to meet application needs.

Portworx’s intelligent data placement algorithms protect an application’s data across racks and regions with failure domains in mind. These algorithms also allow the application to dynamically scale both storage capacity and the number of concurrent instances. All of this is done with container granularity by providing a virtual volume to the container, rather than physically binding an application to an actual disk or LUN on the back-end systems.
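To make the idea of failure-domain-aware placement concrete, here is a minimal Python sketch. It is not Portworx's actual algorithm, which the article does not describe. It assumes a simple policy (spread replicas across racks first, preferring nodes with the most free space), and the node names, rack labels, and the `place_replicas` function are all hypothetical.

```python
from collections import defaultdict


def place_replicas(nodes, repl):
    """Pick `repl` nodes for a volume's replicas, spreading across racks.

    `nodes` is a list of (node_name, rack, free_gb) tuples. This is an
    illustrative policy only, not the placement logic Portworx uses.
    """
    by_rack = defaultdict(list)
    for name, rack, free_gb in nodes:
        by_rack[rack].append((free_gb, name))
    for rack in by_rack:
        by_rack[rack].sort(reverse=True)  # most free space first

    # Visit racks in order of their roomiest node, one replica per rack
    # per pass, so no rack gets a second replica before every rack has one.
    racks = sorted(by_rack, key=lambda r: by_rack[r][0][0], reverse=True)
    chosen = []
    while len(chosen) < repl:
        progressed = False
        for rack in racks:
            if by_rack[rack] and len(chosen) < repl:
                chosen.append(by_rack[rack].pop(0)[1])
                progressed = True
        if not progressed:
            raise ValueError("not enough nodes for the requested replica count")
    return chosen


# Hypothetical four-node cluster spread over three racks.
cluster = [
    ("n1", "rackA", 500),
    ("n2", "rackA", 800),
    ("n3", "rackB", 300),
    ("n4", "rackC", 600),
]
print(place_replicas(cluster, 3))
```

With three replicas and three racks, each replica lands in a different rack, so losing any single rack still leaves two copies of the data.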

Using Portworx, containers, and scheduling software, DevOps teams can now create a composable storage fabric on top of which they deploy applications in a cloud-native, programmatic way, all driven through automation.

Portworx, Mesosphere, HPE Composable Infrastructure

Portworx integrates with Docker and Mesosphere to orchestrate where the applications are run and how the storage services are provided to the containers. To do this, Portworx has data volume hooks with the Docker engine and scheduling hooks with Mesosphere. Using Portworx and Mesosphere, end users can programmatically and dynamically create volumes without admin intervention, as shown in the sample Marathon application snippet below:

```json
"parameters": [
  {
    "key": "volume-driver",
    "value": "pxd"
  },
  {
    "key": "volume",
    "value": "size=100G,repl=3,cos=3,name=mysql_vol:/var/lib/mysql"
  }
],
```
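Because the volume is described entirely by a string in the app definition, it is easy to generate programmatically. The following Python sketch shows one way this might be done. The `px_volume_spec` and `marathon_app` helpers, the app ID `/mysql`, the image tag, and the CPU and memory figures are assumptions made for illustration; only the two `parameters` entries come from the snippet above.

```python
import json


def px_volume_spec(name, mount_path, size="100G", repl=3, cos=3):
    """Build the inline volume string in the format used by the snippet above."""
    options = f"size={size},repl={repl},cos={cos},name={name}"
    return f"{options}:{mount_path}"


def marathon_app(app_id, image, volume_spec, cpus=1.0, mem=1024):
    """Return a Marathon app definition that passes the volume to Docker.

    The resource figures and overall layout are illustrative defaults.
    """
    return {
        "id": app_id,
        "cpus": cpus,
        "mem": mem,
        "instances": 1,
        "container": {
            "type": "DOCKER",
            "docker": {
                "image": image,
                "parameters": [
                    {"key": "volume-driver", "value": "pxd"},
                    {"key": "volume", "value": volume_spec},
                ],
            },
        },
    }


spec = px_volume_spec("mysql_vol", "/var/lib/mysql")
app = marathon_app("/mysql", "mysql:5.7", spec)  # hypothetical app ID and image
print(json.dumps(app, indent=2))
```

The resulting JSON could then be submitted to Marathon in the usual way; generating it from code keeps the size, replication factor, and class of service as ordinary parameters rather than hand-edited strings.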

Before an application is deployed, Mesosphere and Portworx communicate to determine the types of data services that the application needs. With this information, Portworx helps select an appropriate node on which to run the application. Once the container is deployed, Portworx provides a virtual data service volume to the container. As the application consumes data, Portworx reads and writes the data on the appropriate back-end storage arrays. In the event of a node or software failure, Portworx works with Mesosphere to move the container to another node and redeploy the virtual data service to ensure high availability.
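The placement and failover flow described above can be sketched in a few lines of Python. This is a simplified illustration, not the actual negotiation between Mesosphere and Portworx: it assumes the preferred node is a healthy one that already holds a replica of the volume, and the `pick_node` function and node names are hypothetical.

```python
def pick_node(healthy_nodes, replica_nodes):
    """Choose where to run a container.

    Assumed policy: prefer a healthy node that already holds a replica of
    the container's volume, otherwise fall back to any healthy node.
    """
    local = [n for n in healthy_nodes if n in replica_nodes]
    candidates = local or list(healthy_nodes)
    if not candidates:
        raise RuntimeError("no healthy node available")
    return candidates[0]


healthy = ["n1", "n2", "n3", "n4"]
replicas = {"n2", "n3", "n4"}  # nodes holding a copy of the volume

first = pick_node(healthy, replicas)
print("initial placement:", first)

# Simulate a failure of the chosen node and reschedule the container.
healthy.remove(first)
second = pick_node(healthy, replicas)
print("after failover:", second)
```

After the simulated failure, the container moves to another node that still holds a replica, which is the behavior the paragraph above describes at a high level.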

On the back end, Portworx uses the HPE Unified Synergy API to dynamically configure HPE Synergy based on the application’s resource consumption and deployment pattern. Portworx can allocate or deallocate storage blocks based on the application’s consumption.

Mesosphere deploys applications, and Portworx provides data services via Synergy

Portworx configures HPE Synergy to provide a converged platform to the applications. That is, the container’s data appears as if it is local to the node the container is running on. To do this, Portworx instructs Synergy to provision direct-attached storage (DAS) to the server nodes. Sitting on top of this inherently DAS infrastructure, Portworx provides an elastic scale-out block storage layer from which the virtual data services are derived.

Summary

Using Portworx in conjunction with Mesosphere and HPE Synergy, enterprises can build cloud-native data centers that offer the business agility that DevOps needs. As enterprises start rolling out more mature PaaS services to their DevOps users, they need a platform that can be dynamically composed, both in terms of software and hardware. Using technologies like Portworx to provide a composable data fabric highlights the real power of HPE Synergy.
