This post is part of our ongoing series on running Microsoft SQL Server on Kubernetes. We’ve published a number of articles about running Microsoft SQL Server on Kubernetes for specific platforms and for specific use cases. If you are looking for a specific Kubernetes platform, check out these related articles.

Running HA SQL Server on Amazon Elastic Container Service for Kubernetes (EKS)

Running HA SQL Server on Google Kubernetes Engine (GKE)

Running HA SQL Server on Azure Kubernetes Service (AKS)

Running HA SQL Server with Rancher Kubernetes Engine (RKE)

Running HA SQL Server on IBM Cloud Private

And now, onto the post…

Red Hat OpenShift is a comprehensive enterprise-grade application platform built for containers powered by Kubernetes. OpenShift lets developers quickly build, develop, and deploy applications on nearly any infrastructure, public or private.

OpenShift comes in four flavors – OpenShift Origin, OpenShift Online, OpenShift Container Platform, and OpenShift Dedicated. OpenShift Origin is the upstream, open source version which can be installed on Fedora, CentOS or Red Hat Enterprise Linux. OpenShift Online is the hosted version of the platform managed by Red Hat. OpenShift Container Platform is the enterprise offering that can be deployed in the public cloud or within an enterprise data center. OpenShift Dedicated is a single-tenant, highly-available cluster running in the public cloud.

For this walk-through, we are using a cluster running OpenShift Origin.

Portworx is a cloud native storage platform to run persistent workloads deployed on a variety of orchestration engines including Kubernetes. With Portworx, customers can manage the database of their choice on any infrastructure using any container scheduler. It provides a single data management layer for all stateful services, no matter where they run.

Portworx is Red Hat certified for Red Hat OpenShift Container Platform and PX-Enterprise is available in the Red Hat Container Catalog. This certification enables enterprises to confidently run high-performance stateful applications like databases, big and fast data workloads, and machine learning applications on the Red Hat OpenShift Container Platform. Learn more about Portworx & OpenShift in our Product Brief.

This tutorial is a walk-through of the steps involved in deploying and managing a highly available Microsoft SQL Server database on Red Hat OpenShift. Portworx is a Microsoft SQL Server high availability and disaster recovery partner and this tutorial will show you how to reliably run this database for mission-critical applications.

In summary, to run HA SQL Server on Red Hat OpenShift you need to:

- Launch a Red Hat OpenShift cluster

- Install a cloud native storage solution like Portworx as a DaemonSet on OKD

- Create a storage class defining your storage requirements like replication factor, snapshot policy, and performance profile

- Deploy SQL Server on OpenShift

- Test failover by killing or cordoning a node in your cluster

- Expand the storage volume without downtime

- Back up and restore SQL Server from a snapshot

While not covered in this tutorial, Portworx also enables the following capabilities for MS SQL Server:

- Bring-your-own key encryption of data volumes for data protection

- Zero RPO failover of MS SQL Server pods between data centers in a metropolitan area (defined by a maximum round-trip latency of 15 milliseconds)

- Near-zero RPO failover of MS SQL Server pods between data centers across the WAN

- Application and data backup and restore across environments with a single Kubernetes command for scheduled and unscheduled maintenance, blue-green deployments or copy data management.

How to install and configure an OpenShift Origin cluster

OpenShift Origin can be deployed in a variety of environments, ranging from VirtualBox to a public cloud IaaS such as Amazon, Google, or Azure. Refer to the official installation guide for the steps involved in setting up your own cluster. For this guide, we run an OpenShift Origin (OKD) cluster in AWS.

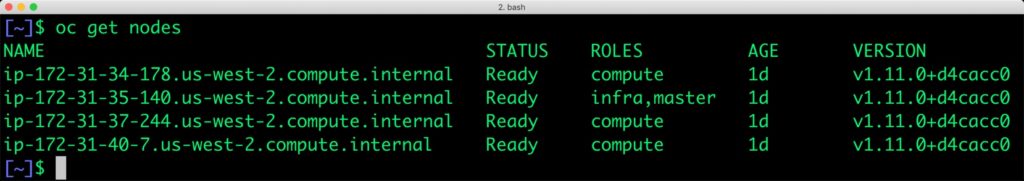

Your OpenShift cluster setup should look similar to the below configuration. It is recommended that you run at least 3 nodes for the HA configuration.

$ oc get nodes
NAME                                          STATUS    ROLES          AGE       VERSION
ip-172-31-34-178.us-west-2.compute.internal   Ready     compute        1d        v1.11.0+d4cacc0
ip-172-31-35-140.us-west-2.compute.internal   Ready     infra,master   1d        v1.11.0+d4cacc0
ip-172-31-37-244.us-west-2.compute.internal   Ready     compute        1d        v1.11.0+d4cacc0
ip-172-31-40-7.us-west-2.compute.internal     Ready     compute        1d        v1.11.0+d4cacc0
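The three-node recommendation can be checked before going further. The following is a minimal sketch, not part of the original walkthrough: the function names `count_ready_nodes` and `require_ready_nodes` are hypothetical helpers, and the sketch assumes the default column layout of `oc get nodes`, where STATUS is the second column. A cordoned node reports `Ready,SchedulingDisabled` and is deliberately not counted.

```shell
# Count nodes whose STATUS column is exactly "Ready".
# Cordoned nodes ("Ready,SchedulingDisabled") and NotReady nodes are excluded.
count_ready_nodes() {
  oc get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Hypothetical guard: fail if fewer than the wanted number of nodes are Ready.
# Argument: minimum number of Ready nodes (default 3).
require_ready_nodes() {
  local want="${1:-3}" have
  have="$(count_ready_nodes)"
  if [ "$have" -lt "$want" ]; then
    echo "only $have Ready node(s); need at least $want" >&2
    return 1
  fi
  echo "$have Ready node(s)"
}
```

Calling `require_ready_nodes 3` before installing Portworx gives an early, explicit failure instead of a volume that cannot reach its replication factor later.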

Though almost all the steps can be performed through the OpenShift Console, we are using the oc CLI. Note that most kubectl commands are also available through the oc tool, so you may see the two tools used interchangeably.

Installing Portworx in OpenShift

Since OpenShift is based on Kubernetes, the steps involved in installing Portworx are not very different from the standard Kubernetes installation. Portworx documentation has a detailed guide with the prerequisites and all the steps to install on OpenShift.

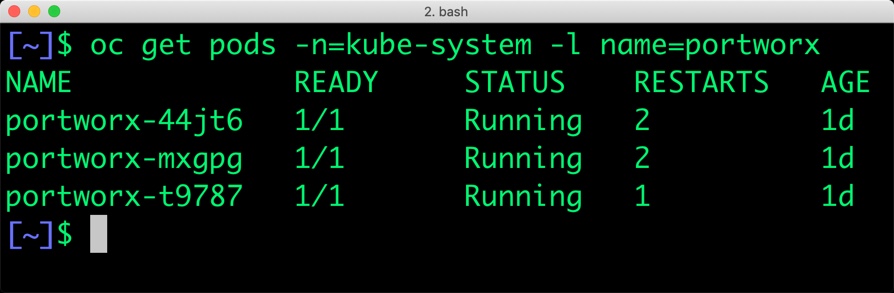

Before proceeding further, ensure that Portworx is up and running on OpenShift.

$ oc get pods -n=kube-system -l name=portworx
NAME             READY     STATUS    RESTARTS   AGE
portworx-44jt6   1/1       Running   2          1d
portworx-mxgpg   1/1       Running   2          1d
portworx-t9787   1/1       Running   1          1d

Creating a storage class for MS SQL Server

Once the OKD cluster is up and running, and Portworx is installed and configured, we will deploy a highly available Microsoft SQL Server stack in Kubernetes.

Through storage class objects, an admin can define different classes of Portworx volumes that are offered in a cluster. These classes will be used during the dynamic provisioning of volumes. The storage class defines the replication factor, I/O profile (e.g., for a database or a CMS), and priority (e.g., SSD or HDD). These parameters impact the availability and throughput of workloads and can be specified for each volume. This is important because a production database will have different requirements than a development Jenkins cluster.

$ cat > px-sql-sc.yaml << EOF
kind: StorageClass
apiVersion: storage.k8s.io/v1beta1
metadata:
  name: px-mssql-sc
provisioner: kubernetes.io/portworx-volume
parameters:
  repl: "3"
  io_profile: "db_remote"
  priority_io: "high"
allowVolumeExpansion: true
EOF

Create the storage class and verify it’s available in the cluster. Storage classes are cluster-scoped, so they are not tied to a namespace.

$ oc create -f px-sql-sc.yaml
storageclass.storage.k8s.io/px-mssql-sc created

$ oc get sc
NAME                PROVISIONER                     AGE
px-mssql-sc         kubernetes.io/portworx-volume   11s
stork-snapshot-sc   stork-snapshot                  1d
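Because different workloads need different classes, it can help to generate the manifest from parameters rather than editing YAML by hand. The sketch below is illustrative only: `render_px_storageclass` is a hypothetical helper, and the `px-dev-sc` class with `repl` of 1 shown in the comments is an assumed example for a development workload, not something defined in this tutorial.

```shell
# Print a Portworx StorageClass manifest to stdout.
# Arguments: class name, replication factor, I/O profile, I/O priority.
render_px_storageclass() {
  local name="$1" repl="$2" io_profile="$3" priority="$4"
  cat <<EOF
kind: StorageClass
apiVersion: storage.k8s.io/v1beta1
metadata:
  name: ${name}
provisioner: kubernetes.io/portworx-volume
parameters:
  repl: "${repl}"
  io_profile: "${io_profile}"
  priority_io: "${priority}"
allowVolumeExpansion: true
EOF
}

# Example use (requires a cluster, so left as comments):
#   render_px_storageclass px-mssql-sc 3 db_remote high | oc create -f -
#   render_px_storageclass px-dev-sc 1 db low | oc create -f -   # hypothetical dev class
```

The first example reproduces the class used in this walkthrough; the second shows how a lower-cost class could be derived from the same template.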

Creating an MS SQL Server PVC

We can now create a Persistent Volume Claim (PVC) based on the storage class. Thanks to dynamic provisioning, the claim will be bound without explicitly provisioning a Persistent Volume (PV).

$ cat > px-sql-pvc.yaml << EOF
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: mssql-data
  annotations:
    volume.beta.kubernetes.io/storage-class: px-mssql-sc
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
EOF

$ oc create -f px-sql-pvc.yaml
persistentvolumeclaim/mssql-data created

Let’s verify the PVC with the following command:

$ oc get pvc
NAME         STATUS    VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
mssql-data   Bound     pvc-31f9ff1e-69a7-11e9-9fb5-06d84be752aa   5Gi        RWO            px-mssql-sc    6s
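In a script, the manual check above can be replaced by polling the claim until it reports the Bound phase. This is a rough sketch under stated assumptions: `wait_for_pvc_bound` is a hypothetical helper, and the default of 30 attempts with a 2-second pause is an arbitrary choice, not a Portworx recommendation.

```shell
# Poll a PVC until its phase is "Bound" or the attempts run out.
# Arguments: PVC name, max attempts (default 30), seconds between attempts (default 2).
wait_for_pvc_bound() {
  local pvc="$1" attempts="${2:-30}" delay="${3:-2}" phase i=0
  while [ "$i" -lt "$attempts" ]; do
    # .status.phase is Pending until a volume has been provisioned and bound.
    phase="$(oc get pvc "$pvc" -o jsonpath='{.status.phase}' 2>/dev/null)"
    if [ "$phase" = "Bound" ]; then
      echo "$pvc is Bound"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "$pvc not Bound after $attempts attempts (last phase: ${phase:-unknown})" >&2
  return 1
}
```

For the claim created here, the call would be `wait_for_pvc_bound mssql-data`, placed between creating the PVC and creating the deployment.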

Deploying MS SQL Server on OpenShift

Finally, let’s create a Microsoft SQL Server instance as a Kubernetes Deployment object. For simplicity’s sake, we will deploy a single SQL Server pod. Because Portworx provides synchronous replication for high availability, a single SQL Server instance might be the best deployment option for your SQL database. Portworx can also provide backing volumes for multi-node SQL Server deployments. The choice is yours.

$ cat > px-sql-db.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mssql
spec:
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  replicas: 1
  selector:
    matchLabels:
      app: mssql
  template:
    metadata:
      labels:
        app: mssql
    spec:
      containers:
      - name: mssql
        image: microsoft/mssql-server-linux:2017-latest
        imagePullPolicy: "IfNotPresent"
        ports:
        - containerPort: 1433
        env:
        - name: ACCEPT_EULA
          value: "Y"
        - name: SA_PASSWORD
          value: "P@ssw0rd"
        volumeMounts:
        - mountPath: /var/opt/mssql
          name: mssqldb
      volumes:
      - name: mssqldb
        persistentVolumeClaim:
          claimName: mssql-data
EOF

$ oc create -f px-sql-db.yaml
deployment.extensions/mssql created

Wait until the SQL Server pod reaches the Running state.

$ oc get pods -l app=mssql -o wide --watch
NAME                     READY     STATUS    RESTARTS   AGE       IP           NODE                                          NOMINATED NODE
mssql-79759f8f66-f2s4g   1/1       Running   0          1m        10.129.0.4   ip-172-31-44-215.us-west-2.compute.internal

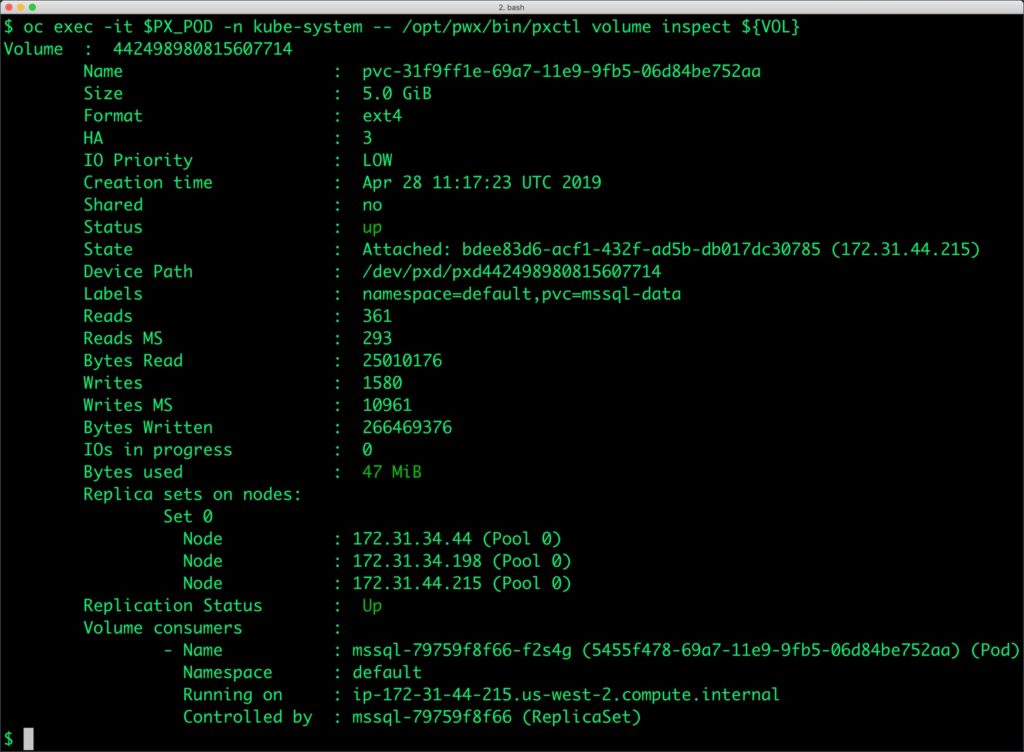

We can inspect the Portworx volume backing the SQL Server pod by running the pxctl tool inside one of the Portworx pods.

$ VOL=`oc get pvc | grep mssql-data | awk '{print $3}'`

$ PX_POD=$(oc get pods -l name=portworx -n kube-system -o jsonpath='{.items[0].metadata.name}')

$ oc exec -it $PX_POD -n kube-system -- /opt/pwx/bin/pxctl volume inspect ${VOL}

The output from the above command confirms the creation of volumes that are backing the SQL Server database instance.

Failing over MS SQL Server on OpenShift

Let’s populate the database with sample data.

Create a SQL file named sample_data.sql with the below statements:

CREATE DATABASE classicmodels;
go

USE classicmodels;
go

CREATE TABLE offices (
  officeCode varchar(10) NOT NULL,
  city varchar(50) NOT NULL,
  phone varchar(50) NOT NULL,
  addressLine1 varchar(50) NOT NULL,
  addressLine2 varchar(50) DEFAULT NULL,
  state varchar(50) DEFAULT NULL,
  country varchar(50) NOT NULL,
  postalCode varchar(15) NOT NULL,
  territory varchar(10) NOT NULL
);
go

insert into offices(officeCode,city,phone,addressLine1,addressLine2,state,country,postalCode,territory) values
('1','San Francisco','+1 650 219 4782','100 Market Street','Suite 300','CA','USA','94080','NA'),
('2','Boston','+1 215 837 0825','1550 Court Place','Suite 102','MA','USA','02107','NA'),
('3','NYC','+1 212 555 3000','523 East 53rd Street','apt. 5A','NY','USA','10022','NA'),
('4','Paris','+33 14 723 4404','43 Rue Jouffroy abbans',NULL,NULL,'France','75017','EMEA'),
('5','Tokyo','+81 33 224 5000','4-1 Kioicho',NULL,'Chiyoda-Ku','Japan','102-8578','Japan'),
('6','Sydney','+61 2 9264 2451','5-11 Wentworth Avenue','Floor #2',NULL,'Australia','NSW 2010','APAC'),
('7','London','+44 20 7877 2041','25 Old Broad Street','Level 7',NULL,'UK','EC2N 1HN','EMEA');
go

We will copy the sample data to the MS SQL pod before loading it through the SQLCMD utility.

$ SQL_POD=$(oc get pods -l app=mssql -o jsonpath='{.items[0].metadata.name}')

$ oc cp sample_data.sql $SQL_POD:/tmp

Let’s load the sample data into SQL Server.

$ oc exec $SQL_POD -- /opt/mssql-tools/bin/sqlcmd -U sa -P P@ssw0rd -i /tmp/sample_data.sql

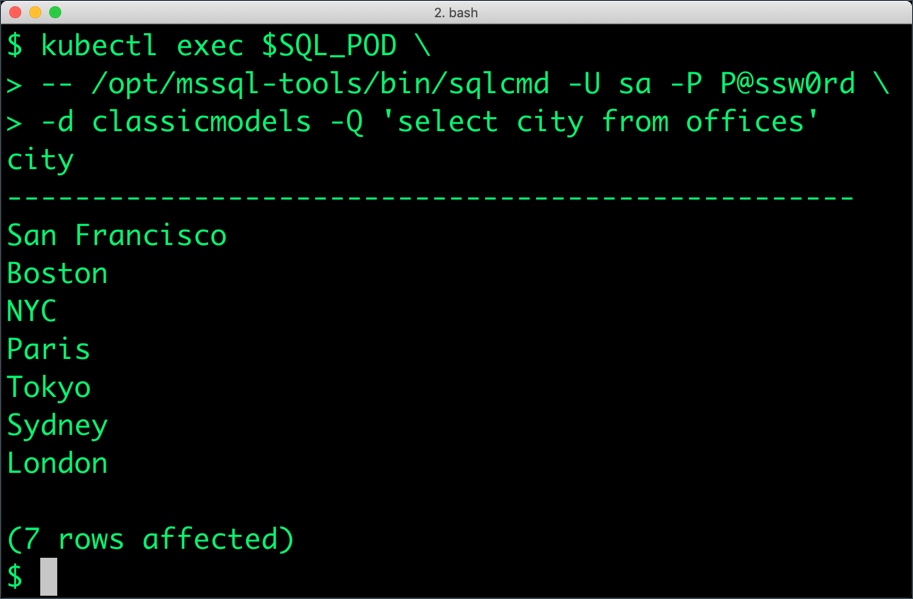

We can query the database by running the below command:

$ oc exec $SQL_POD -- /opt/mssql-tools/bin/sqlcmd -U sa -P P@ssw0rd -d classicmodels -Q 'select * from offices'

The below query shows only the cities from the table.

$ oc exec $SQL_POD \
  -- /opt/mssql-tools/bin/sqlcmd -U sa -P P@ssw0rd \
  -d classicmodels -Q 'select city from offices'

Now, let’s simulate a node failure by cordoning off the node on which SQL Server is running.

$ NODE=`oc get pods -l app=mssql -o wide | grep -v NAME | awk '{print $7}'`

$ oc adm cordon ${NODE}

node/ip-172-31-44-215.us-west-2.compute.internal cordoned

We will now go ahead and delete the SQL Server pod.

$ POD=`oc get pods -l app=mssql -o wide | grep -v NAME | awk '{print $1}'`

$ oc delete pod ${POD}

pod "mssql-79759f8f66-f2s4g" deleted

As soon as the pod is deleted, the Deployment creates a replacement, which is scheduled on a node that holds a replica of the data. STorage ORchestrator for Kubernetes (STORK), Portworx’s custom storage scheduler, makes it possible to co-locate the pod on the exact node where the data is stored. It ensures that an appropriate node is selected for scheduling the pod.

Let’s verify this by running the below command. We will notice that a new pod has been created and scheduled on a different node.

$ oc get pods -l app=mssql
NAME                     READY     STATUS              RESTARTS   AGE
mssql-79759f8f66-d99hg   0/1       ContainerCreating   0          18s

Wait until the pod is ready, then run the select query in it.

$ SQL_POD=$(oc get pods -l app=mssql -o jsonpath='{.items[0].metadata.name}')

$ oc exec $SQL_POD \
  -- /opt/mssql-tools/bin/sqlcmd -U sa -P P@ssw0rd \
  -d classicmodels -Q 'select city from offices'

Observe that the database table is still there and all the content intact!
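The failover test leaves the original node cordoned. The sketch below, which is an addition to the walkthrough rather than part of it, confirms that the pod really landed on a different node and shows where the node could be returned to service. The helper names `pod_node` and `assert_pod_moved` are hypothetical, and the check assumes the replacement pod is already Running, since a terminating pod may still be listed immediately after the delete.

```shell
# Print the node hosting the first pod that matches a label selector.
pod_node() {
  oc get pods -l "$1" -o jsonpath='{.items[0].spec.nodeName}'
}

# Succeed only if the pod now runs on a node other than the one it left.
# Arguments: label selector, name of the node that was cordoned.
assert_pod_moved() {
  local selector="$1" old_node="$2" new_node
  new_node="$(pod_node "$selector")"
  if [ -z "$new_node" ] || [ "$new_node" = "$old_node" ]; then
    echo "pod did not move (node: ${new_node:-none})" >&2
    return 1
  fi
  echo "pod moved from $old_node to $new_node"
}

# Typical use after the failover test, with NODE set as earlier in this section:
#   assert_pod_moved app=mssql "$NODE" && oc adm uncordon "$NODE"
```

Uncordoning only after the check passes keeps the scheduler from placing the replacement pod straight back on the node under test.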

Performing Storage Operations on OpenShift

After testing end-to-end failover of the database, let’s perform StorageOps on our OKD cluster.

Expanding the Volume with no downtime

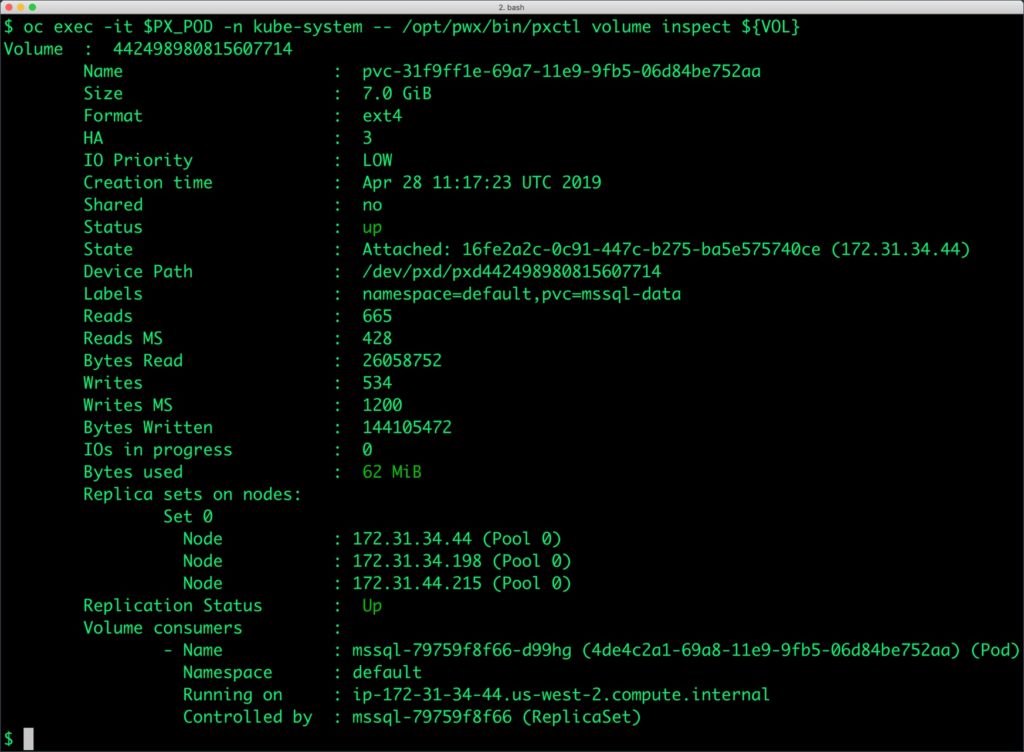

Let’s first get the Portworx volume name backing the SQL Server deployment and inspect it through the pxctl tool.

$ VOL=`oc get pvc | grep mssql-data | awk '{print $3}'`

$ PX_POD=$(oc get pods -l name=portworx -n kube-system -o jsonpath='{.items[0].metadata.name}')

$ oc exec -it $PX_POD -n kube-system -- /opt/pwx/bin/pxctl volume inspect ${VOL}

The current volume size is 5GiB as defined in the PVC specification. Let’s expand it to 7GiB using the following command.

$ oc exec -it $PX_POD -n kube-system -- /opt/pwx/bin/pxctl volume update $VOL --size=7
Update Volume: Volume update successful for volume pvc-31f9ff1e-69a7-11e9-9fb5-06d84be752aa
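One way to confirm that the extra capacity is visible to SQL Server is to read the filesystem size from inside the pod before and after the update. The following is a sketch under assumptions: `mount_size_gib` is a hypothetical helper, and it assumes the `df` utility is present in the SQL Server container image. Filesystem overhead means the reported figure is usually slightly below the nominal volume size.

```shell
# Print the total size, in GiB with one decimal, of the filesystem
# mounted at a given path inside a pod.
# Arguments: pod name, mount path.
mount_size_gib() {
  # df -P -k prints a header line, then one data line whose second
  # column is the total size in 1024-byte blocks.
  oc exec "$1" -- df -P -k "$2" |
    awk 'NR == 2 { printf "%.1f\n", $2 / 1024 / 1024 }'
}

# Example use (requires a cluster, so left as a comment):
#   mount_size_gib "$SQL_POD" /var/opt/mssql
```

Running it once before and once after `pxctl volume update` should show the value grow while the pod keeps running.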

Backing up and restoring a SQL Server instance through snapshots

Portworx supports creating snapshots for Kubernetes PVCs. Since there is only one SQL Server instance, we can use regular, local snapshots to back up and restore.

Let’s create a snapshot for the Kubernetes PVC we created for SQL Server.

$ cat > px-sql-snap.yaml << EOF
apiVersion: volumesnapshot.external-storage.k8s.io/v1
kind: VolumeSnapshot
metadata:
  name: px-sql-snapshot
  namespace: default
spec:
  persistentVolumeClaimName: mssql-data
EOF

$ oc create -f px-sql-snap.yaml
volumesnapshot.volumesnapshot.external-storage.k8s.io/px-sql-snapshot created

Verify the creation of the volume snapshot.

$ oc get volumesnapshot
NAME              AGE
px-sql-snapshot   25s

$ oc get volumesnapshotdatas
NAME                                                       AGE
k8s-volume-snapshot-251628c0-6406-11e9-85ba-0a580a340105   33s

We can now create a new PVC from the snapshot.

$ cat > px-sql-snap-pvc.yaml << EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: px-sql-snap-clone
  annotations:
    snapshot.alpha.kubernetes.io/snapshot: px-sql-snapshot
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: stork-snapshot-sc
  resources:
    requests:
      storage: 5Gi
EOF

$ oc create -f px-sql-snap-pvc.yaml
persistentvolumeclaim/px-sql-snap-clone created

A new SQL Server pod based on the new PVC restored from the snapshot will contain the data from the original volume.

$ cat > px-sql-db-clone.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mssql-snap
spec:
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  replicas: 1
  selector:
    matchLabels:
      app: mssql-snap
  template:
    metadata:
      labels:
        app: mssql-snap
    spec:
      containers:
      - name: mssql
        image: microsoft/mssql-server-linux:2017-latest
        imagePullPolicy: "IfNotPresent"
        ports:
        - containerPort: 1433
        env:
        - name: ACCEPT_EULA
          value: "Y"
        - name: SA_PASSWORD
          value: "P@ssw0rd"
        volumeMounts:
        - mountPath: /var/opt/mssql
          name: mssqldb
      volumes:
      - name: mssqldb
        persistentVolumeClaim:
          claimName: px-sql-snap-clone
EOF

$ oc create -f px-sql-db-clone.yaml
deployment.extensions/mssql-snap created

Querying the new SQL Server pod will show the same data as the original.

$ SQL_POD=$(oc get pods -l app=mssql-snap -o jsonpath='{.items[0].metadata.name}')

$ oc exec $SQL_POD \

> -- /opt/mssql-tools/bin/sqlcmd -U sa -P P@ssw0rd \

> -d classicmodels -Q 'select city from offices'

city

--------------------------------------------------

San Francisco

Boston

NYC

Paris

Tokyo

Sydney

London

(7 rows affected)
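Rather than comparing the two result sets by eye, the same query can be run against both deployments and the outputs compared. This is a minimal sketch, not the author's method: `run_query` and `same_query_result` are hypothetical helpers, and the `SA_PASSWORD` shell variable is an assumption standing in for the password used in this tutorial. The `-h -1` and `-W` options of sqlcmd suppress column headers and trailing spaces so the two outputs can be compared directly.

```shell
# Run a query against a database in the first pod matching a label selector.
# Assumes SA_PASSWORD is set in the calling shell.
run_query() {
  local selector="$1" db="$2" query="$3" pod
  pod="$(oc get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}')"
  # SET NOCOUNT ON drops the "(N rows affected)" trailer from the output.
  oc exec "$pod" -- /opt/mssql-tools/bin/sqlcmd -U sa -P "$SA_PASSWORD" \
    -d "$db" -h -1 -W -Q "SET NOCOUNT ON; $query"
}

# Succeed if the query returns identical output from the original
# deployment (app=mssql) and the snapshot clone (app=mssql-snap).
# Arguments: database name, query text.
same_query_result() {
  local db="$1" query="$2" a b
  a="$(run_query app=mssql "$db" "$query")" || return 1
  b="$(run_query app=mssql-snap "$db" "$query")" || return 1
  [ "$a" = "$b" ]
}

# Example use (requires a cluster, so left as a comment):
#   same_query_result classicmodels 'select city from offices order by officeCode'
```

Adding an `order by` clause to the query keeps the comparison from failing on row order alone.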

Summary

Portworx can easily be deployed on Red Hat OpenShift to run stateful workloads in production. It integrates with Kubernetes and OpenShift workloads such as Deployments and StatefulSets by providing dynamic provisioning. Additional operations, such as expanding volumes and taking snapshots for backup and restore, can be performed while managing production workloads.


Janakiram MSV

Contributor | Certified Kubernetes Administrator (CKA) and Developer (CKAD)