Kubernetes vs Docker: What’s the Difference?

Containerization has transformed how organizations build, package, and deploy applications across heterogeneous environments. Docker and Kubernetes are two of the most widely used technologies in the container and cloud-native ecosystem. Docker provides a container runtime and image-management tooling for individual hosts, while Kubernetes provides orchestration for complex, distributed systems. Both are used in production: Docker is suited to lightweight, single-host container deployments and Kubernetes to managing large clusters.

In this article, we will learn about Docker and Kubernetes, covering their core architectural differences, performance and scalability considerations, and deployment approaches. We will also look at storage, security, and operational management, along with the benefits and use cases of each.

Understanding Docker

What is Docker?

Docker is an open-source containerization platform for quickly developing, deploying, and managing applications. It packages software into standardized containers: lightweight, standalone, executable packages that include everything the application needs to run, including code, runtime, system tools, and libraries. Because the same container behaves consistently in every environment, Docker significantly reduces environment-specific issues and minimizes the ‘it works on my machine’ problem when moving from development to production.

Key Components of Docker

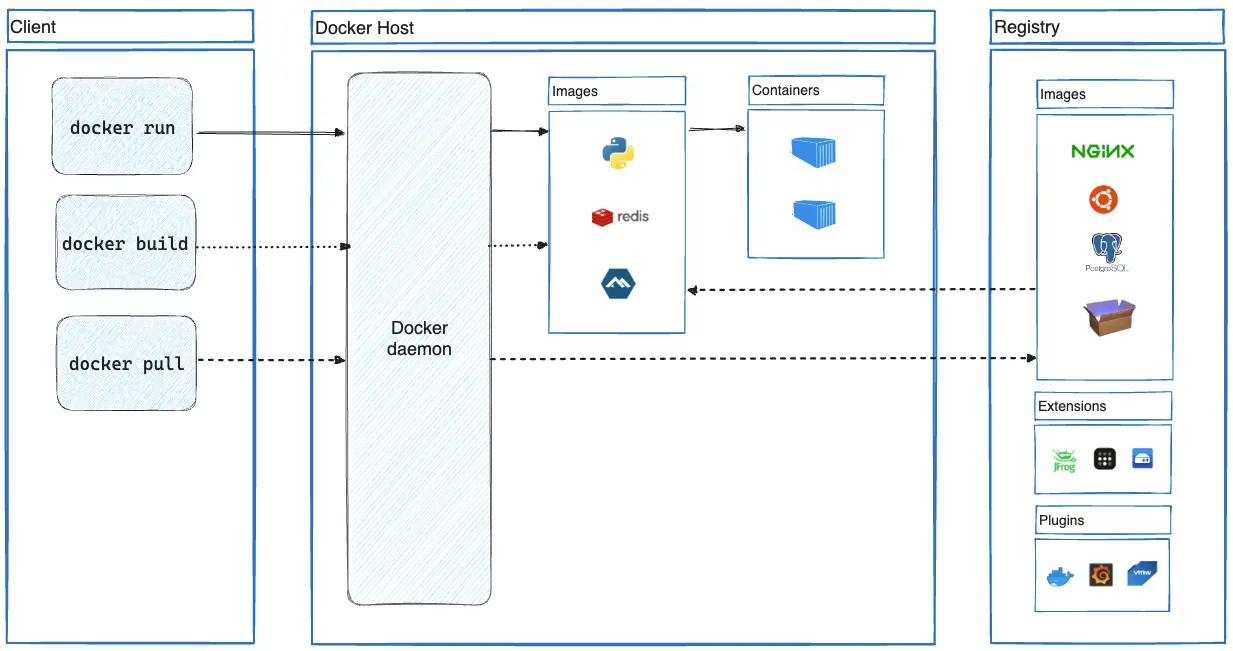

Docker uses a client-server architecture with several components interacting with the daemon to create, run, and distribute the containers. The Docker system consists mainly of the following components that help streamline container management:

- Docker Engine: The core runtime, which consists of the following:

  - Docker Daemon (dockerd): The background process responsible for managing containers, images, networks, and volumes.

  - Docker CLI: A command-line interface for communicating with the Docker Daemon.

  - REST API: The programmatic interface to the Docker Daemon.

- Docker Client: The main interface through which users interact with Docker; the Docker CLI is the most common client. It communicates with the Docker Daemon through the REST API over a UNIX socket or a network interface.

- Docker Images: Immutable, read-only templates that package an application and its dependencies, built from a Dockerfile containing build instructions.

- Docker Registry: Repository for storing and distributing images. Docker Hub is the default public registry, though private registries are common in enterprise setups.

- Docker Containers: Runtime instances of images that execute applications in isolated environments.

- Docker Compose: YAML-based configuration tool for defining and orchestrating multi-container applications.

Docker components. Image courtesy: https://docs.docker.com/get-started/docker-overview/

Advantages of Using Docker

Docker containers offer several benefits that make container management efficient:

- Portability: A container consists of the application and all its dependencies, enabling seamless movement between environments and cloud providers.

- Version Control and Rollbacks: Docker images can be versioned with tags. By tagging and managing different versions of an image, you can revert to a previously working version if a new release causes issues.

- Scalability: With Docker Swarm, you can scale a service horizontally by adding or removing replicas as demand changes, for example with the docker service scale command.

- Faster Deployment: Containers share the host kernel and do not boot a full guest operating system, so they typically start much faster than virtual machines, accelerating development cycles and deployment pipelines.
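Tag-based rollbacks work because registries store image content by digest, while tags are movable pointers to those digests. The toy registry below is a hypothetical and heavily simplified model (real digests are computed over image manifests, not a single blob, and the `myapp` image name is made up); it shows that pushing a new release moves a tag but leaves earlier versions retrievable by their own tag or digest.

```python
import hashlib


class ToyRegistry:
    """Hypothetical in-memory registry: content is stored by digest, tags point at digests."""

    def __init__(self) -> None:
        self.blobs: dict[str, bytes] = {}  # digest -> image content (never modified)
        self.tags: dict[str, str] = {}     # "name:tag" -> digest (can be moved)

    def push(self, tag: str, content: bytes) -> str:
        digest = "sha256:" + hashlib.sha256(content).hexdigest()
        self.blobs[digest] = content
        self.tags[tag] = digest  # re-pushing an existing tag simply repoints it
        return digest

    def pull(self, ref: str) -> bytes:
        """Resolve a tag such as 'myapp:2.0' or a pinned 'sha256:...' digest."""
        digest = ref if ref.startswith("sha256:") else self.tags[ref]
        return self.blobs[digest]


registry = ToyRegistry()
v1 = registry.push("myapp:1.0", b"release 1")
registry.push("myapp:latest", b"release 1")
registry.push("myapp:2.0", b"release 2")
registry.push("myapp:latest", b"release 2")  # 'latest' now points at release 2

print(registry.pull("myapp:latest"))  # b'release 2'
print(registry.pull("myapp:1.0"))     # b'release 1' (still available for a rollback)
print(registry.pull(v1) == registry.pull("myapp:1.0"))  # True
```

Rolling back therefore means redeploying the earlier tag (or, more strictly, its digest), rather than rebuilding anything.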

Common Use Cases for Docker

Docker has become popular across many industries because of its versatility and flexibility. Below are some common use cases where Docker helps teams streamline their workflows and improve efficiency:

- Standardized Development Environments: Docker enables teams to package complex development tools (compilers, debuggers, CLI utilities) as containerized images with standardized configurations. This eliminates version conflicts between tools, simplifies environment setup with single commands, and ensures all developers use identical tooling regardless of their local system.

- Microservices Architecture: Docker plays a key role in developing and deploying microservices-based applications. Each microservice runs in its own isolated container, making it simpler to manage, update, and scale independently.

- CI/CD Pipelines: Docker integrates seamlessly into CI/CD workflows, enabling automated and streamlined software delivery. By containerizing application components, you can build, test, and deploy them consistently across different environments.

- DevOps and Infrastructure as Code (IaC): Docker containers integrate with automation tools, enabling infrastructure definition as code for consistent, repeatable environment provisioning.

Understanding Kubernetes

What is Kubernetes?

Kubernetes is an open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications. It abstracts away infrastructure complexity, allowing developers to focus on application logic while ensuring workloads run reliably across distributed environments.

Kubernetes excels at managing microservices architectures through declarative configuration, handling the complexities of container scheduling, networking, and lifecycle management through its control plane components.

Core Components of Kubernetes

Kubernetes follows a control plane and worker node architecture consisting of key components that work together to manage and orchestrate containerized workloads. Its core components include:

- Control Plane Components:

  - API Server: Centralized communication hub exposing RESTful API endpoints for cluster interaction.

  - etcd: A distributed key-value store that maintains the cluster state and configuration.

  - Scheduler: Assigns pods to worker nodes based on resource requirements and constraints.

  - Controller Manager: Runs the built-in controllers, such as the Node, ReplicaSet, Endpoints, Job, and ServiceAccount controllers, that keep the cluster in its desired state.

- Worker Node Components:

  - Kubelet: The agent on each node that ensures the containers described in pod specs are running and healthy.

  - Kube-proxy: Implements network rules for pod communication and service exposure.

  - Container Runtime: Software responsible for running containers, such as containerd or CRI-O. Docker Engine was previously supported directly through dockershim, which was removed in Kubernetes 1.24.

Kubernetes components. Image source: https://kubernetes.io/docs/concepts/overview/components/
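To make the scheduler's role more concrete, here is a minimal Python sketch of the two phases the Kubernetes documentation calls filtering and scoring. It assumes that only CPU (in millicores) and memory (in MiB) requests matter, and it scores nodes by how much capacity would remain free, loosely modeled on a "least allocated" strategy. The `Node` and `PodRequest` types, the node names, and the `fits`, `least_allocated_score`, and `schedule` functions are hypothetical and are not the kube-scheduler API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    allocatable_cpu_m: int     # allocatable CPU in millicores
    allocatable_mem_mi: int    # allocatable memory in MiB
    requested_cpu_m: int = 0   # sum of requests of pods already on the node
    requested_mem_mi: int = 0


@dataclass
class PodRequest:
    cpu_m: int
    mem_mi: int


def fits(node: Node, pod: PodRequest) -> bool:
    """Filtering phase: keep only nodes with enough unrequested capacity."""
    return (node.requested_cpu_m + pod.cpu_m <= node.allocatable_cpu_m
            and node.requested_mem_mi + pod.mem_mi <= node.allocatable_mem_mi)


def least_allocated_score(node: Node, pod: PodRequest) -> float:
    """Scoring phase: 0-100, higher when more capacity stays free after placement."""
    cpu_free = (node.allocatable_cpu_m - node.requested_cpu_m - pod.cpu_m) / node.allocatable_cpu_m
    mem_free = (node.allocatable_mem_mi - node.requested_mem_mi - pod.mem_mi) / node.allocatable_mem_mi
    return 100 * (cpu_free + mem_free) / 2


def schedule(pod: PodRequest, nodes: list[Node]) -> Optional[str]:
    """Return the best node's name, or None if the pod would stay Pending."""
    feasible = [n for n in nodes if fits(n, pod)]
    if not feasible:
        return None
    return max(feasible, key=lambda n: least_allocated_score(n, pod)).name


nodes = [
    Node("node-a", 4000, 8192, requested_cpu_m=3500, requested_mem_mi=2048),
    Node("node-b", 4000, 8192, requested_cpu_m=1000, requested_mem_mi=4096),
    Node("node-c", 2000, 4096),
]
print(schedule(PodRequest(cpu_m=500, mem_mi=1024), nodes))  # node-c
```

The real scheduler runs many more filter and score plugins (taints and tolerations, affinity, topology spread) and combines weighted scores, but the filter-then-score shape is the same.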

Key Kubernetes Objects

Apart from the control plane and worker node components, Kubernetes organizes workloads through several abstraction layers:

- Pods: The smallest deployable unit containing one or more containers sharing network and storage context.

- Nodes: An individual machine in a Kubernetes cluster that runs container workloads.

- Clusters: A group of nodes managed by Kubernetes that work together to run containerized applications.

- Services: Networking abstraction providing consistent access to pods through:

  - ClusterIP: Internal-only access

  - NodePort: External access via node IP and port

  - LoadBalancer: External access through cloud provider load balancers

- Deployments: Declarative updates for Pods and ReplicaSets, enabling automated rollouts and rollbacks.

- Persistent Volumes: Storage resources abstracted from the underlying infrastructure. Refer to the Kubernetes storage guide to understand how storage works in Kubernetes.

- ConfigMaps: Objects that store non-confidential configuration data as key-value pairs, separate from the application code. Sensitive data such as passwords and tokens belongs in Secrets instead.

- Namespaces: Virtual cluster partitions enabling multi-tenancy and resource isolation.
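Services and Deployments find their pods through label selectors rather than by pod name. The sketch below assumes simple equality-based selectors and a hypothetical dictionary mapping pod names to labels, and shows which pods a Service with the selector `app=web, tier=frontend` would route to. `matches` and `select` are illustrative helpers, not Kubernetes APIs.

```python
def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """Equality-based selector: every key/value pair in the selector must be in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def select(selector: dict[str, str], pods: dict[str, dict[str, str]]) -> list[str]:
    """Return the names of pods whose labels satisfy the selector."""
    return [name for name, labels in pods.items() if matches(selector, labels)]


# Hypothetical pods and their labels.
pods = {
    "web-stable-1": {"app": "web", "tier": "frontend", "track": "stable"},
    "web-canary-1": {"app": "web", "tier": "frontend", "track": "canary"},
    "db-0": {"app": "db", "tier": "backend"},
}

# Extra labels such as "track" do not prevent a match, so both web pods are selected.
print(select({"app": "web", "tier": "frontend"}, pods))  # ['web-stable-1', 'web-canary-1']
print(select({"app": "web", "track": "canary"}, pods))   # ['web-canary-1']
```

The real API also supports set-based requirements (`In`, `NotIn`, `Exists`, `DoesNotExist`), which this sketch omits.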

Advantages of Using Kubernetes

Kubernetes provides significant benefits when managing containers at scale.

- High Availability: Kubernetes keeps applications available during node crashes, pod failures, or network issues through higher-level controllers such as Deployments and ReplicaSets. Combined with health checks (liveness and readiness probes), these controllers replace failed pods with new ones that the scheduler places on healthy nodes, providing automated recovery.

- Hybrid and Multi-Cloud Support: Like Docker, Kubernetes enables application deployment across multiple platforms, such as on-premises, private, and public cloud environments, through standardized APIs.

- Scalability: The Horizontal Pod Autoscaler (HPA) and the Cluster Autoscaler adjust resources based on demand. The HPA scales the number of pod replicas based on CPU utilization, memory usage, or custom metrics, while the Cluster Autoscaler works with the cloud provider to add nodes when pods are pending due to insufficient cluster capacity and to remove nodes that are no longer needed.

- Security: Multi-layered security through role-based access control (RBAC), network policies, Pod Security Standards, and secrets management. RBAC enforces fine-grained access control through roles and bindings, while network policies provide traffic filtering between pods.
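The HPA's core calculation is documented as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no scaling when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below implements only that ratio and clamps the result to the HPA's minReplicas and maxReplicas bounds. It assumes a single metric and ignores stabilization windows, pod readiness, and missing metrics, which the real controller also accounts for; the function name and its default bounds are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Core HPA ratio: ceil(current * currentMetric / targetMetric), clamped to the bounds."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to the target: no scaling action
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 replicas averaging 90% CPU against a 60% target: ceil(4 * 1.5) = 6
print(desired_replicas(4, current_metric=90, target_metric=60))   # 6
# 63% is only 5% above the target, inside the default tolerance
print(desired_replicas(4, current_metric=63, target_metric=60))   # 4
# Scaling down: ceil(10 * 20 / 60) = ceil(3.33...) = 4
print(desired_replicas(10, current_metric=20, target_metric=60))  # 4
```

Rounding up errs on the side of capacity, and the tolerance band keeps small metric fluctuations from causing constant replica churn.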

Common Use Cases for Kubernetes

As Kubernetes adoption grows, so do the variety and complexity of the workloads it manages. Below are a few common use cases:

- Multi-tenancy: Kubernetes allows for secured resource sharing through namespaces, network policies, and resource quotas for basic tenant isolation in enterprise environments.

- Data-Intensive Applications & AI Workloads: Kubernetes runs stateful applications through StatefulSets and persistent storage. Its self-healing capabilities and GPU resource management make it well suited to AI/ML workloads, helping teams share GPUs efficiently across jobs and automatically restart failed pods in long-running training jobs (resuming progress still depends on application-level checkpointing).

- CI/CD for microservices: Kubernetes supports automated deployments using CI/CD and GitOps tools. To minimize downtime, teams can deploy microservices using canary and blue-green deployments on Kubernetes.

- Edge Computing Deployments: Specialized Kubernetes distributions can be used to enable container orchestration on resource-constrained edge devices. Organizations deploy lightweight Kubernetes at the edge for IoT data processing, content delivery, and latency-sensitive applications with centralized management.
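Without a service mesh or an ingress controller that supports weighted routing, a basic canary on Kubernetes runs a second Deployment whose pods carry the labels selected by the existing Service, so traffic splits roughly in proportion to replica counts. The sketch below, with hypothetical helper names, computes such a split for a fixed replica budget; the resulting proportion is only approximate because kube-proxy does not guarantee an exact per-request distribution.

```python
import math


def canary_split(total_replicas: int, canary_percent: float) -> tuple[int, int]:
    """Split a replica budget into (stable, canary) counts for two Deployments.

    The canary count is rounded up so even a small percentage gets one pod,
    and at least one stable replica is always kept.
    """
    if total_replicas < 2:
        raise ValueError("need at least 2 replicas to run stable and canary side by side")
    canary = math.ceil(total_replicas * canary_percent / 100)
    canary = min(max(canary, 1), total_replicas - 1)
    return total_replicas - canary, canary


def approx_traffic_percent(stable: int, canary: int) -> float:
    """With one Service selecting both Deployments, traffic roughly follows replica counts."""
    return 100 * canary / (stable + canary)


for percent in (5, 10, 25):
    stable, canary = canary_split(10, percent)
    print(percent, stable, canary, approx_traffic_percent(stable, canary))
# 5 9 1 10.0
# 10 9 1 10.0
# 25 7 3 30.0
```

Note how a 5% target still yields about 10% of traffic with ten replicas; finer-grained splits need more replicas or weighted routing.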

Comparing Kubernetes and Docker

Key Differences between Kubernetes and Docker

Now that we have covered the architecture and components of Docker and Kubernetes, here is how they compare:

| Feature | Kubernetes | Docker |
| --- | --- | --- |
| Orchestration | Advanced orchestration with auto-scaling, rollbacks, and self-healing. | Basic container lifecycle management, with versioning and rollbacks through image tags. |
| Complexity | Multi-component setup (API server, etcd, CNI plugins, etc.). Managed services make this easier by abstracting the infrastructure. | Simple installation and configuration, focused on the container runtime rather than orchestration. |
| Resource Management | Granular controls through requests, limits, quotas, and QoS classes. | Basic resource constraints through docker run flags. |
| Scalability | Autoscaling with HPA, VPA, and Cluster Autoscaler. | Manual scaling of Swarm services via docker service scale. |
| Flexibility | Ideal for multi-node, multi-cloud, and distributed workloads. | Best for single-host deployments or lightweight clusters. |
| Networking | Uses CNI plugins for networking and DNS-based service discovery. | Basic container networking with bridge, host, and overlay network drivers. |
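The QoS classes mentioned in the resource-management row are derived from each container's CPU and memory requests and limits. This Python sketch follows the documented rules (Guaranteed when every container has CPU and memory limits with requests equal to them, BestEffort when no container sets any CPU or memory request or limit, Burstable otherwise) and models the defaulting of a missing request to its limit. The dictionary input is a hypothetical simplification of a pod spec, and quantities are compared as plain strings, so `"1"` and `"1000m"` would be treated as different even though Kubernetes considers them equal.

```python
def qos_class(containers: list[dict]) -> str:
    """Return 'Guaranteed', 'Burstable', or 'BestEffort' for a pod (simplified model).

    Each container is a dict such as
    {"requests": {"cpu": "250m"}, "limits": {"cpu": "500m", "memory": "256Mi"}}.
    """
    any_cpu_or_memory = False
    guaranteed = True
    for container in containers:
        limits = container.get("limits", {})
        requests = dict(container.get("requests", {}))
        # A limit without a matching request defaults the request to the limit.
        for resource, value in limits.items():
            requests.setdefault(resource, value)
        for resource in ("cpu", "memory"):
            if resource in requests or resource in limits:
                any_cpu_or_memory = True
            if resource not in limits or requests.get(resource) != limits[resource]:
                guaranteed = False
    if not any_cpu_or_memory:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


print(qos_class([{"limits": {"cpu": "500m", "memory": "256Mi"}}]))            # Guaranteed
print(qos_class([{"requests": {"cpu": "250m"}, "limits": {"cpu": "500m"}}]))  # Burstable
print(qos_class([{}]))                                                         # BestEffort
```

The class matters under node memory pressure: BestEffort pods are the first candidates for eviction and Guaranteed pods the last.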

Similarities

Although Kubernetes and Docker have different architectures, they share several capabilities:

| Feature | Kubernetes | Docker |
| --- | --- | --- |
| Container Support | Uses CRI-compatible runtimes such as containerd or CRI-O to manage containers within Pods. | Uses Docker Engine for container lifecycle management. |
| Storage Management | Persistent Volumes, StorageClasses, and CSI drivers. | Volumes (with volume plugins), bind mounts, and tmpfs mounts. |
| Declarative Configuration | Defines resources in YAML, with extensibility through CRDs. | Docker Compose YAML for local multi-container orchestration. |
| Microservices Support | Advanced orchestration for microservices, with scaling and service discovery through Services. | Container networking, DNS resolution, and overlay networks for service connectivity. |
| CI/CD Integration | Integrates with CI/CD tools for automated deployments. | Supports CI/CD for building and testing container images. |

Use Case Scenarios: When to Use Kubernetes vs Docker

Docker provides a container runtime and tooling, while Kubernetes provides orchestration, so the two serve different operational needs. Docker is well suited to scenarios that demand quick setup and consistent environments, such as developers or small teams packaging applications for testing and deployment without the overhead of complex orchestration.

Docker is a good choice for lightweight deployments, local development, and CI/CD pipelines. Docker Swarm provides basic multi-node clustering for simpler production workloads.

Kubernetes is the go-to solution for complex, large-scale containerized applications that require sophisticated orchestration. Through its control plane, it excels at managing microservices architectures, offering declarative configuration, support for stateful applications, persistent storage orchestration, and advanced network policies.

Enterprises rely on Kubernetes for multi-tenant environments, resource-efficient scaling, high availability configurations, and seamless multi-cloud deployments. Kubernetes handles the complex infrastructure concerns, letting developers focus on application development rather than infrastructure management.

Overview of Kubernetes and Docker Deployment Models

Kubernetes is a distributed orchestration system whose control plane consists of several core components: the API server, scheduler, controller manager, and etcd. Deployments are defined declaratively as YAML manifests describing the desired state, and the control plane continuously drives the cluster toward that state through reconciliation loops. This lets Kubernetes run distributed workloads across many nodes with built-in failover.
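A reconciliation loop repeatedly compares the desired state with the observed state and acts to close the gap. The toy Python sketch below mimics a ReplicaSet-style controller against a hypothetical in-memory `ToyCluster`; real controllers react to watch events from the API server, whose state is stored in etcd, none of which this sketch models.

```python
import itertools
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for the pods actually running in a cluster."""
    running_pods: set[str] = field(default_factory=set)
    _next_id: itertools.count = field(default_factory=itertools.count)

    def create_pod(self, prefix: str) -> None:
        self.running_pods.add(f"{prefix}-{next(self._next_id)}")

    def delete_pod(self, name: str) -> None:
        self.running_pods.discard(name)


def reconcile(cluster: ToyCluster, desired_replicas: int, prefix: str) -> list[str]:
    """One pass of a ReplicaSet-style loop: compare desired vs observed, act on the difference."""
    observed = sorted(cluster.running_pods)
    actions: list[str] = []
    if len(observed) < desired_replicas:
        for _ in range(desired_replicas - len(observed)):
            cluster.create_pod(prefix)
            actions.append("create")
    else:
        for name in observed[desired_replicas:]:
            cluster.delete_pod(name)
            actions.append(f"delete {name}")
    return actions


cluster = ToyCluster()
print(reconcile(cluster, 3, "web"))  # ['create', 'create', 'create']
cluster.delete_pod("web-1")          # simulate losing a pod to a node failure
print(reconcile(cluster, 3, "web"))  # ['create']
print(reconcile(cluster, 3, "web"))  # [] (already converged)
print(sorted(cluster.running_pods))  # ['web-0', 'web-2', 'web-3']
```

Because each pass only looks at the current gap, the same loop handles failures, manual deletions, and changes to the desired replica count.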

Docker, on the other hand, provides a simpler deployment model focused on individual containers. Containers run in the Docker Engine on a single host and are managed with the Docker CLI, or with Docker Compose for multi-container applications. Container configurations are defined in Dockerfiles and Compose files, while the runtime is handled by the dockerd daemon, which delegates container execution to containerd and an OCI runtime such as runc.

Comparing the two deployment models, Docker excels in developer environments thanks to its simpler implementation, while Kubernetes delivers the enterprise-grade orchestration that production-scale deployments require.

Performance and Scalability

Production environments need careful tuning and planning to stay reliable under changing loads. In this section, we look at various considerations that highlight how Docker and Kubernetes address the challenges using different approaches.

Docker Performance Considerations:

- Resource control: Docker relies on Linux cgroups and exposes basic CPU and memory limits through docker run flags such as --cpus and --memory, while Kubernetes builds on the same mechanisms with higher-level abstractions such as QoS classes and resource quotas for more advanced workload management. Because Docker’s controls are comparatively basic, noisy-neighbor effects need careful monitoring, especially when containers compete for I/O on shared infrastructure.

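- Storage driver overhead: OverlayFS (the overlay2 driver) is the preferred storage driver for Docker because it has lower overhead than the older, now-deprecated devicemapper driver. Depending on the I/O workload, performance can still vary by around 5-10%, so write-heavy workloads benefit from a tuned storage configuration (for example, a backing filesystem with enough inodes and regular pruning of unused images and layers) or from Docker volumes, which bypass the storage driver.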
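As an illustration of how these command-line flags map onto cgroups, the Docker documentation states that `--cpus="1.5"` is equivalent to `--cpu-period="100000" --cpu-quota="150000"`. The sketch below computes that mapping and converts a `--memory` value such as `512m` into bytes, printing them in the format of the cgroup v2 `cpu.max` and `memory.max` files. The helper names are hypothetical, only integer memory amounts are handled, and the code merely calculates values without touching any cgroup.

```python
_MEMORY_UNITS = {"b": 1, "k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}


def cpus_to_cfs(cpus: float, period_us: int = 100_000) -> tuple[int, int]:
    """Map a docker run --cpus value to a CFS (quota, period) pair in microseconds."""
    if cpus <= 0:
        raise ValueError("cpus must be positive")
    return round(cpus * period_us), period_us


def memory_to_bytes(value: str) -> int:
    """Map a docker run --memory value such as '512m' or '2g' to bytes (integer amounts only)."""
    value = value.strip().lower()
    if value and value[-1] in _MEMORY_UNITS:
        return int(value[:-1]) * _MEMORY_UNITS[value[-1]]
    return int(value)  # no suffix means bytes


quota, period = cpus_to_cfs(1.5)
print(f"cpu.max: {quota} {period}")              # cpu.max: 150000 100000
print(f"memory.max: {memory_to_bytes('512m')}")  # memory.max: 536870912
```

A quota of 150000 µs per 100000 µs period lets the container use up to one and a half CPUs' worth of time, spread across however many cores it runs on.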

Kubernetes Performance Considerations:

- Control plane bottlenecks: In very large clusters, API server and etcd performance can degrade. Techniques such as splitting etcd storage by resource (for example, keeping Events in a separate etcd cluster), request throttling with API Priority and Fairness, and SSD-backed etcd storage help keep latency acceptable.

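- Pod scheduling efficiency: At that scale, scheduling itself becomes CPU-intensive. Scheduler extensions built on the scheduling framework, such as topology-aware scheduling and pod topology spread constraints, help place pods more deliberately: the former accounts for node hardware topology such as NUMA, and the latter spreads replicas evenly across zones or nodes.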
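Pod topology spread constraints are defined in terms of skew: the number of matching pods in a topology domain minus the minimum across eligible domains. Assuming `whenUnsatisfiable: DoNotSchedule` and a hypothetical count of matching pods per zone, this sketch lists the zones where one more pod would keep the skew within `maxSkew`. It ignores node affinity, `minDomains`, and other fields the real scheduler plugin considers, and the zone names are made up.

```python
def allowed_zones(matching_pods_per_zone: dict[str, int], max_skew: int = 1) -> list[str]:
    """Zones where adding one matching pod keeps skew <= max_skew (DoNotSchedule semantics)."""
    global_min = min(matching_pods_per_zone.values())
    return [zone for zone, count in matching_pods_per_zone.items()
            if (count + 1) - global_min <= max_skew]


counts = {"zone-a": 2, "zone-b": 1, "zone-c": 1}
print(allowed_zones(counts))              # ['zone-b', 'zone-c']
print(allowed_zones(counts, max_skew=2))  # ['zone-a', 'zone-b', 'zone-c']
```

With `maxSkew: 1`, placing a third pod in zone-a would give a skew of 3 - 1 = 2, so that zone is filtered out until the others catch up.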

Scalability Opportunities in Kubernetes

- Horizontal Pod Autoscaler (HPA): You can use custom metrics adapters, such as Prometheus Adapter, to scale on application-specific indicators beyond CPU and memory.

- Node auto-provisioning: The Cluster Autoscaler, working with multiple node groups, dynamically scales infrastructure based on pod resource needs. PodDisruptionBudgets (PDBs) help maintain application SLOs during scale-down by limiting how many replicas can be voluntarily evicted at once.
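A PodDisruptionBudget lets an eviction proceed only while enough healthy pods remain. The sketch below mirrors the PDB status fields (expectedPods, currentHealthy, desiredHealthy, disruptionsAllowed) and assumes integer `minAvailable` or `maxUnavailable` values; percentage values and unhealthy-pod eviction policies are not modeled, and the function name is hypothetical.

```python
from typing import Optional


def disruptions_allowed(current_healthy: int, expected_pods: int,
                        min_available: Optional[int] = None,
                        max_unavailable: Optional[int] = None) -> int:
    """Voluntary evictions a PDB would currently permit (integer minAvailable/maxUnavailable only)."""
    if (min_available is None) == (max_unavailable is None):
        raise ValueError("set exactly one of min_available or max_unavailable")
    if min_available is not None:
        desired_healthy = min_available
    else:
        desired_healthy = expected_pods - max_unavailable
    return max(0, current_healthy - desired_healthy)


# 5 healthy replicas with minAvailable: 4 -> one pod may be evicted
print(disruptions_allowed(current_healthy=5, expected_pods=5, min_available=4))    # 1
# One replica is already down with maxUnavailable: 1 -> no further evictions for now
print(disruptions_allowed(current_healthy=4, expected_pods=5, max_unavailable=1))  # 0
```

When the Cluster Autoscaler drains a node, evictions are refused while this value is 0, so the drain waits until replacement pods become healthy elsewhere.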

Security and Management in Kubernetes vs Docker

Kubernetes implements robust security with RBAC, network policies, admission controllers, and Pod Security Standards. For secrets management, it can integrate with external secret stores such as HashiCorp Vault and with KMS providers such as AWS KMS for encrypting Secrets at rest, while service mesh implementations add mTLS communication between workloads. Admission controllers, including policy engines such as Open Policy Agent (OPA) Gatekeeper, can validate each request against organizational policies before it is persisted.
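RBAC permissions are purely additive: a request is allowed if any rule in the Roles bound to the user matches its API group, resource, and verb, and there are no deny rules. The sketch below models only that matching step, using the `pod-reader` Role from the Kubernetes documentation as sample data. Subjects, bindings, namespaces, `resourceNames`, and subresources are left out, and the `PolicyRule` and `is_allowed` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyRule:
    """One rule of a Role or ClusterRole (simplified: no resourceNames or subresources)."""
    api_groups: tuple[str, ...]
    resources: tuple[str, ...]
    verbs: tuple[str, ...]

    def allows(self, api_group: str, resource: str, verb: str) -> bool:
        def match(allowed: tuple[str, ...], value: str) -> bool:
            return "*" in allowed or value in allowed
        return (match(self.api_groups, api_group)
                and match(self.resources, resource)
                and match(self.verbs, verb))


def is_allowed(rules: list[PolicyRule], api_group: str, resource: str, verb: str) -> bool:
    """RBAC is additive: allow if any bound rule matches; there are no deny rules."""
    return any(rule.allows(api_group, resource, verb) for rule in rules)


# The "pod-reader" example: read-only access to pods in the core ("") API group.
pod_reader = [PolicyRule(api_groups=("",), resources=("pods",), verbs=("get", "watch", "list"))]
print(is_allowed(pod_reader, "", "pods", "list"))            # True
print(is_allowed(pod_reader, "", "pods", "delete"))          # False
print(is_allowed(pod_reader, "apps", "deployments", "get"))  # False
```

Because rules only ever grant, least privilege comes from binding narrow Roles rather than from subtracting permissions.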

Docker’s security model relies on Linux namespaces, capabilities, seccomp profiles, and SELinux or AppArmor for container isolation. By default, the Docker daemon runs with root privileges, so its socket must be tightly protected to avoid becoming an attack vector; rootless mode reduces this risk. Image scanning and careful use of volume mounts provide basic protection, but Docker lacks centralized policy enforcement.

Kubernetes supports GitOps workflows through tools such as Argo CD or Flux, which apply declarative configuration from Git and detect drift. Docker environments, on the other hand, rely on additional tooling such as Portainer or Docker Compose to maintain the desired state across environments.

Key Takeaways

Docker provides a simpler container runtime and image management system, with Docker Swarm offering basic orchestration for smaller deployments. Kubernetes delivers the enterprise-grade orchestration that complex workloads need for high availability and scalability. Docker is a good fit for development and testing, while Kubernetes suits mission-critical applications that need more sophisticated capabilities such as service discovery, load balancing, and self-healing.

You can refer to the official Kubernetes and Docker documentation for comprehensive implementation guidance.