Traditional data center systems and software are not built for the size and complexity of cloud-scale microservices architectures. Such systems and software become harder to configure, provision, and manage as the number of nodes increases into the thousands, and they are impossible to monitor and service at that scale. Containers are changing all this, and Portworx enables containers to be used even for mission-critical data services like databases.

Performance of mission-critical data services is a primary concern of many engineering teams, so Portworx has collaborated with Intel on a reference architecture that delivers blazing-fast performance for stateful services running in containers.

Portworx-Intel Reference Architecture

The Portworx-Intel reference architecture defines how to deploy containerized applications while enabling development and operations teams to reap all the benefits of containerization. The intended audience is cloud and data center architects who are moving to or already familiar with Linux containers.

The whitepaper also illustrates how DevOps teams can deploy Portworx as the native container persistence solution on servers from any vendor running Intel x86 processors, take advantage of the Portworx scheduler integrations, and build the most efficient cloud datacenters and applications.

Finally, the paper outlines how data center and cloud architects can build large-scale container and microservices infrastructure by using Intel technology and PX-Enterprise, the powerful container-native data services software from Portworx.

Portworx PX-Enterprise Performance Test

To demonstrate the performance of Portworx PX-Enterprise in representative cloud and on-premises configurations that are based on Intel technologies, we ran the following categories of performance evaluation tests:

- Storage benchmarks to demonstrate the raw system performance of PX-Enterprise devices.

- Application performance suites to demonstrate performance of real-world applications running on a PX-Enterprise storage platform.

Test Topology and Configuration

The Portworx PX-Enterprise test setup is a five-node cluster with an external key-value store (etcd). The cluster storage comprises a homogeneous distribution of rotational and solid-state drives on each node. Each node has a dual-port Intel X520 10 Gigabit Ethernet Network Adapter. All nodes are co-located in a single facility.

PX Configuration: PX-Enterprise v1.2.8

- CPU: 2x Intel Xeon processors E5-2650 v3 (25M Cache, 2.30 GHz)

- Memory: 4x 8GB 2133MHz PC4-17000 ECC RDIMM

- Storage pool0: 1 x 120GB Intel® SSDs DC S3500 series SAS 6Gb/s 2.5″ SSD

- Storage pool1: 8 x 500GB SATA 6Gb/s 7200 RPM 2.5″ hard drive

Test Metrics

Here is a brief overview of all the test metrics.

- IO: A single input/output operation.

- Block Size: The size used for each IO operation. The test uses a block size of 64k.

- Dataset Size: The overall size of the dataset used during the test. The test uses a dataset size of 100G.

- IO Depth: The number of outstanding/queued IO requests in the system. The test uses an IO depth of 128.

- IO type: The type of IO generation used by the test tool. The test uses asynchronous, non-buffered IO.

- Throughput: The number of IO operations or transactions per second, times the IO block size.

- Latency: The completion time per IO operation.
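The throughput definition above is simple arithmetic, and a short sketch makes the units explicit. It assumes that "64k" means 64 KiB and "100G" means 100 GiB (fio's default interpretation of those suffixes). The IOPS figure in the usage line is a hypothetical input, not a measured result.

```python
def throughput_mib_per_s(iops: float, block_size_kib: int = 64) -> float:
    """Throughput = IO operations per second x IO block size, in MiB/s."""
    return iops * block_size_kib / 1024


def ios_for_dataset(dataset_gib: int = 100, block_size_kib: int = 64) -> int:
    """Number of IOs needed to cover the whole dataset once."""
    return dataset_gib * 1024 * 1024 // block_size_kib


if __name__ == "__main__":
    # 16,000 IOPS is a made-up example value, chosen only to show the units.
    print(throughput_mib_per_s(16_000))
    print(ios_for_dataset())
```

With the test's 64k block size, each gigabyte of data corresponds to 16,384 IOs, so one pass over the dataset is a little over 1.6 million operations.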

Test Methodology

Each test runs as a set of four distinct steps:

- Setup

- Execution

- Verification

- Reporting

The write test performs an appending write (hole fill) of 100 GB. To make sure that data is stable on disk before the test reports completion, fio runs with end_fsync=1. The test also makes sure that there is no dirty data in the cache before it starts. No verify options are used in fio, to avoid introducing any reads into the test.

For reads, each test is preceded by a manual data population step using a sequential write of 100 GB. Before the test, the cache is purged to make sure there are no cached reads. The block size for reads is the same as the one used in the data population step.
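As an illustration of the parameters described so far, the following sketch assembles a fio command line. The flags are standard fio options. Mapping "asynchronous, non-buffered IO" to `--ioengine=libaio` and `--direct=1` is an assumption, and the target file path is hypothetical. The actual job files used for the tests are not given in the source.

```python
import shlex


def fio_args(rw: str, filename: str, bs: str = "64k", size: str = "100G",
             iodepth: int = 128, ramp_time: int = 10) -> list[str]:
    """Build a fio argument list for a sequential 'write' or 'read' test."""
    if rw not in ("write", "read"):
        raise ValueError("rw must be 'write' or 'read'")
    args = [
        "fio",
        f"--name=seq-{rw}",
        f"--filename={filename}",   # hypothetical path on the volume under test
        f"--rw={rw}",               # sequential write or sequential read
        f"--bs={bs}",
        f"--size={size}",
        f"--iodepth={iodepth}",
        "--ioengine=libaio",        # assumption: asynchronous IO via libaio
        "--direct=1",               # assumption: non-buffered IO via O_DIRECT
        f"--ramp_time={ramp_time}",
    ]
    if rw == "write":
        # Flush data to stable storage before the job reports completion.
        args.append("--end_fsync=1")
    return args


if __name__ == "__main__":
    print(shlex.join(fio_args("write", "/mnt/pxd-test/fio.dat")))
```

No verify option appears in the argument list, which matches the stated goal of keeping reads out of the write test.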

In addition to the fio stats, /proc/diskstats is captured before and after each test to verify that all generated IOs have reached the storage or have been served from it. Each test is run on a fresh installation to avoid introducing variability across runs. Each test is repeated three times, and the results are averaged for reporting. A ramp time of 10 seconds is used for each test to allow it to stabilize before measurement starts.
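The verification and reporting steps can be sketched as follows. This is a minimal illustration, not the harness used for the tests: it parses two snapshots of /proc/diskstats text, computes how many bytes a device read and wrote between them, and averages per-run results. The field positions follow the Linux kernel's documented diskstats layout, where sector counts are in 512-byte units. The function names are hypothetical.

```python
from statistics import mean

SECTOR_BYTES = 512  # /proc/diskstats reports sectors in 512-byte units


def parse_diskstats(text: str) -> dict[str, dict[str, int]]:
    """Map device name -> sectors read and written, from /proc/diskstats text."""
    stats = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 14:
            continue  # skip blank or malformed lines
        # fields: major, minor, name, reads, reads_merged, sectors_read,
        #         ms_reading, writes, writes_merged, sectors_written, ...
        stats[fields[2]] = {
            "sectors_read": int(fields[5]),
            "sectors_written": int(fields[9]),
        }
    return stats


def bytes_moved(before: str, after: str, device: str) -> dict[str, int]:
    """Bytes read and written on one device between two snapshots."""
    b = parse_diskstats(before)[device]
    a = parse_diskstats(after)[device]
    return {
        "read_bytes": (a["sectors_read"] - b["sectors_read"]) * SECTOR_BYTES,
        "written_bytes": (a["sectors_written"] - b["sectors_written"]) * SECTOR_BYTES,
    }


def average_runs(results: list[float]) -> float:
    """Average the per-run results (the tests use three runs)."""
    return mean(results)
```

In a write test, the `written_bytes` delta should be at least the dataset size and the `read_bytes` delta should stay near zero. The reverse should hold for a read test.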

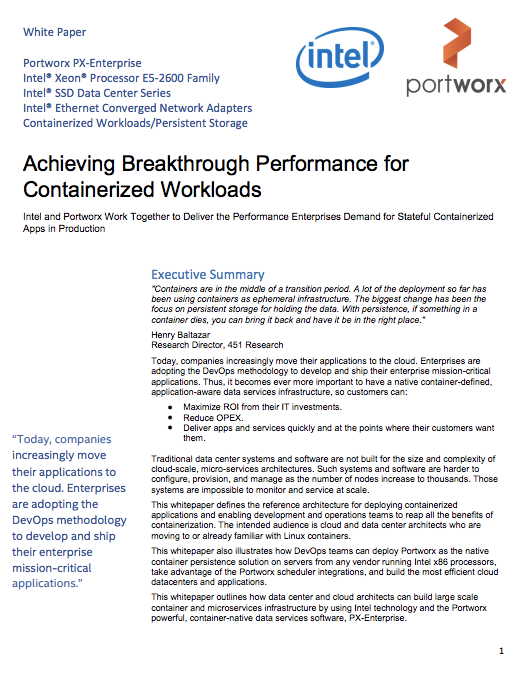

Sequential Write Performance

The following chart shows the sequential write performance for the Intel Xeon Processor E5-2699 v3-based platform.

For bandwidth-intensive workloads, as shown above, Portworx PX-Enterprise performance scales with the increase in IO block size. When doing local IOs only, PX-Enterprise uses the cumulative bandwidth of all available drive spindles. With replication turned on, PX-Enterprise performs very close to local write, even with synchronous replication for every IO operation. The replication overhead is less than 25% and stays uniform across the various block sizes.

PX-Enterprise uses the NVMe drive available on the Intel server platform as a logging device to provide strict consistency guarantees across the cluster. That feature enables containerized application workloads with high bandwidth, strict consistency, and availability requirements to run on the Intel Xeon E5 V4 architecture and use its power to the fullest. Using the NVMe drive as a logging device also lets such workloads offload these guarantees from the application to the storage layer. Portworx PX-Enterprise enables containerized applications to gain the maximum throughput from their infrastructure for operations such as log writes, log updates, and multi-phase writes and commits.

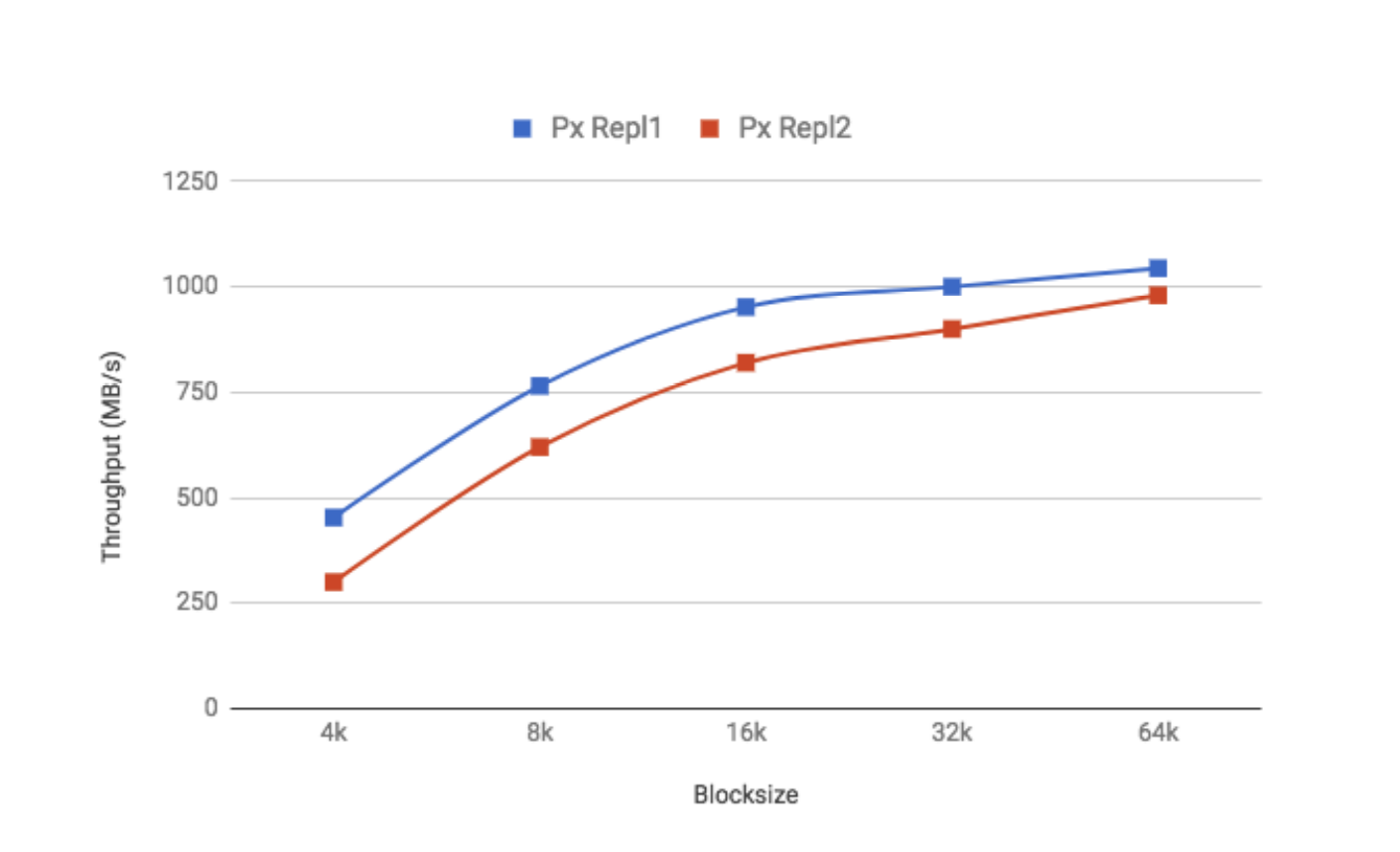

Sequential Read Performance

The following chart shows the sequential read performance for the Intel Xeon Processor E5-2699 v3-based platform.

Sequential reads also use the aggregated bandwidth of all available drive spindles. Data is spread across all available drives on all available replicas. As shown in the preceding chart, performance scales with IO block size and incurs minimal overhead (<20%), even when performing non-local reads.

PX-Enterprise supports bandwidth-intensive applications such as Kafka, log replays, and rich media workflows, including media transcoding and content delivery. By combining the Intel high-throughput I/O and memory architecture with the Portworx streaming-optimized I/O engine for containerized workloads, PX-Enterprise delivers high throughput to containerized applications.

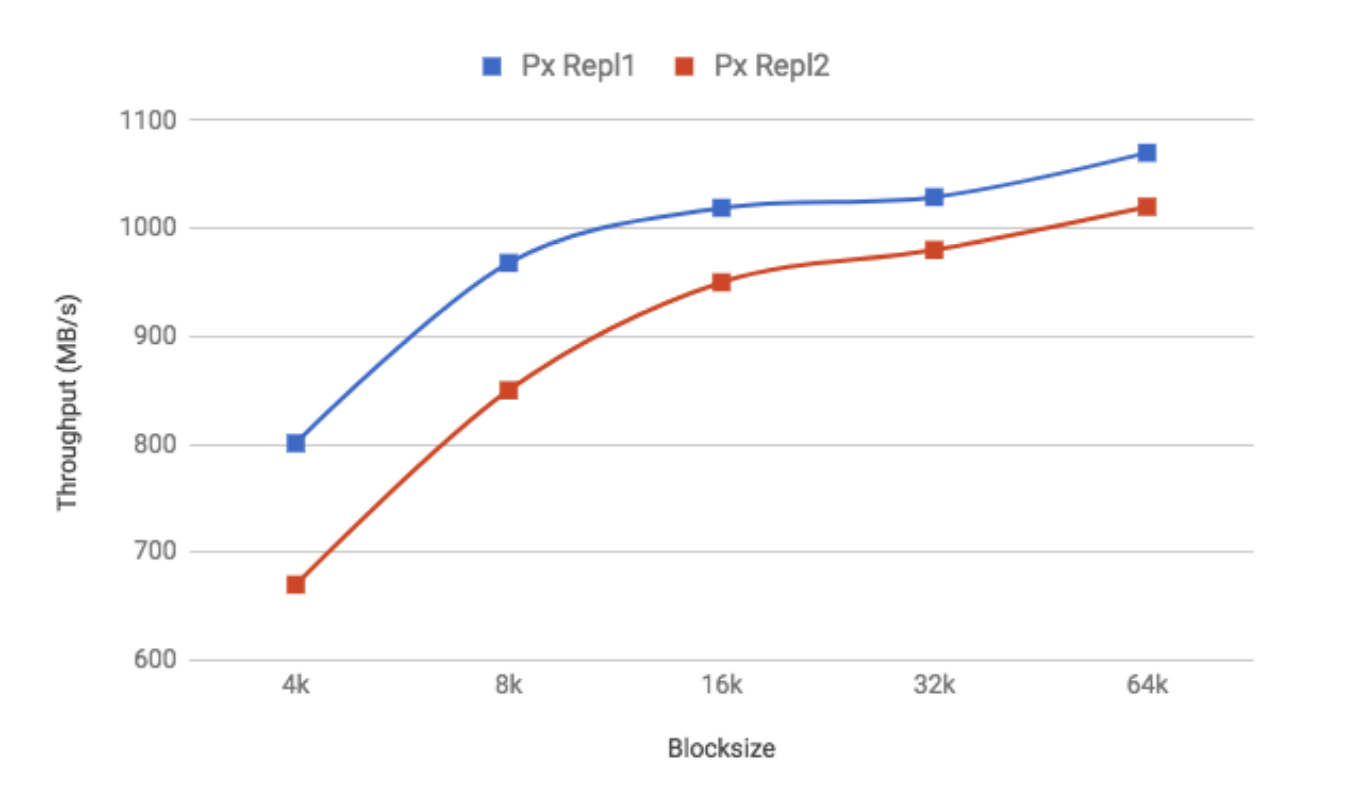

MySQL Performance with Replication

For this test, we use the SysBench OLTP application performance benchmark. It runs as a two-step process. The first step is dataset population, which creates the tables in the database according to the provided configuration. The second step is load generation and measurement, which generates IO to the backing storage with approximately 75% reads and 25% writes.

Test Metrics

Here is a brief overview of all the test metrics.

- Test: The test profile to run, which is OLTP in this case.

- Db-driver: The database driver, which is MySQL in this case.

- Mysql-table-engine: The database engine. The test uses the default MySQL engine, MyISAM.

- Oltp-table-size: The number of rows in the table. The test uses 10 million rows.

- Oltp-read-only: Read-only or read-write test. The test uses OLTP read-write mode.

- Num-threads: The number of threads. The test uses a variable number of threads, from 1 to 256.
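The options above correspond to the legacy SysBench (0.4-style) command line. The sketch below builds the prepare and run commands from them. It assumes that legacy syntax, assumes a power-of-two sweep of thread counts between 1 and 256 (the source gives only the range), and omits connection settings such as host, user, and password, which the source does not specify.

```python
import shlex


def sysbench_cmd(step: str, threads: int = 1,
                 table_size: int = 10_000_000) -> list[str]:
    """Build a legacy-style sysbench OLTP command; step is 'prepare' or 'run'."""
    if step not in ("prepare", "run"):
        raise ValueError("step must be 'prepare' or 'run'")
    return [
        "sysbench",
        "--test=oltp",
        "--db-driver=mysql",
        "--mysql-table-engine=myisam",
        f"--oltp-table-size={table_size}",
        "--oltp-read-only=off",          # read-write mode
        f"--num-threads={threads}",
        step,
    ]


# Assumption: thread counts double from 1 up to 256.
THREAD_SWEEP = [2 ** i for i in range(9)]

if __name__ == "__main__":
    print(shlex.join(sysbench_cmd("prepare")))       # step 1: dataset population
    for n in THREAD_SWEEP:                           # step 2: load generation
        print(shlex.join(sysbench_cmd("run", threads=n)))
```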

As shown in the following chart, PX-Enterprise performance with two-node replication (PXD-repl2) is identical to PX-Enterprise performance without replication (PXD). This result demonstrates that customers can use the Portworx PX replication factor and let Portworx PX manage highly available access to their data with a very low performance penalty.

Combining Portworx PX-Enterprise with Intel Xeon processor-based platforms, NVMe technology, and Intel Data Center Ethernet technology enables customers to deliver unprecedented throughput and the lowest latencies for containerized workloads.