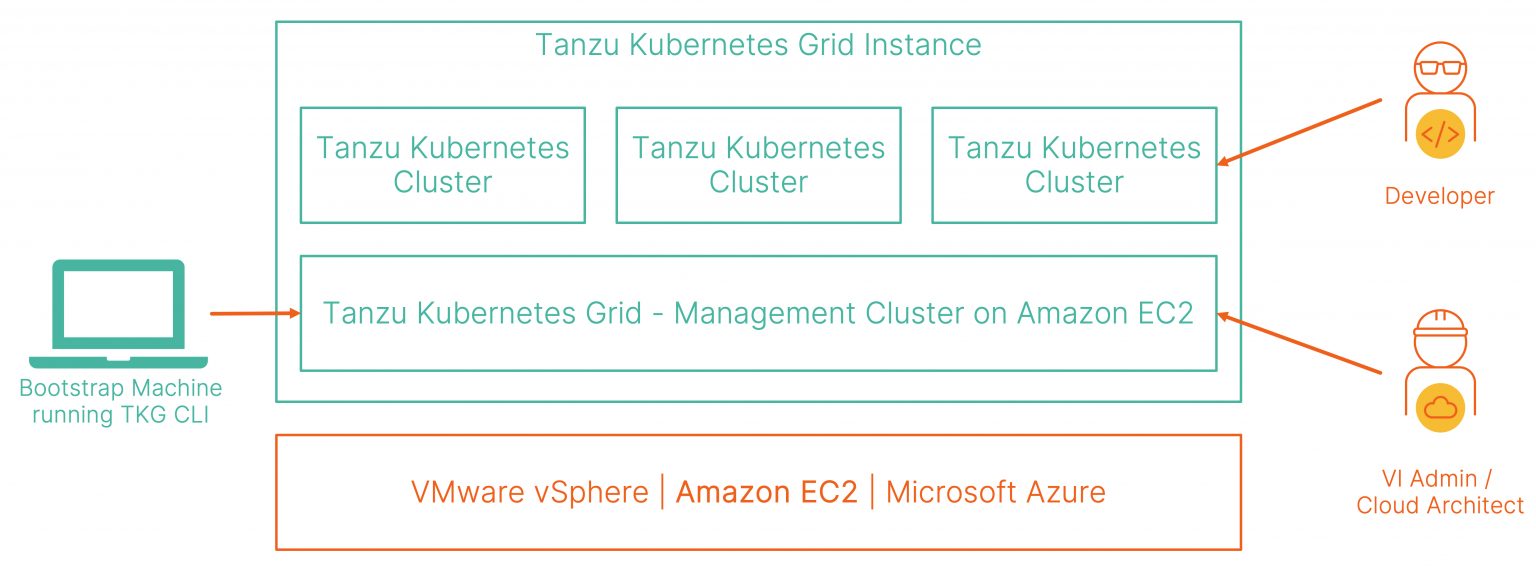

VMware Tanzu Kubernetes Grid (TKG) provides organizations with a consistent, upstream-compatible Kubernetes distribution that can be deployed in on-prem software-defined data centers (SDDC), on Amazon EC2, or on Microsoft Azure. TKG delivers a Kubernetes experience that is tested, signed, and supported by VMware. As a VI Admin or Operator, you can deploy the TKG management cluster on the infrastructure of your choice (vSphere, Amazon EC2, or Azure) and deliver a Kubernetes-as-a-service experience for your developers. The management cluster serves as the primary management and operational cluster for the Tanzu Kubernetes Grid instance and allows developers to deploy additional Tanzu Kubernetes clusters for their applications.

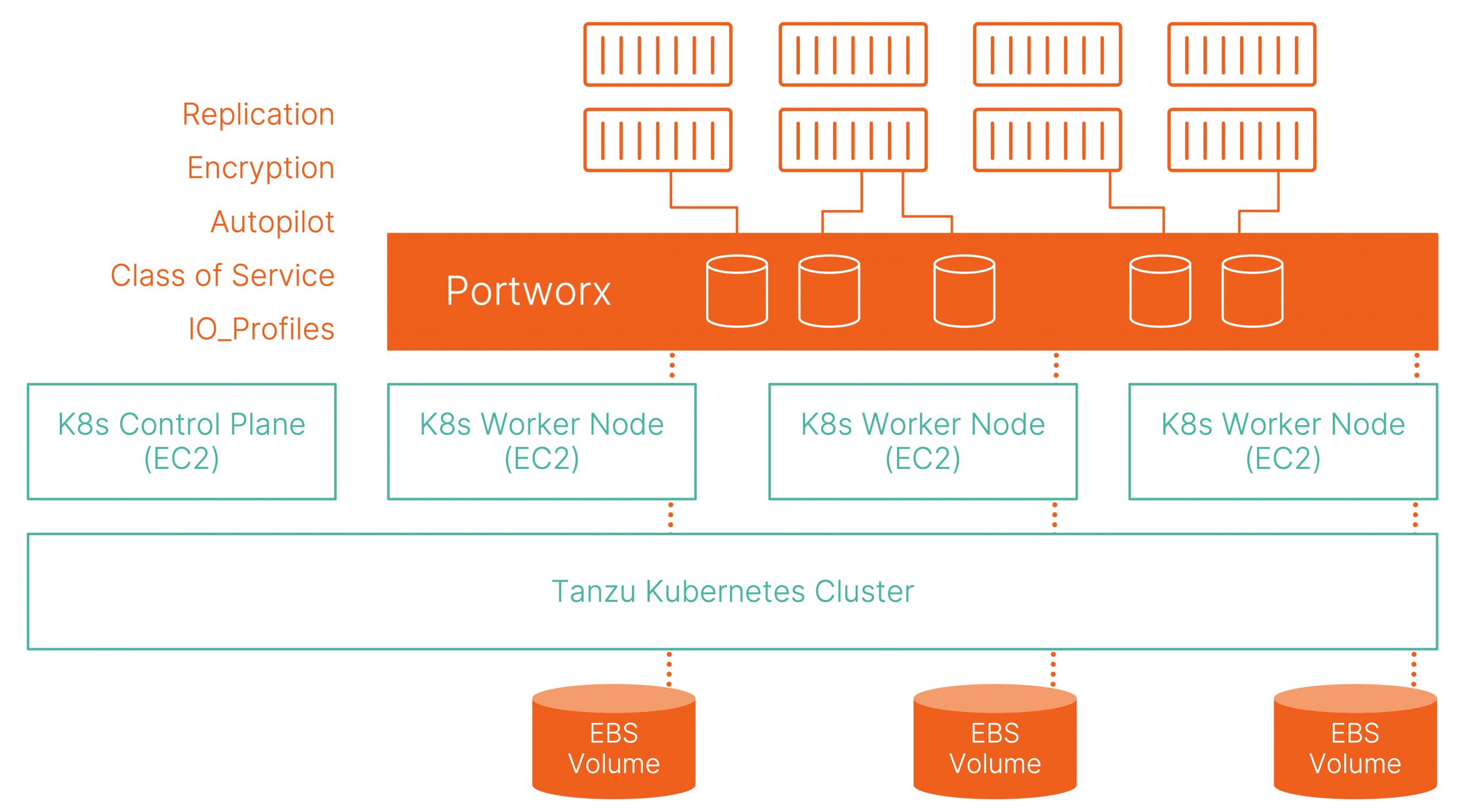

In this blog, we will discuss how you can provide cloud-native storage capabilities for your Tanzu Kubernetes clusters using Portworx. By default, Tanzu Kubernetes clusters running on Amazon EC2 use the AWS EBS storage class to provide persistent storage for your stateful applications. Amazon EBS was built to provide a layer of persistent storage for your EC2 instances running in AWS. However, using EBS for your Kubernetes clusters has a few limitations, such as the lack of user-controlled high availability and replication, the lack of cross-Availability Zone (AZ) fault tolerance, and a limit on the number of EBS volumes you can attach to a single EC2 instance. If you want to learn more about this, read our previous blogs.

To ensure that we provide the best solution for customers, we recommend using Portworx as the persistent storage layer for your Tanzu Kubernetes clusters. Portworx was built from the ground up for Kubernetes and includes features like native high availability, replication, encryption, snapshots, disaster recovery, etc.

In the remainder of this blog, we will look at how you can get started with Portworx for your Tanzu Kubernetes Clusters running on Amazon EC2.

Before we deploy the Tanzu Kubernetes cluster, you will need a Tanzu Kubernetes Grid management cluster running on Amazon EC2 in either a Dev or Prod topology. Once you have your management cluster running, you can use the following commands to list your management clusters and switch context to the one you want to use:

tkg get mc

kubectl config use-context <<clustername-admin@clustername>>

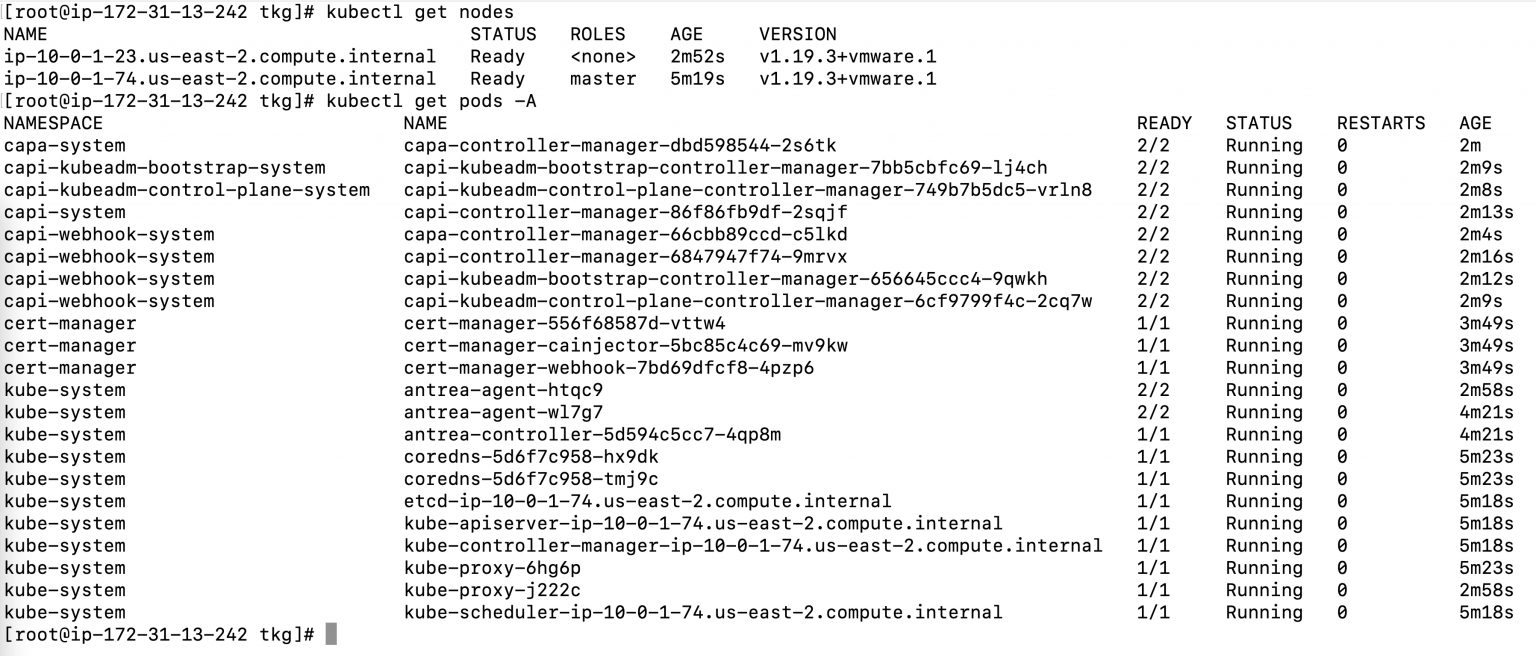

Once you are connected to the management cluster, run a couple of simple commands to confirm that everything is online and operational:
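The exact commands are not reproduced here. As a minimal sketch, assuming the usual kubectl health checks are what is meant (listing nodes and listing pods in all namespaces), the checks could be wrapped like this. The function name `check_mgmt_cluster` is hypothetical and not part of the tkg or kubectl CLIs:

```shell
# Hypothetical helper: basic health checks against the current kubectl context.
# Assumption: "online and operational" means nodes are Ready and pods are Running.
check_mgmt_cluster() {
  # List the nodes; each one should report a Ready status.
  kubectl get nodes || return 1
  # List pods in every namespace; system and controller pods should be Running.
  kubectl get pods --all-namespaces
}
```

After switching context to the management cluster, run `check_mgmt_cluster` and review the STATUS columns in the output.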

Next, let’s use the “tkg create cluster” command with the dry-run flag to generate a yaml file that can be used to deploy Tanzu Kubernetes clusters.

tkg create cluster <<name_of_the_TanzuKubernetesCluster>> --kubernetes-version=v1.19.1+vmware.2 --plan=dev -w 3 --dry-run > <<nameoffile.yaml>>

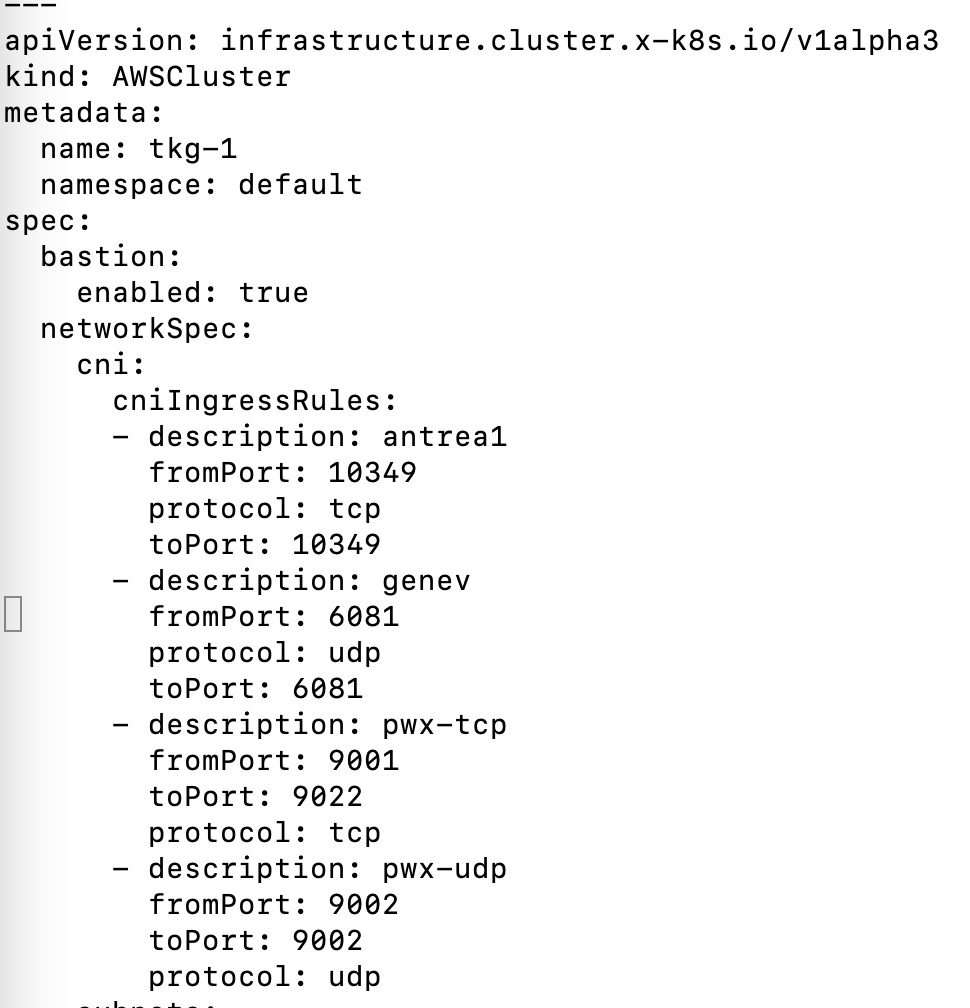

Next, edit the YAML file and append the inbound rules that Portworx needs to the spec section of the AWSCluster resource:

This will ensure that any worker nodes that are provisioned on Day 0 or later will have the necessary inbound ports open to participate in the Portworx storage cluster. To deploy the Tanzu Kubernetes Cluster, use the following command:

kubectl apply -f <<nameoffile.yaml>>

This will deploy all the Kubernetes and ClusterAPI resources needed for a fully functional Tanzu Kubernetes cluster. You can monitor the cluster creation by using the following commands:

tkg get clusters

kubectl get machines

kubectl get machinedeployment
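If you would rather wait in a script than rerun these commands by hand, the following is a rough sketch of a polling loop. It assumes the current context is the management cluster, that the Machine objects live in the current namespace, and that a machine is ready once its `status.phase` is `Running`. The function name `wait_for_machines` and its defaults are hypothetical:

```shell
# Hypothetical helper: poll Cluster API Machine objects until all report "Running".
# Arguments: maximum attempts (default 60) and seconds between attempts (default 10).
wait_for_machines() {
  attempts="${1:-60}"
  interval="${2:-10}"
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # Print one phase per line, for example Provisioning, Provisioned, or Running.
    phases="$(kubectl get machines -o jsonpath='{range .items[*]}{.status.phase}{"\n"}{end}')"
    # Succeed once at least one machine exists and no machine is in another phase.
    if [ -n "$phases" ] && ! printf '%s\n' "$phases" | grep -qv '^Running$'; then
      echo "all machines Running"
      return 0
    fi
    i=$((i + 1))
    sleep "$interval"
  done
  echo "timed out waiting for machines" >&2
  return 1
}
```

For example, `wait_for_machines 90 10` checks every 10 seconds for up to 90 attempts and returns a non-zero status on timeout.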

Once your cluster is up and running, use the following two commands to fetch its credentials and switch context to it, so that you can deploy Portworx.

tkg get credentials <<name_of_the_TanzuKubernetesCluster>>

kubectl config use-context <<clustername-admin@clustername>>

Before we deploy Portworx, you need to add these IAM roles/permissions to your EC2 nodes. This will give your EC2 instances the needed permissions to provision and mount EBS volumes. Now you are ready to deploy Portworx on your Tanzu Kubernetes cluster. Here are the steps to do that:

- To generate a spec, navigate to central.portworx.com/specGen/wizard, select “Portworx Enterprise,” and click “Next.”

- Check the “Use the Portworx Operator” option. Select the version of Portworx you want to deploy. For this blog, we used the 2.6 version.

- If you have an external etcd cluster running, enter the endpoint details. If this is a test/dev environment or a smaller cluster, you can choose the built-in option as well. Click “Next.”

- We will select “Cloud -> AWS -> Enter the disk details.” When you deploy Portworx, it will automatically provision EBS volumes that match the specs entered here and use them to create the Portworx storage pool. You can keep the default settings and click “Next.”

- If you want, you can customize network settings or leave them as is. Click “Next.”

- In “Customize,” we will select “None,” leave everything else as is, and click “Finish.”

- Review and accept the Portworx Enterprise License Agreement.

- Now you have two simple commands that you can use to deploy a Portworx cluster in your Tanzu Kubernetes cluster.

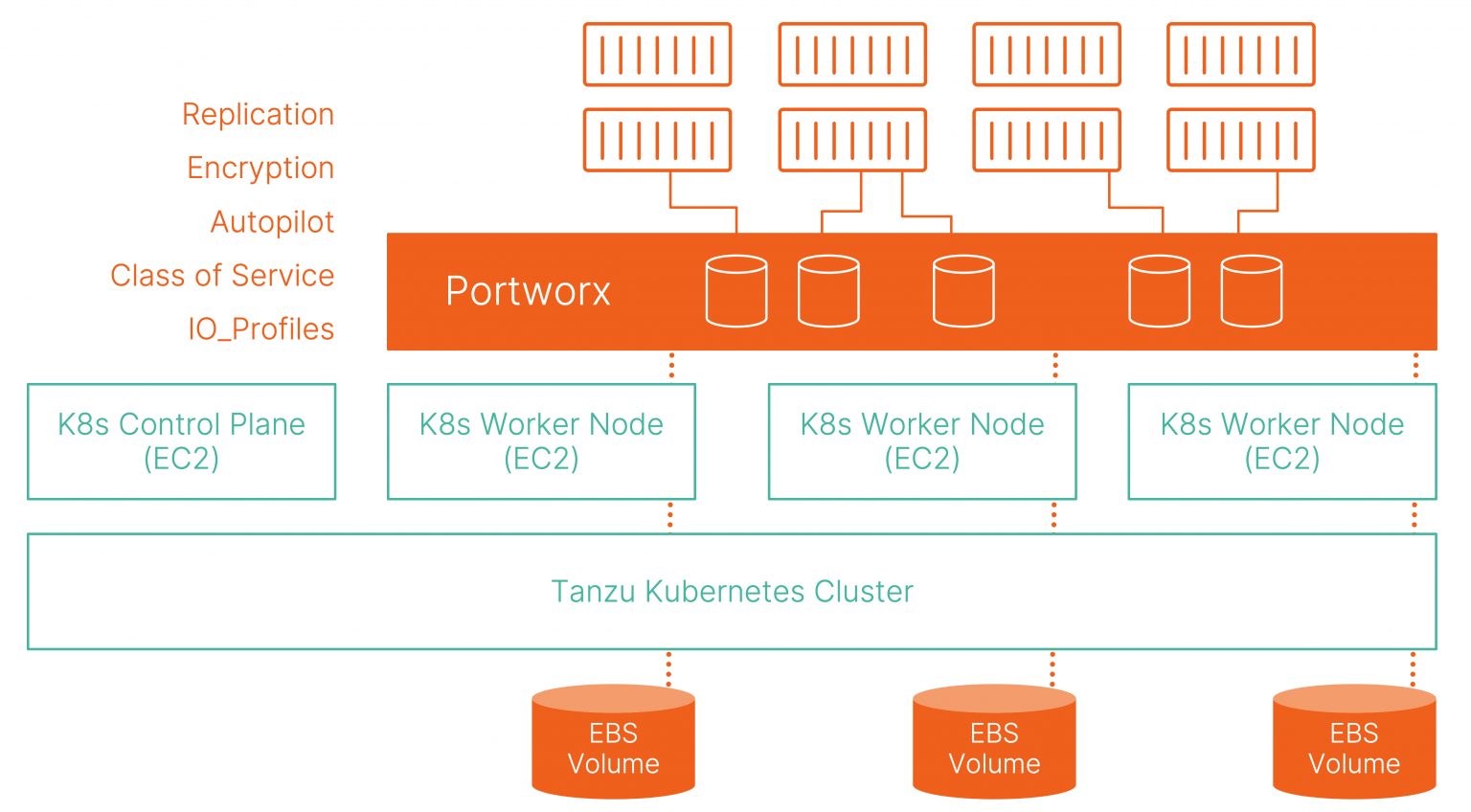

Once you have everything running as expected, you should see an environment like the image below:
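One way to confirm this from the command line is sketched below. It assumes Portworx was installed in the `kube-system` namespace, that its pods carry the `name=portworx` label, and that `pxctl` is available at `/opt/pwx/bin/pxctl` inside the pod. Adjust these if your generated spec differs. The function name `px_status` is hypothetical:

```shell
# Hypothetical helper: list Portworx pods and print the Portworx cluster status.
# Assumptions: namespace kube-system (override with $1) and pod label name=portworx.
px_status() {
  ns="${1:-kube-system}"
  # Show every Portworx pod and the node it runs on.
  kubectl get pods -n "$ns" -l name=portworx -o wide || return 1
  # Pick the first Portworx pod so pxctl can be run inside it.
  px_pod="$(kubectl get pods -n "$ns" -l name=portworx -o jsonpath='{.items[0].metadata.name}')"
  [ -n "$px_pod" ] || { echo "no Portworx pods found in $ns" >&2; return 1; }
  # Print the cluster summary, including storage nodes and pool capacity.
  kubectl exec "$px_pod" -n "$ns" -- /opt/pwx/bin/pxctl status
}
```

Running `px_status` against the Tanzu Kubernetes cluster context should list one Portworx pod per worker node, followed by the cluster status summary.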

If you want to scale your Tanzu Kubernetes cluster, you can use the following command:

tkg scale cluster <<name_of_the_TanzuKubernetesCluster>> --worker-machine-count 5

This will not only add two additional worker nodes to your Tanzu Kubernetes cluster (going from three workers to five), but it will also expand your Portworx storage cluster and add more capacity to your storage pool. Since Portworx was deployed using the Operator, it will monitor your Kubernetes cluster for new worker nodes and automatically add them to the Portworx cluster as well. This is important because developers can scale their clusters without any admin intervention, which increases developer velocity and reduces delays in the application development lifecycle.

I hope this blog helps you achieve best-in-class data services for your Tanzu Kubernetes cluster using Portworx. If you prefer to watch a video walkthrough of the steps described in this blog, you will find it below:


Bhavin Shah

Sr. Technical Marketing Manager | Cloud Native BU, Pure Storage