This is a micro-blog post in a series about common errors that can occur when running Kubernetes on Azure.

As the leader in running production stateful services using containers, Portworx has worked with customers running all kinds of apps in production. Two of the most frequent errors we see from our customers are `FailedAttachVolume` and `FailedMount`, which can occur when using Azure Disk volumes with Kubernetes.

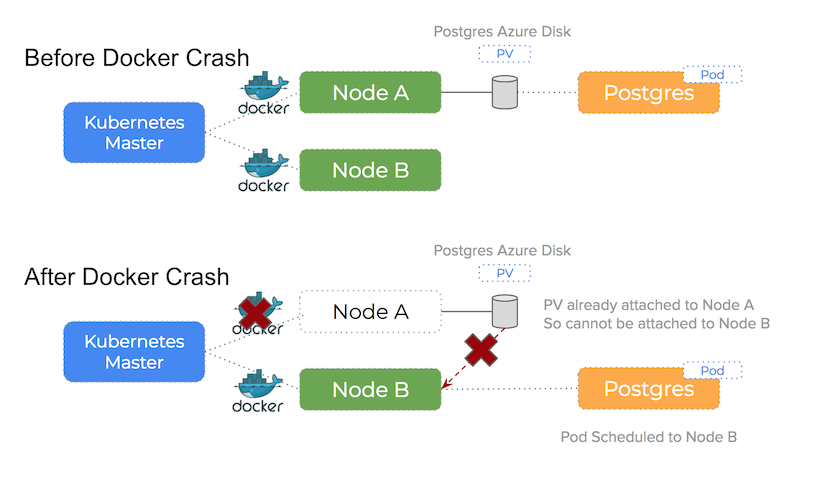

This post zooms in on errors that can happen if the Docker daemon stops or crashes. In this case, the Kubernetes master can no longer determine the health of the node, so the pod is rescheduled to another node, which can result in `FailedAttachVolume` and `FailedMount` warnings.

Docker daemon crash or stop on Azure

What happens if the docker daemon has an issue and crashes?

Because the pods' containers are essentially processes in their own right, they keep running on that node. Kubernetes will, however, schedule new pods onto other nodes, because the Kubernetes master can no longer determine whether the containers are still running on the original node. Without Docker to report the status of its containers, Kubernetes cannot be sure that they are in fact running.
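The key point is that the master acts only on what the node *reports*, not on what is actually running on it. The toy Python model below illustrates this. It is a simplified sketch, not Kubernetes' real node lifecycle logic: `Node`, `pods_to_reschedule`, and `GRACE_SECONDS` are hypothetical names, and the 40-second grace period is an assumed value chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical grace period, in seconds, before a silent node is treated as unhealthy.
GRACE_SECONDS = 40.0


@dataclass
class Node:
    name: str
    last_report: float  # when this node last reported status to the master
    scheduled_pods: list[str] = field(default_factory=list)  # what the master believes runs here
    actually_running: set[str] = field(default_factory=set)  # ground truth on the host, invisible to the master


def pods_to_reschedule(nodes: list[Node], now: float) -> dict[str, list[str]]:
    """Return, per stale node, the pods the master would try to place elsewhere.

    Only last_report is consulted. actually_running is deliberately ignored,
    because the master has no way to observe it once Docker stops reporting.
    """
    moves: dict[str, list[str]] = {}
    for node in nodes:
        if now - node.last_report > GRACE_SECONDS:
            moves[node.name] = list(node.scheduled_pods)
    return moves


if __name__ == "__main__":
    healthy = Node("k8s-agent-0", last_report=100.0,
                   scheduled_pods=["web"], actually_running={"web"})
    # Docker stopped at t=50: the mysql container still runs, but nothing reports it.
    silent = Node("k8s-agent-1", last_report=50.0,
                  scheduled_pods=["mysql-app"], actually_running={"mysql-app"})
    print(pods_to_reschedule([healthy, silent], now=110.0))  # {'k8s-agent-1': ['mysql-app']}
```

Even though `mysql-app` is still running on `k8s-agent-1`, the model marks it for rescheduling, which is exactly the situation that sets up the volume problem described next.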

You can see how this happens in the following diagram:

A TLDR of the problem:

When an event requires a pod to be rescheduled and the scheduler chooses a different node in the cluster, you will not be able to attach the Persistent Volume to the new host if Azure sees the volume as already attached to an existing host.

In our experience, 90% of the issues with Kubernetes on Azure happen because of this problem. Because the Azure Disk volume is still attached to another (potentially broken) host, it cannot be attached to, and therefore cannot be mounted on, the new host that Kubernetes has scheduled the pod onto.
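The constraint behind this failure can be modelled in a few lines. The sketch below is a toy, not the Azure or Kubernetes API: `AzureDiskModel` and `MultiAttachError` are hypothetical names, and the only behaviour it assumes is the one described above, namely that a disk attached to one host cannot be attached to another until it has been detached.

```python
from typing import Optional


class MultiAttachError(Exception):
    """Raised when a second node asks for a disk that is already attached elsewhere."""


class AzureDiskModel:
    """Toy stand-in for an Azure Disk: at most one node may hold it at a time."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.attached_to: Optional[str] = None

    def attach(self, node: str) -> None:
        # Re-attaching to the same node is a no-op; any other node is refused.
        if self.attached_to is not None and self.attached_to != node:
            raise MultiAttachError(
                f'Multi-Attach error for volume "{self.name}": '
                f"already attached to {self.attached_to}"
            )
        self.attached_to = node

    def detach(self, node: str) -> None:
        # In this toy model nothing frees the disk until detach() is
        # called for the node that currently holds it.
        if self.attached_to == node:
            self.attached_to = None


if __name__ == "__main__":
    disk = AzureDiskModel("pvc-example")  # hypothetical volume name
    disk.attach("node-a")                 # original node, where Docker then stops
    # The pod is rescheduled before the disk has been detached from node-a.
    try:
        disk.attach("node-b")
    except MultiAttachError as err:
        print(err)
```

Running it prints `Multi-Attach error for volume "pvc-example": already attached to node-a`, mirroring the shape of the `FailedAttachVolume` event shown in the error output.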

Error Output

You can trigger this problem by running `sudo systemctl stop docker` on the node that is running a pod. Running `kubectl get ev -o wide` then shows the following error output:

```
$ kubectl get ev -o wide
LASTSEEN   FIRSTSEEN   COUNT   NAME                       KIND   SUBOBJECT   TYPE      REASON               SOURCE
1m         1m          93      mysql-app-1465154728-m..   Pod                Warning   FailedAttachVolume   attachdetach                    Multi-Attach error for volume "pvc-e89b7de3-17fb-11e8-a49a-0022480733dc" Volume is already exclusively attached to one node and can't be attached to another
2m         2m          1       mysql-app-3481097817-h..   Pod                Warning   FailedMount          kubelet, k8s-agent-24653059-0   Unable to mount volumes for pod "mysql-app-3481097817-h3g7w_testrun3(0f5b919d-17fc-11e8-a49a-0022480733dc)": timeout expired waiting for volumes to attach/mount for pod "testrun3"/"mysql-app-3481097817-h3g7w".
```
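On a busy cluster the wide table can be hard to scan. As a sketch, assuming you pipe in the JSON form of the same events (`kubectl get events -o json`), the Python filter below keeps only `Warning` events whose reason is `FailedAttachVolume` or `FailedMount`. It reads only the standard Event fields `type`, `reason`, `message`, and `involvedObject.name`; the script name `volume_warnings.py` is a placeholder.

```python
import json
import sys

# Event reasons discussed in this post.
VOLUME_REASONS = {"FailedAttachVolume", "FailedMount"}


def volume_warnings(event_list: dict) -> list[tuple[str, str, str]]:
    """Return (pod, reason, message) for every volume-related Warning event.

    event_list is the parsed output of `kubectl get events -o json`,
    a List object whose "items" are Event objects.
    """
    hits = []
    for ev in event_list.get("items", []):
        if ev.get("type") == "Warning" and ev.get("reason") in VOLUME_REASONS:
            pod = ev.get("involvedObject", {}).get("name", "?")
            hits.append((pod, ev["reason"], ev.get("message", "")))
    return hits


def main() -> None:
    # Only read stdin when something is piped in; otherwise print usage.
    raw = "" if sys.stdin is None or sys.stdin.isatty() else sys.stdin.read()
    if not raw.strip():
        print("usage: kubectl get events -o json | python volume_warnings.py")
        return
    for pod, reason, message in volume_warnings(json.loads(raw)):
        print(f"{pod}\t{reason}\t{message}")


if __name__ == "__main__":
    main()
```

Filtering on the `reason` field rather than grepping the table text avoids depending on column widths, which vary between kubectl versions.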

Portworx and cloud native storage

To understand how Portworx can help you avoid these problems, please read the main blog post.

In summary:

Portworx takes an entirely different architectural approach. When you use Portworx as your Kubernetes storage driver on Azure, this problem is solved because:

An Azure Disk volume stays attached to a node and will never be moved to another node.

Conclusion

Again, make sure you read the parent blog post to understand how Portworx can help you avoid these errors.

Also, check out the other blog posts in the Azure series:

Take Portworx for a spin today, and be sure to check out the documentation for running Portworx on Kubernetes!