
In today’s Architect’s Corner, we speak with Professor David J. Malan and Kareem Zidane. David is Gordon McKay Professor of the Practice of Computer Science and professor of CS50, a popular intro course on computer science. Kareem is a software developer who over the last few months has completely rebuilt CS50 IDE, the Integrated Development Environment used by CS50 to provide an on-demand learning environment for tens of thousands of students.

Can you tell us a little about the CS50 course?

David Malan: CS50 is an introductory course in computer science for majors and non-majors alike. It has roughly 800 students on campus, 100 students through an extension school, 200 students at Yale University, and to date over a million registrants through edX, where the course is freely available as a MOOC (Massive Open Online Course). And since 2007, all of the course’s materials have been freely available on a number of channels including YouTube, iTunes U, and the like, as OpenCourseWare. So we have quite a few students both on campus and off!

It was some years ago that we aspired to make the course not only a passive experience for those students, whereby they could just watch the lectures and read the materials, but also an active experience where they could actually do the course’s problem sets (i.e., programming assignments), since it’s in the doing of the problems that students learn the most.

Early on, we provided students with a downloadable virtual machine called the CS50 Appliance. This was a virtual machine image that simply required their choice of hypervisor, but there were often non-trivial technical difficulties when it came to getting that working on students’ computers, and so we gradually transitioned to a cloud-based environment, thanks to our friends in Amsterdam at Cloud9.

Cloud9 was recently acquired by AWS, and thanks to Kareem, CS50 has been transitioning the infrastructure over from the original Cloud9 topology to AWS. At that point we had both the opportunity and the challenge to implement the entire orchestration of the backend for this cloud-based infrastructure and to tackle all the challenges that arose along the way. Among the challenges was storage: how best to provide students with (and attach to any container) their own home directory in the cloud that would persist for as long as they’re with the course.

Can you talk a little bit about your roles with respect to CS50?

David Malan: Sure, I’ve been teaching the course since 2007, and I actually took the course myself back in 1996, when I was an undergrad. So I somehow came full circle.

Kareem Zidane: I’m a software developer and I have been working with CS50 for over two years now. I work on different projects that serve CS50 and other courses here on campus and online as well. In particular, I’ve been developing much of the frontend and the backend, including infrastructure orchestration most recently, of the container-based IDE environment that students use when they take CS50.

Can you talk about why you decided to use containers to provide CS50 students with an IDE?

David Malan: One of the most compelling aspects of containers, I think, is the speed with which they can be started. Indeed, one of the frustrations with the CS50 Appliance was just how long it took for it to start up, not only on students’ computers but on our own as well. And the process of building that appliance or making changes to it was tantamount to reinstalling an entire operating system. Thanks to Docker and containerization more generally, it’s a much more pleasant experience now to create an image from a Dockerfile based on a set of rules, and then to start the container within seconds and not minutes.

Kareem Zidane: That is a good summary. The thing that I would add is cost and resource effectiveness. We don’t need to run a separate VM for each student. In our case, we can just run multiple containers on the same host, have them share compute resources, and keep our EC2 costs down. Additionally, certain tasks, such as starting containers, stopping them when they go idle for a while, monitoring, and certain DNS configurations that we needed, were easier to implement and manage with a container orchestrator such as Kubernetes.

What were some of the challenges that you had to overcome in order to run the CS50 IDE in containers?

Kareem Zidane: We see about 4,000 or 5,000 students per day using our online IDE and perhaps as many as 1,000 more students on campus during the semester. So we had to think about building the system to scale to those levels.

We wanted a service that would enable us to easily start and stop Docker containers as needed. These containers would be based on an image that we develop and maintain. Ideally, this image would be cached for faster start times. Each of these containers would also need to be exposed to the internet and ideally have a fixed DNS name. We also wanted to be able to persist at least one folder per container, with a maximum size. The maximum size is important because we didn’t want students, or in some cases fraudulent users, to have an unlimited amount of space or to overwhelm the space we made available. Unfortunately, we couldn’t find a service that would do all of that for us, so we had to build something ourselves.

Our first approach was to give each student a container running on a micro EC2 instance with an attached EBS volume for storing files and folders belonging to their assignments and projects that they needed to persist. But there were a few problems with this approach.

First of all, some users just check the CS50 IDE out once and then maybe come back months later, or they might never come back, in which case we would end up paying for resources that are not (actively) being used.

The other problem is that there’s a cost for the size of the provisioned EBS volume, even if the student isn’t using the full storage. So if we have 50,000 students using the IDE and we allocate 1GB each, that is 50 terabytes of EBS storage we need, even if students are only consuming a fraction of that.
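The arithmetic behind that figure can be sketched as follows. The student count and the 1GB allocation come from the example above; the average actual usage of 0.1GB per student is a made-up number, used only to illustrate the gap between provisioned and consumed storage.

```python
def provisioned_vs_used_gb(students, provisioned_gb_each, avg_used_gb_each):
    """Return (provisioned, used) storage in GB across all students."""
    provisioned = students * provisioned_gb_each
    used = students * avg_used_gb_each
    return provisioned, used


# 50,000 students with 1 GB each, as in the interview.
# avg_used_gb_each=0.1 is a hypothetical figure, not a measured one.
provisioned, used = provisioned_vs_used_gb(50_000, 1.0, 0.1)

# With per-student EBS volumes you pay for `provisioned`, not `used`.
print(f"provisioned: {provisioned / 1000:.0f} TB")
print(f"actually used (assumed): {used / 1000:.1f} TB")
```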

This approach had two more problems. First, an EC2 instance and its EBS volumes have to be in the same availability zone, which is limiting. Second, if we were to run multiple containers on the same EC2 instance, we could only attach up to 20 EBS volumes per host, which would have capped the number of containers on that host regardless of whether there were enough compute resources to run more.
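To make the attachment limit concrete, here is a small sketch of how one volume per container caps density on a host. The limit of 20 comes from the interview; the host size and per-container CPU request are hypothetical values chosen for illustration.

```python
def max_containers_per_host(host_cpu_millicores, cpu_per_container_millicores,
                            max_volumes_per_host):
    """Containers a host can run when each container needs its own volume."""
    by_compute = host_cpu_millicores // cpu_per_container_millicores
    # With one attached volume per container, the smaller bound wins.
    return min(by_compute, max_volumes_per_host)


# Hypothetical host: 8 vCPUs (8000 millicores), 100 millicores per container.
# Compute alone would allow 80 containers, but the volume limit allows 20.
capacity = max_containers_per_host(8000, 100, 20)
print(capacity)
```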

Since we had these challenges with EBS, we figured “why not switch to S3?” which would perhaps be more cost-effective. One of the challenges of this approach was that there was no clean way in S3 to set a maximum storage size for each student. There was no way to say, this student is going to get this prefix in S3, but the objects under this prefix can never exceed 2GB, for example. Another problem we started noticing a bit later was that when a student started their IDE, we had to download their data from S3 to the container first, and that would take some time, especially as the size of their data grew. So we had inadvertently slowed startup down for them with this approach.
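Without a built-in per-prefix cap, any limit has to be enforced by the application itself, for example by summing object sizes under a student’s prefix before accepting a write. The sketch below shows that idea over an in-memory listing of `(key, size)` pairs; the key layout, the sample objects, and the function names are all hypothetical, and it is not how CS50 implemented anything.

```python
def prefix_usage_bytes(objects, prefix):
    """Sum the sizes of all objects whose key starts with `prefix`."""
    return sum(size for key, size in objects if key.startswith(prefix))


def within_quota(objects, prefix, quota_bytes):
    """True if the total size under `prefix` does not exceed the quota."""
    return prefix_usage_bytes(objects, prefix) <= quota_bytes


# Hypothetical listing of (key, size in bytes) pairs.
listing = [
    ("students/alice/hello.c", 1_200),
    ("students/alice/a.out", 16_000),
    ("students/bob/mario.c", 900),
]

QUOTA_BYTES = 2 * 1000**3  # the 2GB cap used as an example above

usage = prefix_usage_bytes(listing, "students/alice/")
print(usage, within_quota(listing, "students/alice/", QUOTA_BYTES))
```

A check like this has to run on every write and is racy under concurrent uploads, which is part of why it does not count as a clean solution.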

When we realized that neither a pure EBS nor a pure S3 option would work for our environment, we kept researching, and that’s when we found Portworx, with which we solved these problems much more simply. We could have one or more EBS volumes per host even when running hundreds of containers, and we don’t have to worry about things like which availability zone these volumes are in, mounting them on the EC2 instance, or formatting them and creating a file system initially. Using these few EBS volumes, we could then virtualize all the storage and thinly allocate it for each student to save on costs. And finally, it allowed us to very easily back up or archive certain container volumes to S3 using Portworx CloudSnap when they are not being used.

Could you talk a little bit about the architecture of the CS50 IDE using containers and Portworx?

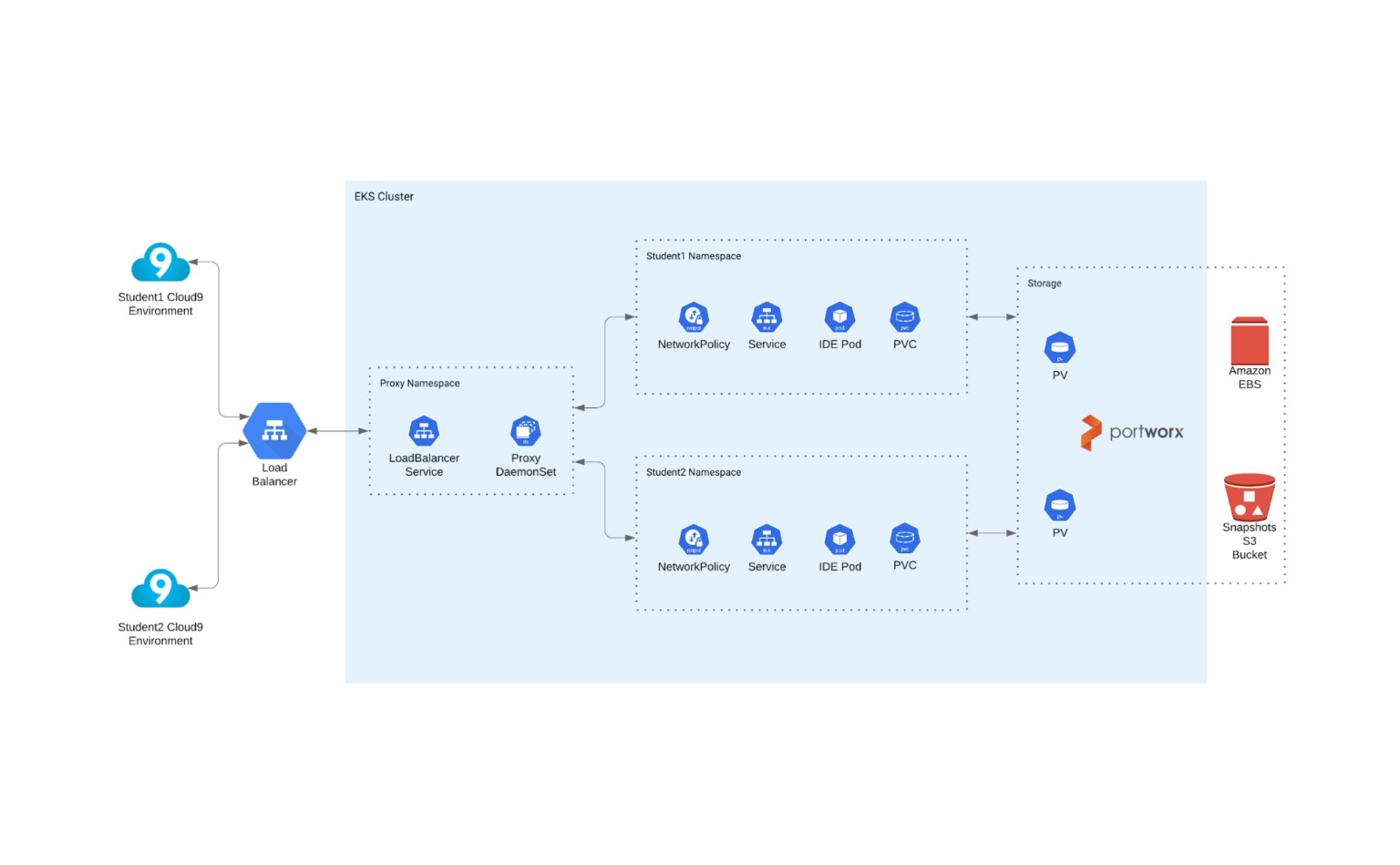

Sure. We have a Cloud9 frontend backed by an EKS cluster running on AWS where each student’s IDE is backed by a single container pod with a 1GB Portworx volume that we mount into the IDE container as its home folder to allow students to persist files.
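The pod-plus-volume pairing described above could be expressed as two Kubernetes manifests, built here as plain Python dictionaries. This is a minimal sketch, not CS50’s actual configuration: the storage class name `portworx-sc`, the image `example/ide:latest`, the mount path `/home/student`, and the naming scheme are all assumptions. The `1Gi` request approximates the 1GB volume mentioned in the interview.

```python
import json


def claim_name(student_id):
    """Hypothetical naming scheme for a student's volume claim."""
    return f"home-{student_id}"


def build_pvc(student_id, storage_class="portworx-sc", size="1Gi"):
    """PersistentVolumeClaim for one student's home directory."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": claim_name(student_id)},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,  # assumed class name
            "resources": {"requests": {"storage": size}},
        },
    }


def build_pod(student_id, image="example/ide:latest", home="/home/student"):
    """Single-container pod that mounts the claim as the home folder."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": f"ide-{student_id}"},
        "spec": {
            "containers": [{
                "name": "ide",
                "image": image,  # placeholder image
                "volumeMounts": [{"name": "home", "mountPath": home}],
            }],
            "volumes": [{
                "name": "home",
                "persistentVolumeClaim": {"claimName": claim_name(student_id)},
            }],
        },
    }


pvc = build_pvc("alice")
pod = build_pod("alice")
print(json.dumps(pvc, indent=2))
print(json.dumps(pod, indent=2))
```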

When a student has been idle for a while, we delete the pod to recycle some of the compute resources. If they stay idle for a lot longer, we eventually recycle their storage too. We have a cron job that archives their volume to S3 using the Portworx CloudSnap feature and then deletes the PVC, which ends up deleting the volume. If they eventually come back, we simply create a new PVC for a volume based on the latest snapshot on S3 and re-mount it into their IDE container, and the student can resume their work.
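The two-stage recycling policy described above can be sketched as a small decision function. The thresholds (one hour before the pod is deleted, 30 days before the volume is archived) are invented for illustration, since the interview does not give the real values, and the actual archive and delete steps are left out.

```python
from datetime import datetime, timedelta
from enum import Enum


class Action(Enum):
    KEEP = "keep"
    DELETE_POD = "delete_pod"
    ARCHIVE_AND_DELETE_PVC = "archive_and_delete_pvc"


# Hypothetical thresholds; the real values are not stated in the interview.
POD_IDLE_LIMIT = timedelta(hours=1)
VOLUME_IDLE_LIMIT = timedelta(days=30)


def next_action(last_active, now, pod_running, has_volume):
    """Decide what a periodic cleanup job should do for one student."""
    idle = now - last_active
    if pod_running and idle >= POD_IDLE_LIMIT:
        return Action.DELETE_POD  # reclaim compute first
    if has_volume and not pod_running and idle >= VOLUME_IDLE_LIMIT:
        return Action.ARCHIVE_AND_DELETE_PVC  # snapshot to S3, then drop PVC
    return Action.KEEP


now = datetime(2019, 1, 31, 12, 0)
print(next_action(now - timedelta(minutes=5), now, True, True))
print(next_action(now - timedelta(hours=3), now, True, True))
print(next_action(now - timedelta(days=45), now, False, True))
```

Checking the pod before the volume means storage is only recycled once compute has already been released, which matches the order described above.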

What advice would you give an architect in a similar position who’s approaching a similar problem?

David Malan: Try to avoid, when appropriate, reinventing wheels and building out solutions entirely on your own, as it’s a slippery slope time-wise, especially when the technologies involved are new. And, as much as you can, share your findings with others online. We would have loved to read about an experience like ours before diving into so many rabbit holes of implementation possibilities ourselves!

What Kareem did so wonderfully, pretty much by process of elimination, was to prototype every possible approach until we arrived at our final solution.
