This blog is part of Demystifying Kubernetes for the VMware Admin, a 10-part blog series that gives VMware admins a clear roadmap into Kubernetes. The series connects familiar VMware concepts to Kubernetes—covering compute, storage, networking, security, operations, and more—so teams can modernize with confidence and plan for what comes next.

Moving to Kubernetes doesn’t change your security mission. While the tools evolve, the risks remain. In this chapter, we’ll bridge proven vSphere security techniques into the cloud-native primitives required to maintain a consistent security posture across your entire stack.

You protect your VMware environment with layers. vCenter Single Sign-On authenticates users. Roles and permissions control what they do. The hypervisor isolates VMs from each other. VMware vDefend segments east-west traffic with distributed firewall rules. Every credential lives in a managed store, and compliance audits verify policies remain enforced.

Kubernetes security follows the same layered approach. Authentication verifies identity. RBAC controls access. Pod security standards enforce workload isolation. Network policies segment traffic. Secrets store credentials. The goals are identical. The mechanisms differ.

This chapter maps your vSphere security knowledge to Kubernetes security concepts.

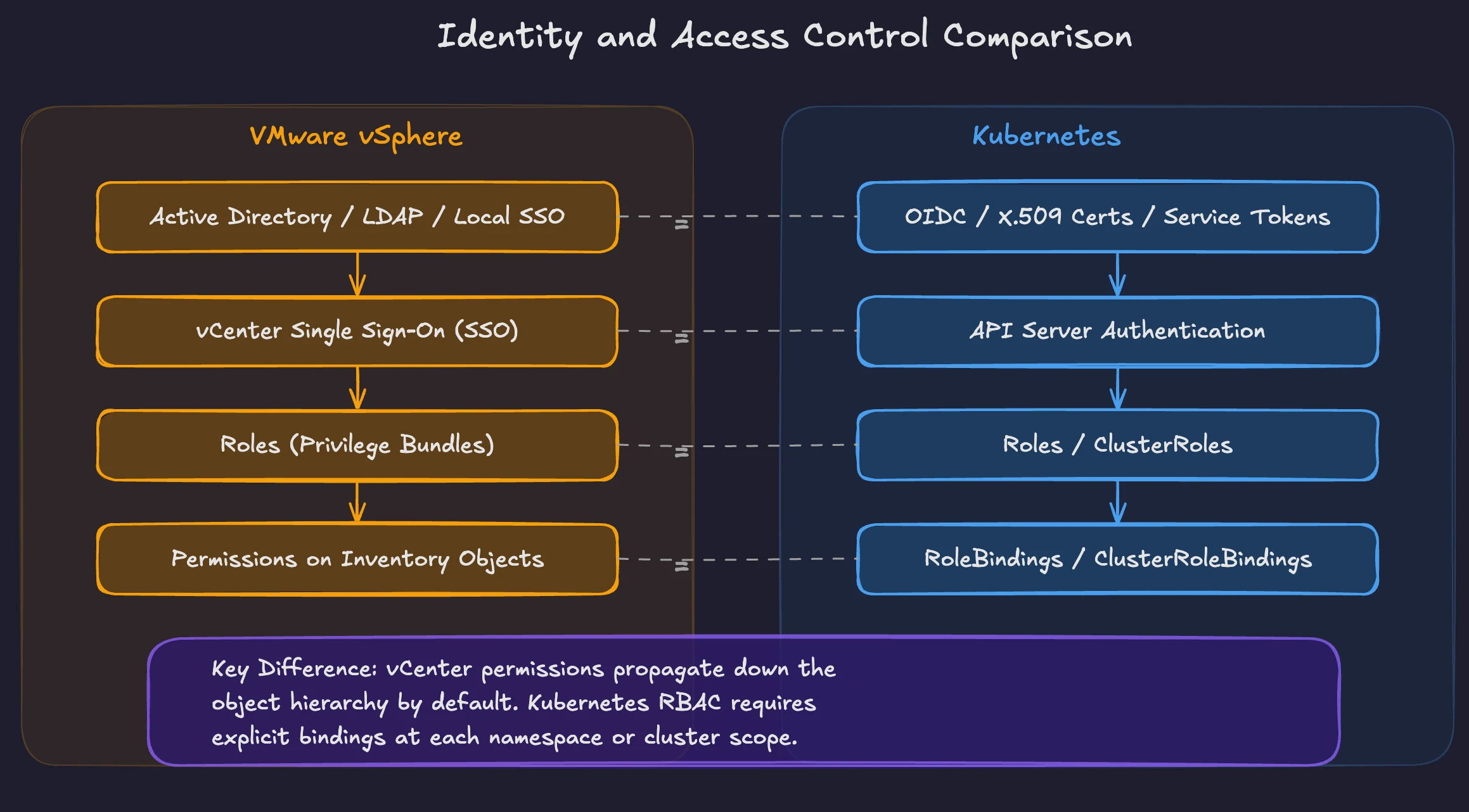

Identity and Access Control

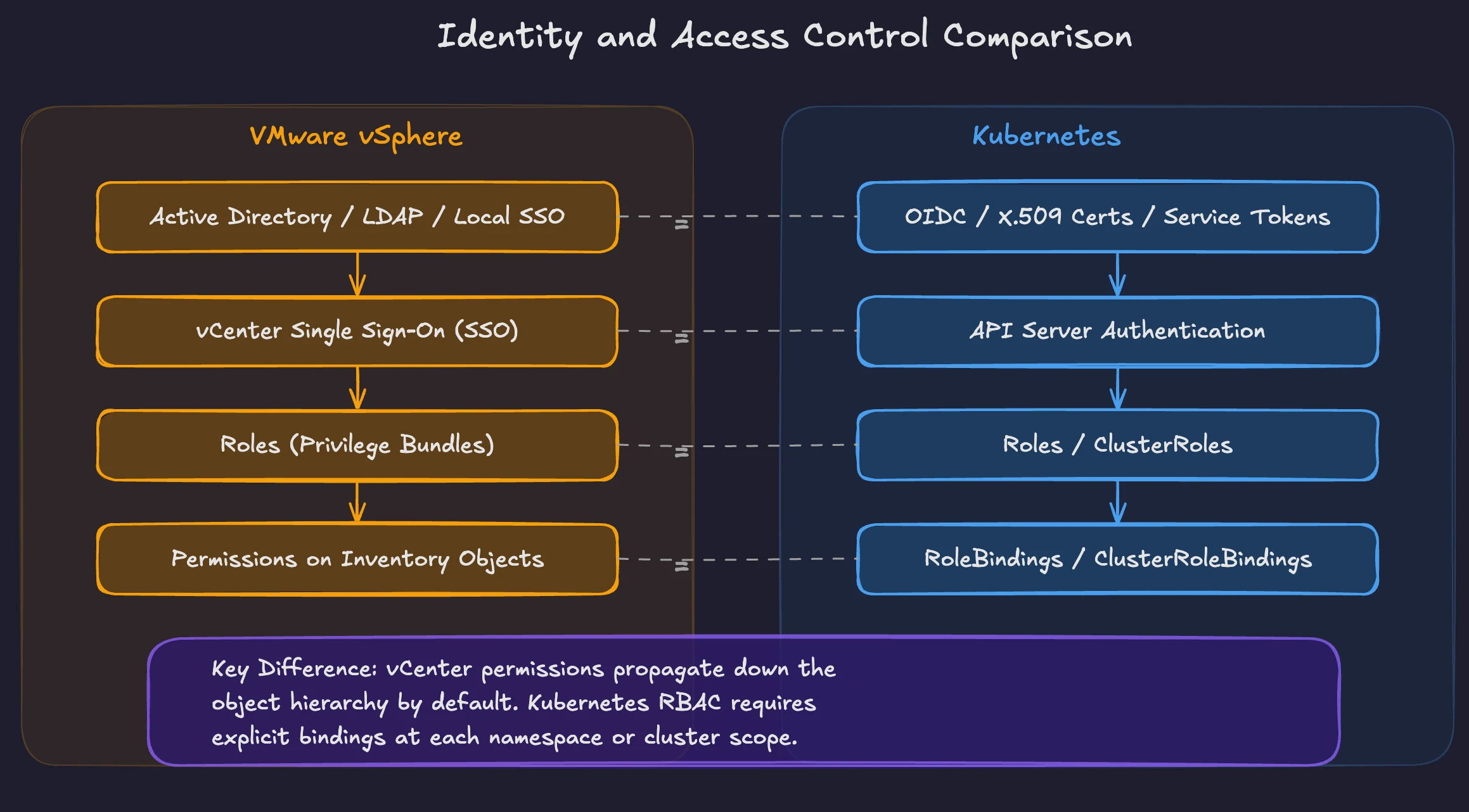

In vSphere, vCenter Single Sign-On (SSO) handles authentication. Users and groups come from Active Directory, LDAP, or the local SSO domain. Once authenticated, the permission model determines what each user can do. You assign roles to users or groups on specific objects in the vCenter inventory hierarchy. A role bundles a set of privileges. The Administrator role grants full control. The Read-Only role limits users to viewing. You create custom roles to grant specific combinations of privileges. Permissions propagate down the object hierarchy unless you override them at a lower level.

Kubernetes separates authentication from authorization. Authentication happens before any API request reaches the authorization layer. Kubernetes has no built-in user directory for human accounts. Instead, the API server relies on external mechanisms to identify human users, such as X.509 client certificates and OIDC tokens from providers like Okta or Microsoft Entra ID (formerly Azure AD). Kubernetes natively manages service accounts, which are first-class identities within the cluster. Pods use service accounts to authenticate with the API server and access cluster resources. The API server validates the token or certificate and extracts the identity for authorization.
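To make the identity-extraction step concrete, here is a minimal sketch that decodes the payload of a JWT-shaped token and reads its subject claim. It only decodes and performs no signature verification, which the API server always does before trusting a token. The token is fabricated for illustration, the issuer value is a placeholder, and the `payments` namespace and `api` service account are hypothetical.

```python
import base64
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, the way JWT segments are encoded."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def decode_claims(token: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying its signature."""
    payload = token.split(".")[1]
    payload += "=" * (-len(payload) % 4)  # restore the stripped padding
    return json.loads(base64.urlsafe_b64decode(payload))


# Fabricated token: header.payload.signature (the signature is a placeholder).
claims = {
    "iss": "https://issuer.example.com",  # placeholder issuer
    "sub": "system:serviceaccount:payments:api",  # hypothetical service account
}
token = ".".join([
    b64url(json.dumps({"alg": "RS256", "typ": "JWT"}).encode()),
    b64url(json.dumps(claims).encode()),
    "placeholder-signature",
])

identity = decode_claims(token)["sub"]
print(identity)
```

The subject string follows the `system:serviceaccount:<namespace>:<name>` form that Kubernetes uses as the username for a service account, and that username is what RBAC bindings then match against.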

Authorization uses Role-Based Access Control (RBAC). The model has four objects: Roles, ClusterRoles, RoleBindings, and ClusterRoleBindings. A Role defines permissions within a single namespace. A ClusterRole defines permissions across the entire cluster. A RoleBinding attaches a Role to a user, group, or service account within a namespace. A ClusterRoleBinding does the same at cluster scope.

The vSphere model maps to Kubernetes RBAC with a few key differences. vCenter roles apply to inventory objects such as datacenters, clusters, hosts, and VMs. Kubernetes Roles apply to API resources like pods, deployments, services, and secrets. vCenter permissions propagate through the object hierarchy by default. Kubernetes RBAC has no inheritance between namespaces: a RoleBinding grants access only within its own namespace, and a ClusterRoleBinding grants access cluster-wide. You must create explicit bindings at each scope.

In vSphere, a common pattern grants a team the Virtual Machine Power User role on a specific resource pool. In Kubernetes, the equivalent creates a Role with permissions to manage deployments, pods, and services within a namespace, then binds the Role to the team’s group.
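The namespace-scoped pattern above can be sketched as a pair of manifests. The script below builds a Role and a RoleBinding as plain dictionaries and prints them as a JSON List, which `kubectl apply -f -` accepts. The namespace `team-a`, the role name `workload-manager`, and the group `team-a-developers` are hypothetical, and the verb list is one reasonable choice rather than a recommendation.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"


def team_role(namespace: str, name: str) -> dict:
    """Namespaced Role that allows managing deployments, pods, and services."""
    manage = ["get", "list", "watch", "create", "update", "patch", "delete"]
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "Role",
        "metadata": {"name": name, "namespace": namespace},
        "rules": [
            # Pods and services live in the core ("") API group.
            {"apiGroups": [""], "resources": ["pods", "services"], "verbs": manage},
            # Deployments live in the "apps" API group.
            {"apiGroups": ["apps"], "resources": ["deployments"], "verbs": manage},
        ],
    }


def bind_to_group(role: dict, group: str) -> dict:
    """RoleBinding that attaches the Role to a group in the same namespace."""
    meta = role["metadata"]
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{meta['name']}-binding", "namespace": meta["namespace"]},
        "subjects": [{"kind": "Group", "name": group, "apiGroup": RBAC_GROUP}],
        "roleRef": {"kind": "Role", "name": meta["name"], "apiGroup": RBAC_GROUP},
    }


role = team_role("team-a", "workload-manager")
binding = bind_to_group(role, "team-a-developers")
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [role, binding]}, indent=2))
```

The group name must match whatever group claim your identity provider sends; Kubernetes does not store or validate group membership itself.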

Figure 1: Kubernetes identity and access control versus VMware

Both systems share a core principle: least privilege. Grant only the permissions needed for the task. In vSphere, avoid using the Administrator role where a custom role suffices. In Kubernetes, avoid the cluster-admin ClusterRole unless the use case requires full cluster access. Audit RBAC bindings regularly. The kubectl auth can-i command verifies the actions a specific user or service account is allowed to perform.
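As a small illustration of that audit step, the helper below assembles a `kubectl auth can-i` invocation that impersonates a subject using kubectl's `--as` flag. It only builds and prints the command; running it requires a cluster and permission to impersonate. The namespace `team-a` and the service account `app` are hypothetical.

```python
import shlex


def can_i_command(verb: str, resource: str, namespace: str, as_user: str) -> list:
    """Build the argv for checking one verb/resource pair as another identity."""
    return [
        "kubectl", "auth", "can-i", verb, resource,
        "--namespace", namespace,
        "--as", as_user,
    ]


# Check whether a (hypothetical) service account may list Secrets in its namespace.
cmd = can_i_command("list", "secrets", "team-a", "system:serviceaccount:team-a:app")
print(shlex.join(cmd))
```

Looping this helper over a list of sensitive verb and resource pairs gives a quick, repeatable spot check to run alongside a periodic review of bindings.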

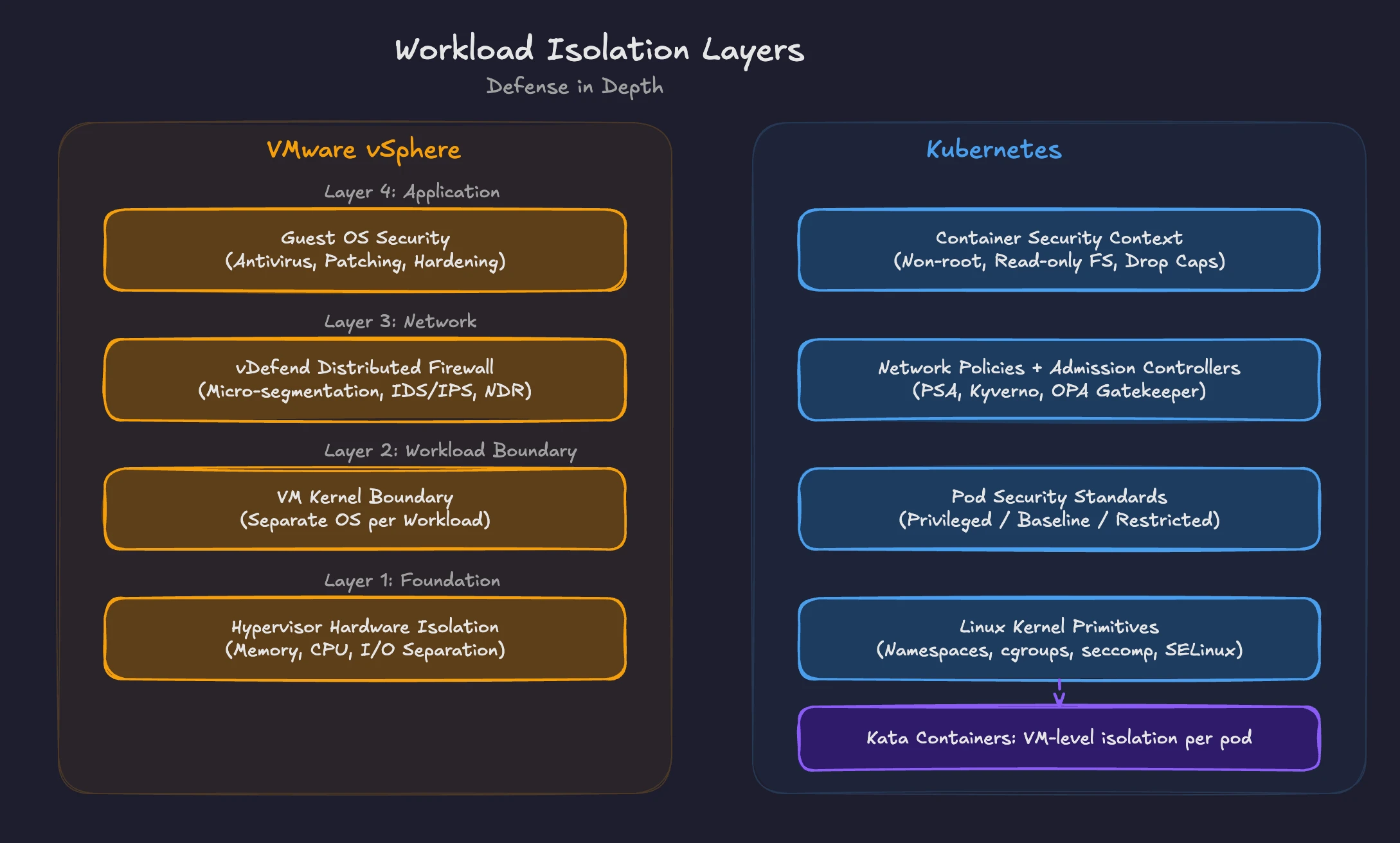

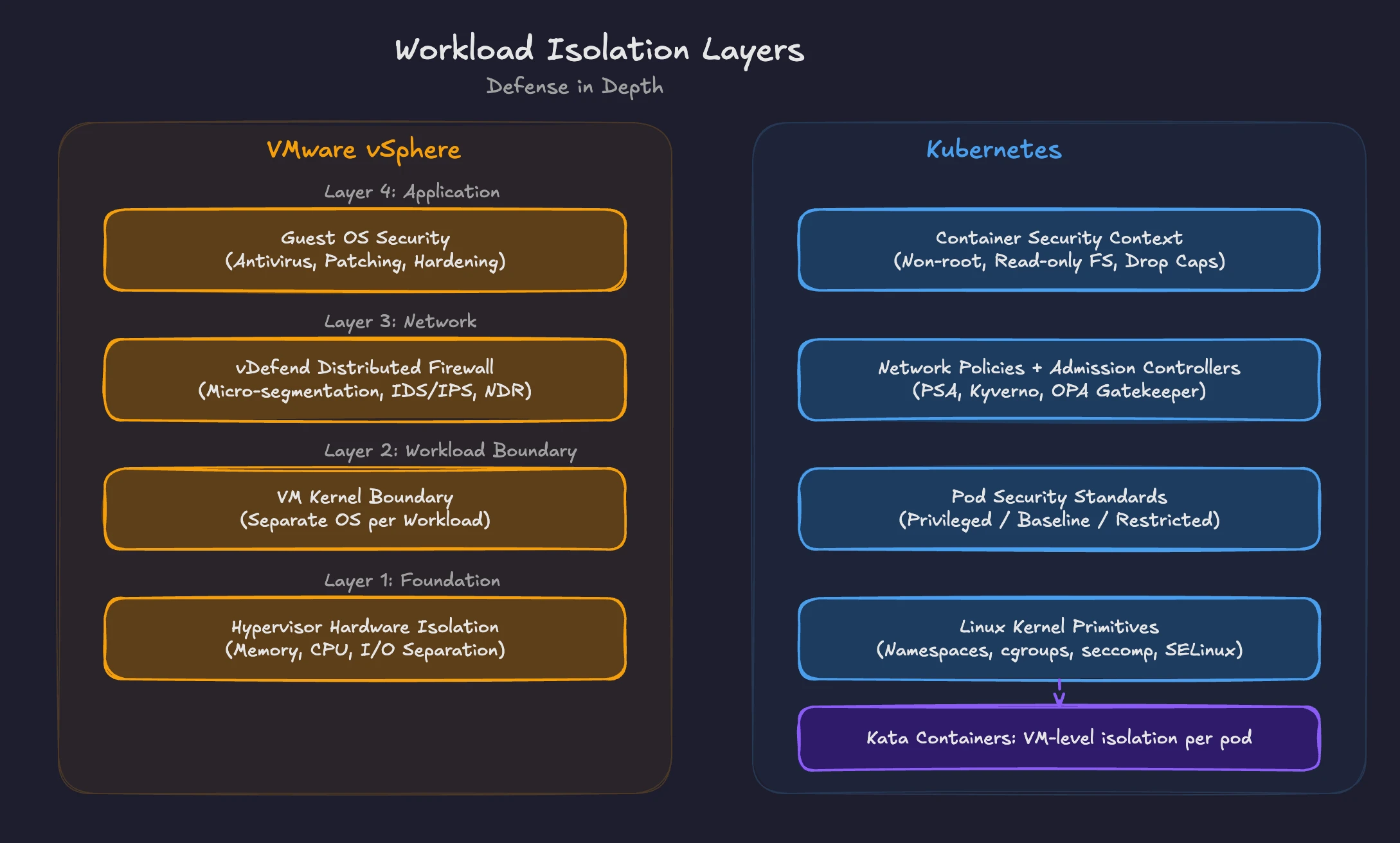

Workload Isolation

In vSphere, the hypervisor provides strong isolation between VMs. Each VM runs its own operating system kernel. The hypervisor enforces memory isolation, CPU scheduling boundaries, and separate virtual hardware. One compromised VM has no direct access to another VM’s memory or processes. VMware vDefend Distributed Firewall adds network-level micro-segmentation, controlling east-west traffic between VMs based on tags, groups, and Layer 7 rules. This combination of hypervisor isolation and network segmentation forms the security boundary for VMware workloads.

Kubernetes workload isolation operates differently. Containers on the same node share the host kernel. This shared-kernel model means that isolation depends on Linux security primitives: namespaces, cgroups, seccomp profiles, and AppArmor or SELinux policies. The isolation is strong when properly configured, but requires explicit enforcement.

Pod Security Standards (PSS) define three profiles for workload isolation. The Privileged profile imposes no restrictions. The Baseline profile blocks known privilege escalation paths. The Restricted profile enforces best practices, including running as non-root, restricting Linux capabilities, requiring seccomp profiles, and blocking privilege escalation. Many teams additionally enforce readOnlyRootFilesystem through Kyverno, Open Policy Agent Gatekeeper, or CEL policies, but this is not a built-in PSS Restricted control.

Pod Security Admission (PSA) enforces these standards. PSA is a built-in admission controller replacing the deprecated PodSecurityPolicy (removed in Kubernetes 1.25). You label namespaces with the desired enforcement level: enforce rejects non-compliant pods, warn generates warnings, and audit logs violations. A namespace labeled with the Restricted profile in enforce mode blocks any pod requesting root access or elevated privileges.
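The namespace labeling described above might look like the following sketch, which builds a Namespace manifest carrying the three PSA mode labels and prints it as JSON for `kubectl apply -f -`. The namespace name `payments` is hypothetical, and setting all three modes to the same profile is just one possible rollout choice.

```python
import json

PSA_PREFIX = "pod-security.kubernetes.io"


def psa_namespace(name: str, enforce: str = "restricted",
                  warn: str = "restricted", audit: str = "restricted") -> dict:
    """Namespace manifest with Pod Security Admission labels for each mode."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "labels": {
                f"{PSA_PREFIX}/enforce": enforce,  # reject non-compliant pods
                f"{PSA_PREFIX}/warn": warn,        # return warnings to the client
                f"{PSA_PREFIX}/audit": audit,      # annotate audit log entries
            },
        },
    }


namespace = psa_namespace("payments")
print(json.dumps(namespace, indent=2))
```

A common migration approach is to start with `enforce="baseline"` while keeping `warn` and `audit` at `restricted`, so teams see what would break before enforcement is tightened.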

The VMware model enforces isolation at the hypervisor layer with no guest OS configuration needed. The Kubernetes model shifts the responsibility for isolation to the platform team. You define security contexts on pods, apply PSA labels to namespaces, and use admission controllers to prevent unsafe configurations from reaching the cluster.
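To show what defining a security context involves, the sketch below builds a container spec with the fields the Restricted profile looks for, plus a deliberately simplified checker. The checker covers only four container-level fields and is an approximation for illustration, not the PSA implementation. The image reference `registry.example.com/app:1.0` is hypothetical.

```python
import json


def restricted_container(name: str, image: str) -> dict:
    """Container spec with securityContext fields the Restricted profile expects."""
    return {
        "name": name,
        "image": image,
        "securityContext": {
            "runAsNonRoot": True,
            "allowPrivilegeEscalation": False,
            "capabilities": {"drop": ["ALL"]},
            "seccompProfile": {"type": "RuntimeDefault"},
        },
    }


def violations(container: dict) -> list:
    """Simplified check of four Restricted-profile fields (container level only)."""
    ctx = container.get("securityContext", {})
    found = []
    if ctx.get("runAsNonRoot") is not True:
        found.append("runAsNonRoot must be true")
    if ctx.get("allowPrivilegeEscalation") is not False:
        found.append("allowPrivilegeEscalation must be false")
    if "ALL" not in ctx.get("capabilities", {}).get("drop", []):
        found.append("capabilities.drop must include ALL")
    if ctx.get("seccompProfile", {}).get("type") not in ("RuntimeDefault", "Localhost"):
        found.append("seccompProfile.type must be RuntimeDefault or Localhost")
    return found


pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "app"},
    "spec": {"containers": [restricted_container("app", "registry.example.com/app:1.0")]},
}
print(json.dumps(pod, indent=2))
print(violations(pod["spec"]["containers"][0]))
```

A container spec with no `securityContext` at all fails every check in this sketch, which mirrors the point above: nothing is locked down unless the platform team asks for it.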

Figure 2: Defense-in-depth with VMware vs Kubernetes

For stronger isolation, Kubernetes supports sandboxed container runtimes. gVisor interposes a user-space kernel between the container and the host kernel. Kata Containers runs each pod in a lightweight VM, providing hypervisor-level isolation similar to what VMware administrators expect. Organizations handling sensitive workloads often deploy these runtimes alongside standard containerd for workloads requiring stronger boundaries.
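Selecting a sandboxed runtime for a workload goes through a RuntimeClass object, which a pod references by name. The sketch below builds both manifests. The handler name `runsc` is an assumption: it must match whatever handler the node's container runtime is actually configured with, and the class name `sandboxed` and image are hypothetical.

```python
import json


def runtime_class(name: str, handler: str) -> dict:
    """RuntimeClass that maps a cluster-level name to a node runtime handler."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        "handler": handler,  # assumed handler name; depends on node configuration
    }


def sandboxed_pod(name: str, image: str, runtime_class_name: str) -> dict:
    """Pod that asks to run under the given RuntimeClass."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "runtimeClassName": runtime_class_name,
            "containers": [{"name": name, "image": image}],
        },
    }


rc = runtime_class("sandboxed", "runsc")
pod = sandboxed_pod("untrusted-job", "registry.example.com/job:1.0", rc["metadata"]["name"])
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [rc, pod]}, indent=2))
```

Pods that omit `runtimeClassName` keep using the node's default runtime, so the sandbox can be reserved for the workloads that need the stronger boundary.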

Admission controllers extend isolation enforcement beyond PSA. OPA Gatekeeper and Kyverno are the two leading policy engines. Both intercept API requests and validate them against policies before the objects persist in etcd. Gatekeeper uses the Rego policy language from the Open Policy Agent project. Kyverno uses Kubernetes-native YAML policies, making the tool more accessible for teams already comfortable with Kubernetes manifests. Kubernetes now includes ValidatingAdmissionPolicy (stable in v1.30), a built-in alternative using Common Expression Language (CEL), reducing the need for external policy engines in some scenarios.
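As one illustration of the built-in CEL option, the sketch below builds a ValidatingAdmissionPolicy that only admits Deployments whose container images come from an approved registry, together with the binding that activates it. The registry `registry.example.com`, the policy names, and the `environment: production` namespace label are hypothetical, and a real policy would likely also cover init containers and other workload kinds.

```python
import json

API_VERSION = "admissionregistration.k8s.io/v1"
APPROVED_REGISTRY = "registry.example.com/"  # hypothetical registry prefix

policy = {
    "apiVersion": API_VERSION,
    "kind": "ValidatingAdmissionPolicy",
    "metadata": {"name": "approved-registry"},
    "spec": {
        "failurePolicy": "Fail",
        "matchConstraints": {
            "resourceRules": [{
                "apiGroups": ["apps"],
                "apiVersions": ["v1"],
                "operations": ["CREATE", "UPDATE"],
                "resources": ["deployments"],
            }]
        },
        "validations": [{
            # CEL: every container image must start with the approved prefix.
            "expression": (
                "object.spec.template.spec.containers.all("
                f"c, c.image.startsWith('{APPROVED_REGISTRY}'))"
            ),
            "message": f"images must come from {APPROVED_REGISTRY}",
        }],
    },
}

binding = {
    "apiVersion": API_VERSION,
    "kind": "ValidatingAdmissionPolicyBinding",
    "metadata": {"name": "approved-registry-binding"},
    "spec": {
        "policyName": policy["metadata"]["name"],
        "validationActions": ["Deny"],
        # Apply only to namespaces carrying this (hypothetical) label.
        "matchResources": {
            "namespaceSelector": {"matchLabels": {"environment": "production"}}
        },
    },
}

print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [policy, binding]}, indent=2))
```

A policy has no effect until a binding references it, which lets you roll the same rule out namespace by namespace.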

A typical enforcement pipeline applies multiple layers. PSA blocks obviously unsafe pods at the namespace level. An admission controller enforces organizational policies, such as resource limits, approved container registries, and mandatory labels. Network policies segment pod-to-pod traffic. Together, these layers approximate the defense-in-depth VMware vDefend provides in the vSphere environment. Unlike vDefend, native Kubernetes NetworkPolicy is limited to L3/L4 and depends on a supporting CNI for enforcement. L7 controls, IDS/IPS, and NDR require additional tooling such as Cilium with Hubble, a service mesh, or dedicated security platforms.
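The network-policy layer can be sketched with two manifests: a default-deny policy for all ingress in a namespace, and an allow rule for one traffic path. The namespace `shop`, the `app` labels, and port 8080 are hypothetical, and the policies only take effect on a CNI that enforces NetworkPolicy.

```python
import json

API_VERSION = "networking.k8s.io/v1"


def default_deny_ingress(namespace: str) -> dict:
    """Select every pod in the namespace and allow no ingress traffic."""
    return {
        "apiVersion": API_VERSION,
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_ingress(namespace: str, to_app: str, from_app: str, port: int) -> dict:
    """Allow TCP traffic on one port from pods of one app to pods of another."""
    return {
        "apiVersion": API_VERSION,
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{from_app}-to-{to_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": to_app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                "from": [{"podSelector": {"matchLabels": {"app": from_app}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


deny = default_deny_ingress("shop")
allow = allow_ingress("shop", to_app="backend", from_app="frontend", port=8080)
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [deny, allow]}, indent=2))
```

NetworkPolicies are additive allow lists, so the deny-all baseline plus narrow allow rules plays a role similar to a default-deny distributed firewall section with explicit exceptions.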

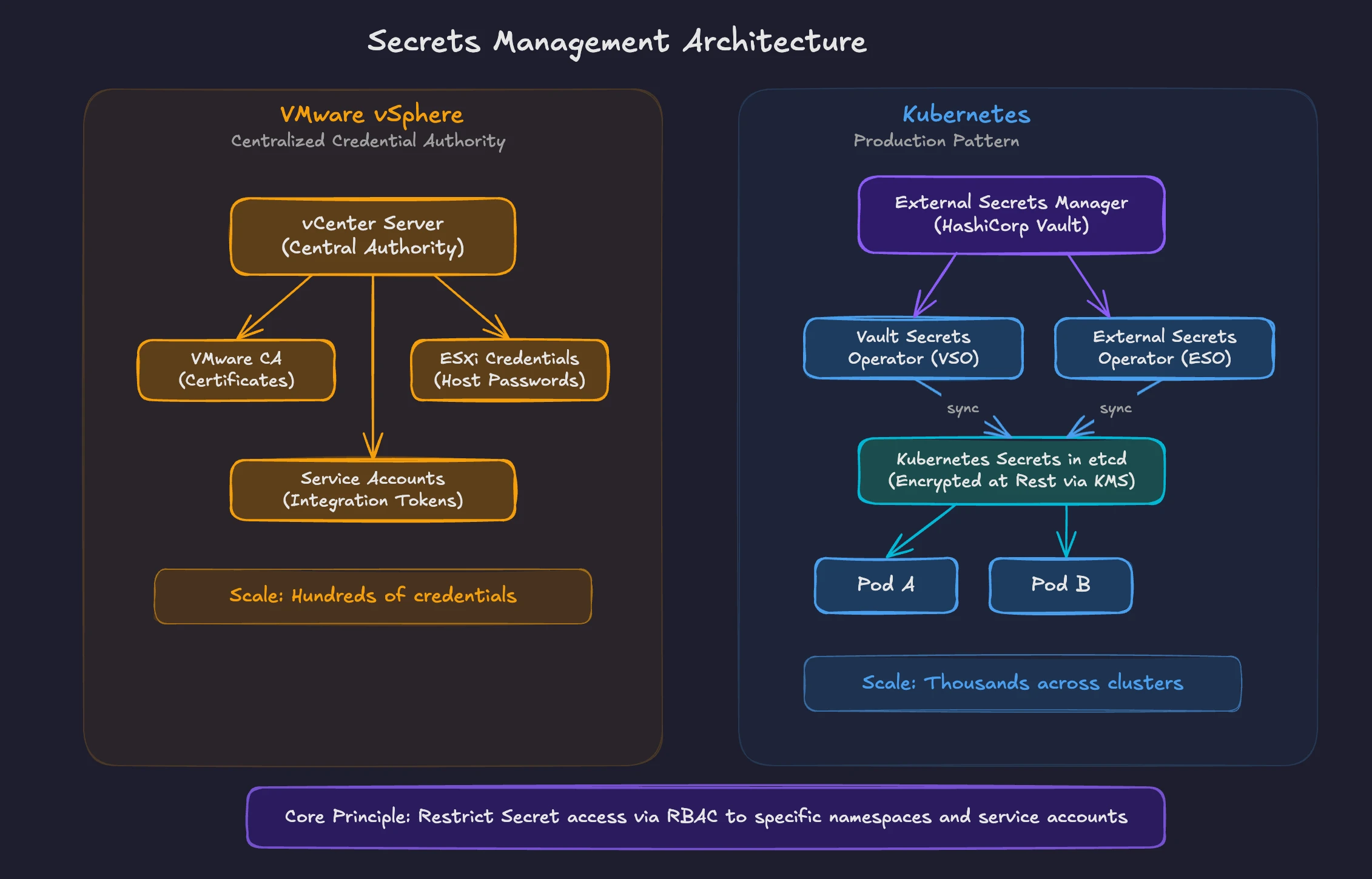

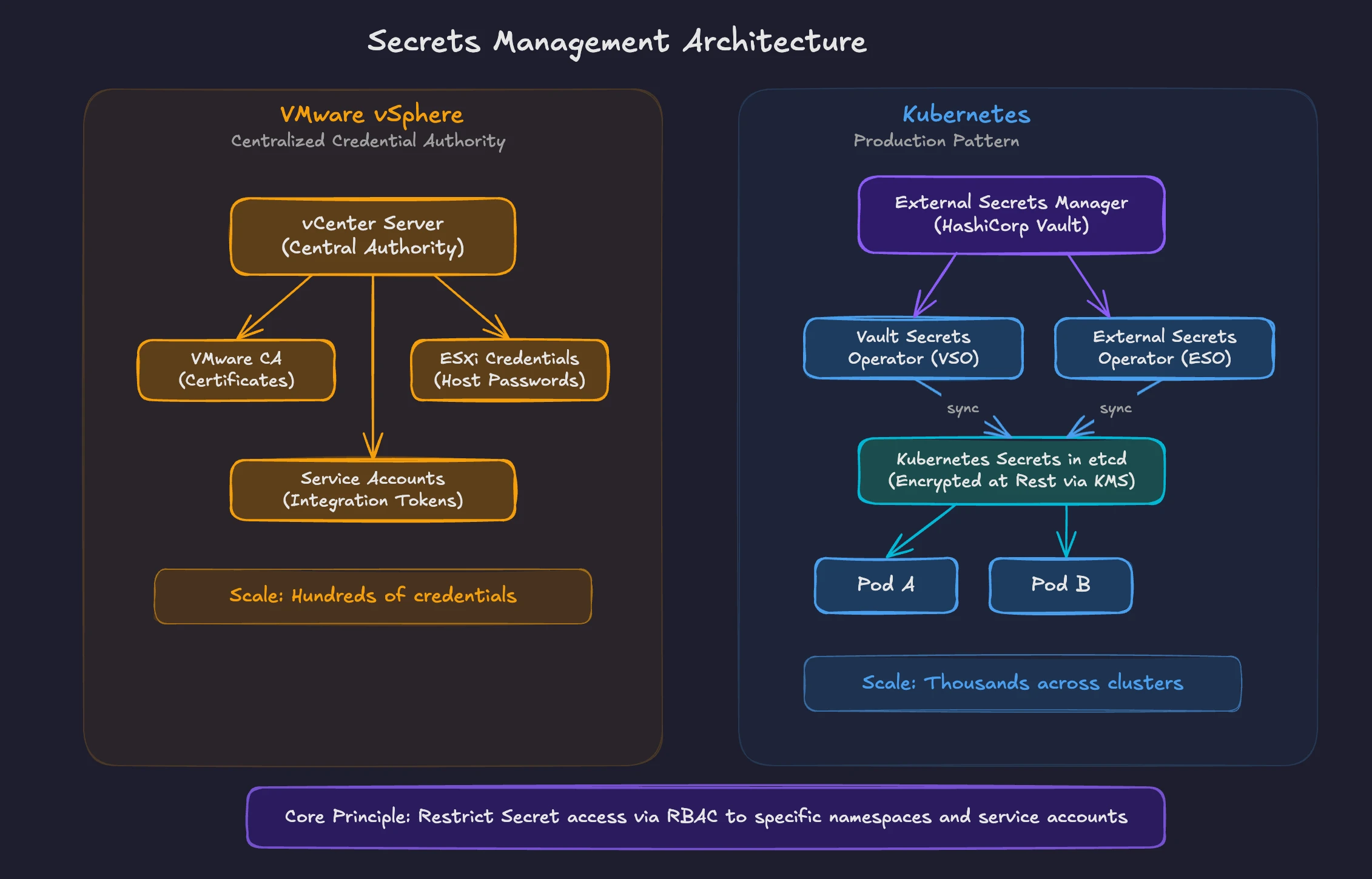

Secrets Management

In vSphere, credentials management is relatively contained. vCenter stores service account passwords, ESXi host credentials, and integration tokens. For vSphere platform components, identity and certificate trust are centralized through vCenter SSO and the VMware Certificate Authority (VMCA). Operational credentials and application secrets often reside in Active Directory, external vaults, or guest operating systems.

Kubernetes Secrets are the native mechanism for storing sensitive data like passwords, tokens, and certificates. A Secret is a Kubernetes API object stored in etcd. By default, Secrets are base64-encoded, not encrypted. This is an important distinction. Base64 encoding is not a security measure. Anyone with API access to read Secrets in a namespace sees the data in clear text after decoding.
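A few lines of Python show why base64 offers no protection. The value below is a made-up password; the round trip needs no key of any kind.

```python
import base64

plaintext = "s3cr3t-password"  # made-up example value

# This is the form a Secret's data field carries.
encoded = base64.b64encode(plaintext.encode()).decode()

# Anyone who can read the Secret can reverse it with no key at all.
decoded = base64.b64decode(encoded).decode()

print(encoded)
print(decoded)
```

The encoding exists so Secrets can carry arbitrary binary data in JSON or YAML, not to hide anything.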

To secure Secrets at rest, you enable encryption at the API server level. Kubernetes supports multiple encryption providers including AES-CBC, AES-GCM, and integration with external Key Management Services (KMS). With KMS integration, the encryption keys reside outside the cluster in a dedicated service like AWS KMS, Azure Key Vault, or Google Cloud KMS. The API server encrypts Secrets before writing them to etcd and decrypts them on read.
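For a sense of what the API server configuration looks like, the sketch below generates an EncryptionConfiguration that encrypts Secrets with the AES-CBC provider and a freshly generated 32-byte key. The key name `key1` is arbitrary. This assumes a self-managed control plane where you can pass the file to the API server through `--encryption-provider-config`; in production a KMS provider is generally preferable to a key stored on disk.

```python
import base64
import json
import secrets


def encryption_config(key_name: str = "key1") -> dict:
    """EncryptionConfiguration that encrypts Secrets with a new AES-CBC key."""
    key = base64.b64encode(secrets.token_bytes(32)).decode()  # random 32-byte key
    return {
        "apiVersion": "apiserver.config.k8s.io/v1",
        "kind": "EncryptionConfiguration",
        "resources": [{
            "resources": ["secrets"],
            "providers": [
                # The first provider is the one used to encrypt new writes.
                {"aescbc": {"keys": [{"name": key_name, "secret": key}]}},
                # identity (no encryption) stays last so existing plaintext
                # Secrets remain readable until they are rewritten.
                {"identity": {}},
            ],
        }],
    }


config = encryption_config()
print(json.dumps(config, indent=2))  # JSON is also valid YAML
```

Existing Secrets are only encrypted when they are next written, so enabling the configuration is normally followed by rewriting every Secret once.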

In many production environments, organizations integrate an external secrets manager with Kubernetes. HashiCorp Vault is one of the most widely deployed external secrets managers. The Vault Secrets Operator (VSO) syncs secrets from Vault into Kubernetes-native Secret objects. Applications can consume Kubernetes Secrets without requiring direct Vault access. The External Secrets Operator (ESO) provides the same pattern for AWS Secrets Manager, Azure Key Vault, Google Cloud Secret Manager, and other backends.
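The sync pattern can be sketched as an ExternalSecret resource for the External Secrets Operator. Treat the details as assumptions: the `apiVersion` depends on the ESO release installed in your cluster, and the store name `vault-backend`, the remote key `apps/payments/db`, and the property names are hypothetical.

```python
import json


def external_secret(name: str, namespace: str, store: str, remote_key: str,
                    properties: list,
                    api_version: str = "external-secrets.io/v1beta1") -> dict:
    """ExternalSecret that copies selected properties into a Kubernetes Secret."""
    return {
        "apiVersion": api_version,  # assumption: check your installed ESO version
        "kind": "ExternalSecret",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "refreshInterval": "1h",  # how often to re-read the backend
            "secretStoreRef": {"name": store, "kind": "ClusterSecretStore"},
            "target": {"name": name},  # name of the Secret to create
            "data": [
                {"secretKey": prop, "remoteRef": {"key": remote_key, "property": prop}}
                for prop in properties
            ],
        },
    }


es = external_secret("db-credentials", "payments", "vault-backend",
                     "apps/payments/db", ["username", "password"])
print(json.dumps(es, indent=2))
```

The application only ever mounts the resulting `db-credentials` Secret, so backend credentials and access policy stay with the operator rather than with each workload.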

This architecture mirrors what vSphere administrators already do with credential management systems. The difference is scope. A VMware environment typically has hundreds of credentials managed through vCenter. A Kubernetes environment with microservices often manages thousands of secrets across multiple namespaces, clusters, and cloud providers. Automation through operators and dynamic secret generation becomes essential at this scale.

Both VMware and Kubernetes share a common vulnerability: overly broad access to credentials. In vSphere, granting a team full Administrator access to vCenter exposes every credential. In Kubernetes, granting read access to Secrets across all namespaces does the same. RBAC policies should restrict Secret access to the specific namespaces and service accounts requiring them.
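One way to narrow Secret access is a Role that names the exact Secret and grants only `get`, bound to a single service account. The sketch below builds that pair. The namespace `payments`, the Secret `db-credentials`, and the service account `api` are hypothetical.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"


def secret_reader_role(namespace: str, secret_name: str) -> dict:
    """Role that allows reading one named Secret and nothing else."""
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "Role",
        "metadata": {"name": f"read-{secret_name}", "namespace": namespace},
        "rules": [{
            "apiGroups": [""],
            "resources": ["secrets"],
            "resourceNames": [secret_name],  # restrict the rule to this Secret
            "verbs": ["get"],                # no list or watch
        }],
    }


def bind_to_service_account(role: dict, service_account: str) -> dict:
    """RoleBinding that grants the Role to one service account."""
    meta = role["metadata"]
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{meta['name']}-{service_account}",
                     "namespace": meta["namespace"]},
        "subjects": [{"kind": "ServiceAccount", "name": service_account,
                      "namespace": meta["namespace"]}],
        "roleRef": {"kind": "Role", "name": meta["name"], "apiGroup": RBAC_GROUP},
    }


role = secret_reader_role("payments", "db-credentials")
binding = bind_to_service_account(role, "api")
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [role, binding]}, indent=2))
```

Leaving out `list` and `watch` is deliberate in this sketch: a subject that can list Secrets in a namespace can read every value in it.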

Policy Enforcement and Compliance

VMware provides the vSphere Security Configuration Guide (formerly the Hardening Guide) for each release. This guide defines security settings administrators should configure and audit. VMware vDefend adds runtime policy enforcement through the Distributed Firewall, IDS/IPS, and Network Detection and Response (NDR). Compliance frameworks like PCI DSS, HIPAA, and SOC 2 have established audit procedures for VMware environments. VMware Cloud Foundation (VCF) Advanced Cyber Compliance, announced at VMware Explore 2025, addresses compliance automation specifically for regulated industries.

Kubernetes policy enforcement is distributed across multiple components. RBAC controls API access. PSA enforces pod security. Admission controllers validate resource configurations. Network policies segment traffic. Each component handles a specific enforcement domain.

The CNCF ecosystem provides tooling for compliance automation. Kyverno and OPA Gatekeeper enforce policies at admission time. Kyverno generates compliance reports showing which resources comply with defined policies and which violate them. Falco provides runtime security monitoring, detecting anomalous behavior in containers like unexpected process execution, file access, or network connections. Falco maps detections to the MITRE ATT&CK framework, similar to how VMware vDefend NDR correlates threats.

The Center for Internet Security (CIS) publishes Kubernetes Benchmarks defining security best practices. Tools like kube-bench automate CIS Benchmark checks against running clusters. The NSA/CISA Kubernetes Hardening Guide provides additional government-grade security recommendations.

Figure 3: Comparing secrets management in VMware with Kubernetes

A significant difference exists in the maturity of audit and compliance tooling. VMware environments have decades of established compliance tooling, third-party integrations, and auditor familiarity. Kubernetes compliance tooling is maturing rapidly, but requires more platform team effort to implement and maintain. Organizations migrating from VMware should plan for this gap and invest in building compliance automation early in their Kubernetes adoption.

The Shared Responsibility Model

In vSphere, the responsibility model is straightforward. VMware provides the platform. Your infrastructure team manages hosts, storage, networking, and security configuration. Your team owns the full stack from hypervisor to guest OS.

Kubernetes introduces a shared responsibility model varying by deployment type. With self-managed Kubernetes (kubeadm, Kubespray), your team owns everything: control plane, worker nodes, networking, storage, and security configuration. With managed Kubernetes (EKS, AKS, GKE, and managed OpenShift offerings such as ROSA, ARO, and OpenShift Dedicated), the cloud provider or platform vendor manages the control plane. Your team manages worker node configuration, workload security, network policies, RBAC, and secrets management.

This split responsibility creates confusion for teams transitioning from VMware. In vSphere, you own the hypervisor and everything above the hardware layer. In managed Kubernetes, the provider owns the control plane, but you still own security configuration for namespaces, RBAC, pod security, network policies, and secrets. The provider does not enforce least-privilege RBAC for your workloads. The provider does not create network policies for your namespaces. The provider does not manage your secrets lifecycle.

VMware administrators moving to Kubernetes should map their existing security responsibilities to the Kubernetes model early in the transition. Define who owns RBAC policy creation and review. Define who enforces pod security standards. Define who manages the secrets lifecycle and rotation. Define who monitors for compliance violations and runtime threats. These responsibilities do not disappear. They are redistributed across platform and application teams in ways that require explicit documentation.

Your vSphere security knowledge transfers directly to Kubernetes. vCenter SSO maps to API server authentication with external identity providers and native service accounts. Roles and permissions map to RBAC with Roles, ClusterRoles, and their bindings. Hypervisor isolation and vDefend micro-segmentation map to Pod Security Standards, admission controllers, network policies, and sandboxed runtimes like Kata Containers. Credential management through vCenter maps to Kubernetes Secrets backed by external managers like HashiCorp Vault. The vSphere Security Configuration Guide and compliance automation map to CIS Benchmarks, Kyverno policy reports, and Falco runtime detection. The principles of least privilege, defense in depth, and compliance enforcement all apply. The implementations change. The discipline does not.

In Chapter 8, Day 2 Operations Lifecycle Management and Observability, you will learn how vRealize Operations and centralized logging translate to Prometheus, Grafana, and Kubernetes-native observability. You will see how backup, disaster recovery, and cluster lifecycle management work in the cloud-native model.
