We have seen an increasing number of organizations adopting Kubernetes for their production workloads, especially for running stateful applications. By choosing Kubernetes as the platform to run stateful applications, organizations can scale their applications faster, allowing their developers to be more efficient while also reducing their IT budget by 36%. According to a recent survey conducted by Pure Storage and Wakefield Research, 87% of the respondents expect the percentage of stateful workloads to increase over the next 12 months. Portworx understands this requirement and is continuously working on enhancing its storage layer so organizations can get the biggest bang for their buck when they use Kubernetes with Portworx.

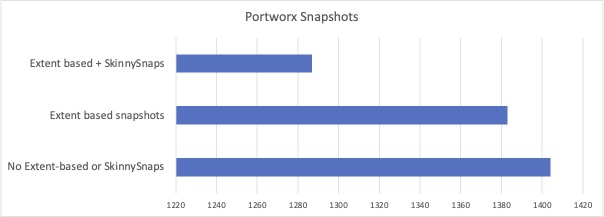

In this blog, we will talk about how Portworx has changed the way it stores snapshots so organizations can reduce their storage consumption. We will cover extent-based snapshots and SkinnySnaps and explain how they help administrators save on storage costs. Throughout the discussion we will use a simple application with 10 persistent volumes of 100GB each, where each volume is stored with three replicas on the Kubernetes cluster.

Extent-based snapshots

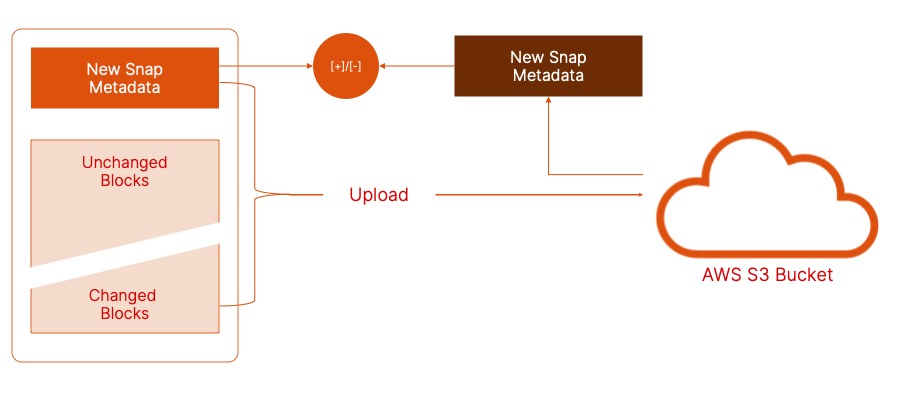

Portworx has long supported taking cloudsnaps of your persistent volumes and offloading them to a cloud object storage repository for longer-term retention. Cloudsnaps are incremental, so unchanged blocks are not copied to your backup repository. This reduces both the storage consumed in the backup repository and the network bandwidth used to transfer the blocks.

Portworx supports two methods for storing and comparing cloudsnaps: local snapshots and extent-based snapshots. If you choose local snapshots, Portworx keeps the most recent snapshot of every persistent volume locally on your Kubernetes cluster. It compares that snapshot with the next one to calculate the difference and uploads only the changed blocks to the backup repository. This means that you are storing a local snapshot for every persistent volume, and that snapshot consumes additional storage in proportion to the amount of data being overwritten.

Starting with the Portworx 2.8 release, extent-based snapshots are the default method for comparing and storing cloudsnaps. Extents are block metadata that Portworx uses to determine the difference between the latest snapshot and the previously uploaded cloudsnap. The extents are also stored in the backup repository and are pulled down for comparison. When taking extent-based snapshots, Portworx uses the following steps:

- Takes a persistent volume snapshot and calculates the extent.

- Downloads extent data for the previous snapshot from the cloud.

- Compares extents, looking for changed blocks or generations.

- Uploads the changes and new extent to the backup repository.

- Deletes the local snapshot.

By doing this, we save on the amount of local storage needed. In our example, this means we don’t need to store and pay for the additional local snapshots that would otherwise be kept only to calculate the difference between our latest and previous snapshots. To verify that extent-based snapshots are enabled on your Portworx cluster, or to enable them, you can use the following commands:

    # Verify that cloudsnap-using-metadata-enabled is set to on
    pxctl cluster options list

    # Enable extent-based snapshots
    pxctl cluster options update --cloudsnap-using-metadata-enabled on
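The five steps listed above can be illustrated with a small Python sketch. It is a simplified model and not Portworx's implementation: it assumes an extent map can be represented as a dictionary from block offset to a generation counter, and the names `ExtentMap`, `changed_blocks`, and `incremental_cloudsnap` are hypothetical.

```python
from typing import Dict, List

# Hypothetical model: an extent map records, for each block offset, the
# generation (write counter) at which that block was last modified.
ExtentMap = Dict[int, int]


def changed_blocks(previous: ExtentMap, current: ExtentMap) -> List[int]:
    """Return offsets that are new, or whose generation differs from the
    previously uploaded extent map."""
    return sorted(
        offset
        for offset, generation in current.items()
        if previous.get(offset) != generation
    )


def incremental_cloudsnap(previous: ExtentMap, current: ExtentMap) -> dict:
    """Plan an upload. `current` stands in for the extent computed from the
    fresh snapshot (step 1) and `previous` for the extent downloaded from
    the cloud (step 2)."""
    to_upload = changed_blocks(previous, current)  # step 3: compare extents
    return {
        "upload_blocks": to_upload,      # step 4: changed blocks only
        "upload_extent": dict(current),  # step 4: new extent for next time
        "delete_local_snapshot": True,   # step 5: nothing kept locally
    }


# Illustrative data only: offsets and generations are made up.
previous = {0: 1, 4096: 1, 8192: 2}
current = {0: 1, 4096: 3, 8192: 2, 12288: 1}
plan = incremental_cloudsnap(previous, current)
print(plan["upload_blocks"])  # [4096, 12288]
```

Only the block that was rewritten and the block that is new are uploaded. Because the comparison uses metadata fetched from the backup repository, no local snapshot needs to be retained between runs.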

SkinnySnaps

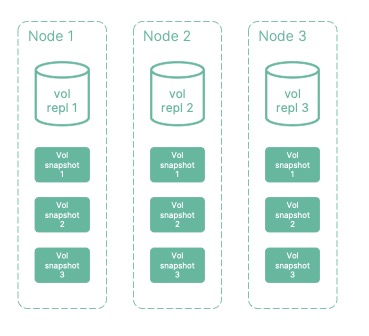

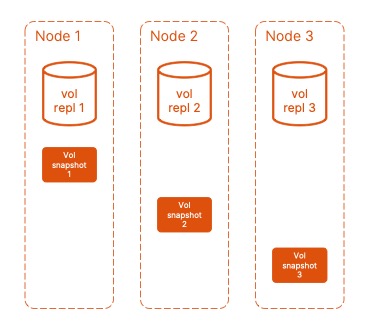

Portworx SkinnySnaps gives you a mechanism for controlling how your cluster takes and stores volume snapshots. It is another key enhancement introduced in the Portworx 2.8 release. Prior to 2.8, if you had a persistent volume with three replicas configured on your Portworx storage cluster, every snapshot of that persistent volume was stored with the same number of replicas. In our example, with 10 persistent volumes of three replicas each and a snapshot retention policy of 3, Portworx would store 90 incremental snapshot copies locally (10 volumes x 3 retained snapshots x 3 replicas), with all three replicas of each snapshot consuming additional space on your Portworx storage cluster.

We realized that this wasn’t the most efficient use of the available storage capacity, so we introduced SkinnySnaps. Beginning with the Portworx 2.8 release, users can enable SkinnySnaps through the CLI. Starting with the 2.9 release, SkinnySnaps will be the default way of creating local snapshots for cloudsnaps. With SkinnySnaps, you can configure the number of replicas you want to store for your snapshots, as long as it is fewer than the number of persistent volume replicas. You can choose to store just one replica of each snapshot. This allows you to optimize the amount of storage you use on your Kubernetes cluster while still being able to roll back to a previous version of your persistent volume.

There is one tradeoff for using SkinnySnaps: If you lose the node that is storing a specific snapshot version, you might not be able to roll back to it. You can work around this by storing two replicas for your snapshots instead of one. To verify that the SkinnySnaps feature is enabled and to configure the number of replicas stored for SkinnySnaps, you can use the following commands:

    # Verify SkinnySnaps is enabled
    pxctl cluster options list

    # Enable SkinnySnaps
    pxctl cluster options update --skinnysnap on

    # Configure SkinnySnaps replica count
    pxctl cluster options update --skinnysnap-num-repls 1
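To make the effect on the example application concrete, here is a minimal Python sketch that counts local snapshot copies for different snapshot replica settings. It counts copies only, not bytes, because the space each incremental snapshot consumes depends on how much data is overwritten. The function name `snapshot_copies` is hypothetical; the volume count, replica count, and retention value come from the example above.

```python
def snapshot_copies(volumes: int, retained: int, snapshot_replicas: int,
                    volume_replicas: int = 3) -> int:
    """Count local snapshot copies: volumes x retained snapshots x replicas."""
    if not 1 <= snapshot_replicas <= volume_replicas:
        raise ValueError("snapshot replicas must be between 1 and the "
                         "volume replica count")
    return volumes * retained * snapshot_replicas


VOLUMES = 10   # persistent volumes in the example application
RETAINED = 3   # snapshot retention policy from the example

baseline = snapshot_copies(VOLUMES, RETAINED, snapshot_replicas=3)
for replicas in (3, 2, 1):
    copies = snapshot_copies(VOLUMES, RETAINED, replicas)
    saved = 100 * (baseline - copies) / baseline
    print(f"{replicas} snapshot replica(s): {copies} copies "
          f"({saved:.0f}% fewer than full replication)")
```

With three snapshot replicas the count matches the 90 copies of the pre-2.8 behavior. Two replicas give 60 copies and one replica gives 30, which shows the choice between storage savings and the ability to survive the loss of a node holding a snapshot.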

By combining both features, organizations can see increased savings in the amount of storage consumed by their applications running on Kubernetes.

In addition to extent-based snapshots and SkinnySnaps, Portworx added support for cloudsnap throttling in the 2.8.1 release and for batch delete operations for cloudsnaps in the 2.9 release. With batch delete, Portworx issues a single API request to delete all the objects (up to 1000 objects) that belong to a single cloudsnap, rather than creating an individual API request for each object. This reduces the number of requests submitted to delete a cloudsnap and, with it, the per-request overhead of delete operations.
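The request savings from batch delete are easy to estimate. The sketch below is a back-of-the-envelope model, not Portworx code. The 1000-object limit comes from the text above, while the function name `delete_requests`, the object counts, and the assumption that a cloudsnap with more than 1000 objects is split into multiple batches are illustrative.

```python
import math

BATCH_LIMIT = 1000  # maximum objects per batch delete request, per the text


def delete_requests(object_count: int, batched: bool,
                    batch_limit: int = BATCH_LIMIT) -> int:
    """Estimate API requests needed to delete one cloudsnap's objects."""
    if object_count < 0:
        raise ValueError("object_count must be non-negative")
    if not batched:
        return object_count  # one request per object
    # Assumption: larger cloudsnaps are split into batches of batch_limit.
    return math.ceil(object_count / batch_limit)


# Hypothetical object counts for a single cloudsnap.
for count in (800, 2500):
    print(count, delete_requests(count, batched=False),
          delete_requests(count, batched=True))
```

For a cloudsnap of 800 objects this model gives 800 individual requests versus 1 batched request. For 2500 objects it gives 2500 versus 3.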

If you want to see how to get started with extent-based snapshots and SkinnySnaps and compare the difference in storage utilization, watch the demo that we posted on YouTube.

Bhavin Shah

Sr. Technical Marketing Manager | Cloud Native BU, Pure StorageExplore Related Content:

- kubernetes

- portworx

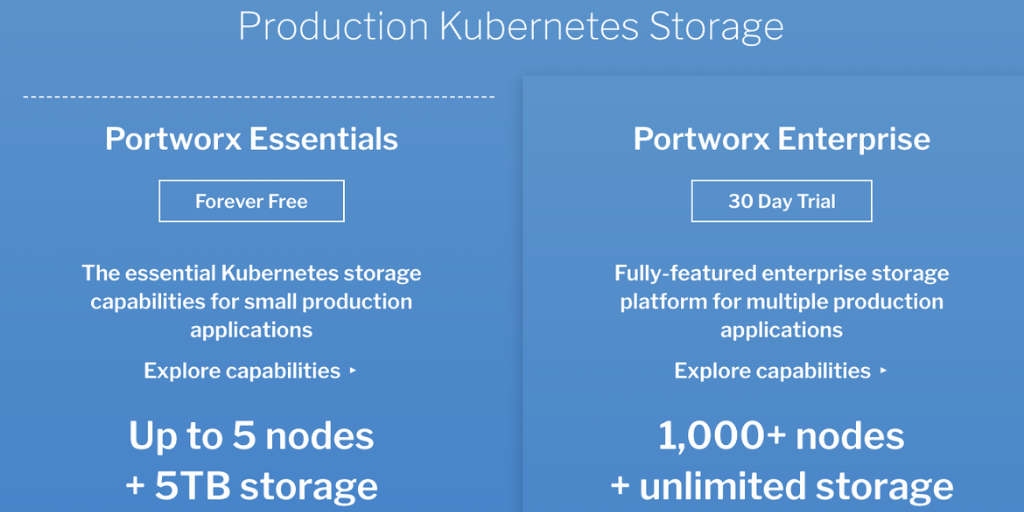

Announcing Portworx Essentials: The #1 Kubernetes storage platform for any app